Technologies

Gen AI Chatbots Are Starting to Remember You. Should You Let Them?

An AI model’s long memory can offer a better experience — or a worse one. Good thing you can turn it off.

Until recently, generative AI chatbots didn’t have the best memories: You’d tell one something and, when you came back later, you’d start again with a blank slate. Not anymore.

OpenAI started testing a stronger memory in ChatGPT last year and rolled out improvements this month. Grok, the flagship tool of Elon Musk’s xAI, also just got a better memory.

It took significant improvements in math and technology to get here, but the real-world benefits seem pretty simple: You can get more consistent and personalized results without having to repeat yourself.

«If it’s able to incorporate every chat I’ve had before, it does not need me to provide all that information the next time,» said Shashank Srivastava, assistant professor of computer science at the University of North Carolina at Chapel Hill.

Those longer memories can ease some frustrations with chatbots, but they also pose new challenges. Just as when you talk to a person, what you said yesterday might influence your interactions today.

Here’s a look at how the bots came to have better memories and what it means for you.

Improving an AI model’s memory

For starters, it isn’t quite a «memory.» Mostly, these tools work by incorporating past conversations alongside your latest query. «In effect, it’s as simple as if you just took all your past conversations and combined them into one large prompt,» said Aditya Grover, assistant professor of computer science at UCLA.
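As a rough illustration of Grover’s description, the sketch below stitches earlier conversations and the newest question into one prompt string. The function name `build_prompt`, the bracketed labels and the sample chats are hypothetical and chosen only for illustration. Real chatbots use their own formats, which this does not claim to reproduce.

```python
def build_prompt(past_conversations, latest_query):
    """Combine earlier chats and the newest question into one prompt string."""
    sections = []
    for number, conversation in enumerate(past_conversations, start=1):
        # Each conversation is a list of (speaker, text) pairs.
        lines = [f"{speaker}: {text}" for speaker, text in conversation]
        sections.append(f"[Past conversation {number}]\n" + "\n".join(lines))
    # The newest question goes last, after all of the remembered context.
    sections.append(f"[Current question]\nuser: {latest_query}")
    return "\n\n".join(sections)


# Hypothetical sample data.
past = [
    [("user", "Any good ramen spots in San Francisco?"),
     ("assistant", "Here are a few places to try.")],
    [("user", "My sister loves hiking."),
     ("assistant", "Noted.")],
]
prompt = build_prompt(past, "What should I get my sister for her birthday?")
print(prompt)
```

The longer the history, the longer this combined prompt becomes, which is why the size of the model’s context window matters.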

Those large prompts are now possible because the latest AI models have significantly larger «context windows» than their predecessors. The context window is, essentially, how much text a model can consider at once, measured in tokens. A token might be a word or part of a word (as a rule of thumb, OpenAI says one token is about three-quarters of a word).

Early large language models had context windows of 4,000 or 8,000 tokens — a few thousand words. A few years ago, if you asked ChatGPT something, it could consider roughly as much text as is in this recent CNET cover story on smart thermostats. Google’s Gemini 2.0 Flash now has a context window of a million tokens. That’s a bit longer than Leo Tolstoy’s epic novel War and Peace. Those improvements are driven by some technical advances in how LLMs work, creating faster ways to generate connections between words, Srivastava said.
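To make the arithmetic concrete, here is a small sketch that applies the three-quarters-of-a-word rule of thumb to the context window sizes mentioned above. The helper names are hypothetical, and counting whitespace-separated words is only an approximation. Real tokenizers split text differently, so treat the results as ballpark figures.

```python
WORDS_PER_TOKEN = 0.75  # rule of thumb quoted above: one token is about 3/4 of a word


def tokens_to_words(tokens):
    """Approximate how many English words fit in a given number of tokens."""
    return int(tokens * WORDS_PER_TOKEN)


def estimate_tokens(text):
    """Rough token estimate from a whitespace word count (not a real tokenizer)."""
    words = len(text.split())
    return round(words / WORDS_PER_TOKEN)


def fits_in_window(text, context_window_tokens):
    """Check whether the estimated token count fits in the context window."""
    return estimate_tokens(text) <= context_window_tokens


for window in (4_000, 8_000, 1_000_000):
    print(f"{window:>9,} tokens is roughly {tokens_to_words(window):,} words")
```

Under this rule of thumb, the three windows work out to roughly 3,000, 6,000 and 750,000 words.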

Other techniques can also boost a model’s memory and ability to answer a question. One is retrieval-augmented generation, in which the model can run a search or otherwise pull up documents as needed to answer a question, without always keeping all of that information in the context window. Instead of having a massive amount of information available at all times, it just needs to know how to find the right resource, like a researcher perusing a library’s card catalog.
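The retrieval idea can be sketched in a few lines. This toy version ranks documents by how many words they share with the question and puts only the best match into the prompt. The function names, the word-overlap scoring and the sample library are assumptions made for illustration. Production systems typically rely on search engines or learned embeddings rather than simple word matching.

```python
def score(query, document):
    """Count how many distinct query words also appear in the document."""
    query_words = set(query.lower().split())
    document_words = set(document.lower().split())
    return len(query_words & document_words)


def retrieve(query, documents, top_k=1):
    """Return the top_k documents sharing the most words with the query."""
    ranked = sorted(documents, key=lambda doc: score(query, doc), reverse=True)
    return ranked[:top_k]


def build_augmented_prompt(query, documents, top_k=1):
    """Place only the retrieved text, not the whole library, ahead of the question."""
    context = "\n".join(retrieve(query, documents, top_k))
    return f"Context:\n{context}\n\nQuestion: {query}"


# Hypothetical three-document "library".
library = [
    "smart thermostats can lower heating bills in winter",
    "war and peace is a long novel by leo tolstoy",
    "power banks with pass-through charging can charge devices while plugged in",
]
print(build_augmented_prompt("who wrote war and peace", library))
```

Only the Tolstoy sentence ends up in the prompt. The other two documents stay in the library and never take up space in the context window.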


Why context matters for a chatbot

The more an LLM knows about you from its past interactions with you, the better suited to your needs its answers will be. That’s the goal of having a chatbot that can remember your old conversations.

For example, if you ask an LLM with no memory of you what the weather is, it’ll probably follow up first by asking where you are. One that can remember past conversations, however, might know that you often ask it for advice about restaurants or other things in San Francisco and assume that’s your location. «It’s more user-friendly if the system knows more about you,» Grover said.

A chatbot with a longer memory can provide you with more specific answers. If you ask it to suggest a gift for a family member’s birthday and tell it some details about that family member, it won’t need as much context when you ask again next year. «That would mean smoother conversations because you don’t need to repeat yourself,» Srivastava said.

A long memory, however, can have its downsides.

You can (and maybe should) tell AI to forget

Having a chatbot recommend a gift poses a conundrum that’s all too common in human memories: You told your aunt you liked airplanes when you were 12 years old, and decades later you still get airplane-themed gifts from her. An LLM that remembers things about you could bias itself too much toward something you told it before.

«There’s definitely that possibility that you can lose your control and that this personalization could haunt you,» Srivastava said. «Instead of getting an unbiased, fresh perspective, its judgment might always be colored by previous interactions.»

LLMs typically allow you to tell them to forget certain things or to exclude some conversations from their memory.

You may also have things you don’t want an AI model to remember. If you’re sharing private or sensitive information with an LLM (and you should think twice about doing so at all), you probably want to turn off the memory function for those interactions.

Read the guidance on the tool you’re using to be sure you know what it’s remembering, how to turn it on and off and how to delete items from its memory.

Grover said this is an area where gen AI developers should be transparent and offer clear commands in the user interface. «I think they need to be providing more controls that are visible to the user, when to turn it on, when to turn it off,» he said. «Give a sense of urgency for the user base so they don’t get locked into defaults that are hard to find.»

How to turn off gen AI memory features

Here’s how to manage memory features in some common gen AI tools.

ChatGPT

OpenAI has a couple of types of memory in its models. One is called «reference saved memories,» and it stores details that you specifically ask ChatGPT to save, like your name or dietary preferences. Another, «reference chat history,» remembers information from past conversations (but not everything).

To turn off either of these features, go to Settings, then Personalization, and toggle the items off.

You can ask ChatGPT what it remembers about you and ask it to forget something it has remembered. To completely delete this information, you can delete the saved memories in Settings and the chat where you saved that information.

Gemini

Google’s Gemini model can remember things you’ve discussed or summarize past conversations.

To modify or delete these memories, or to turn off the feature entirely, you can go into your Gemini Apps Activity menu.

Grok

Elon Musk’s xAI announced memory features in Grok this month and they’re turned on by default.

You can turn them off under Settings and Data Controls. The setting’s name differs between Grok.com, where it’s «Personalize Grok with your conversation history,» and the Android and iOS apps, where it’s «Personalize with memories.»


Google’s Pixel 10A Is Coming to Japan With an Exclusive Blue Edition and Special Wallpaper

This model comes with creatively designed stickers and a special look for Pixel’s 10th anniversary.

Don’t be blue: Google is releasing an Isai blue edition of the Pixel 10A to celebrate the Android phone line’s 10th anniversary, setting it apart with its own sticker set, specialized wallpaper and custom icons. But it’ll only be available in Japan.

Announced Tuesday on the Google Japan blog, the Isai blue Pixel 10A has a dark blue look and includes bonus decorations designed in collaboration with Japan’s Heralbony art company. These include an exclusive bumper case and stickers for customization.

This edition of the Pixel 10A will arrive in Japan on May 20, following the April 14 release of the Pixel 10A in its original colors of lavender, berry, fog and obsidian. The Isai blue model costs 94,900 yen, which roughly translates to $595, and includes 256GB of storage.

This makes it slightly less expensive than the US model’s 256GB edition, and it still comes with a number of fun extras at no additional cost.

Google’s creation of a country-specific model for Japan may also reflect strong sales in that market. In 2023, the IDC analytics firm (via 9to5Google) reported that the Pixel 7 series accounted for 10.7% of the country’s market share, a 527% increase from 2022.


Can’t Wait for New Emoji? Here’s How to Create Your Own on iPhone

Apple Intelligence-enabled iPhones can create custom emoji in a few easy steps.

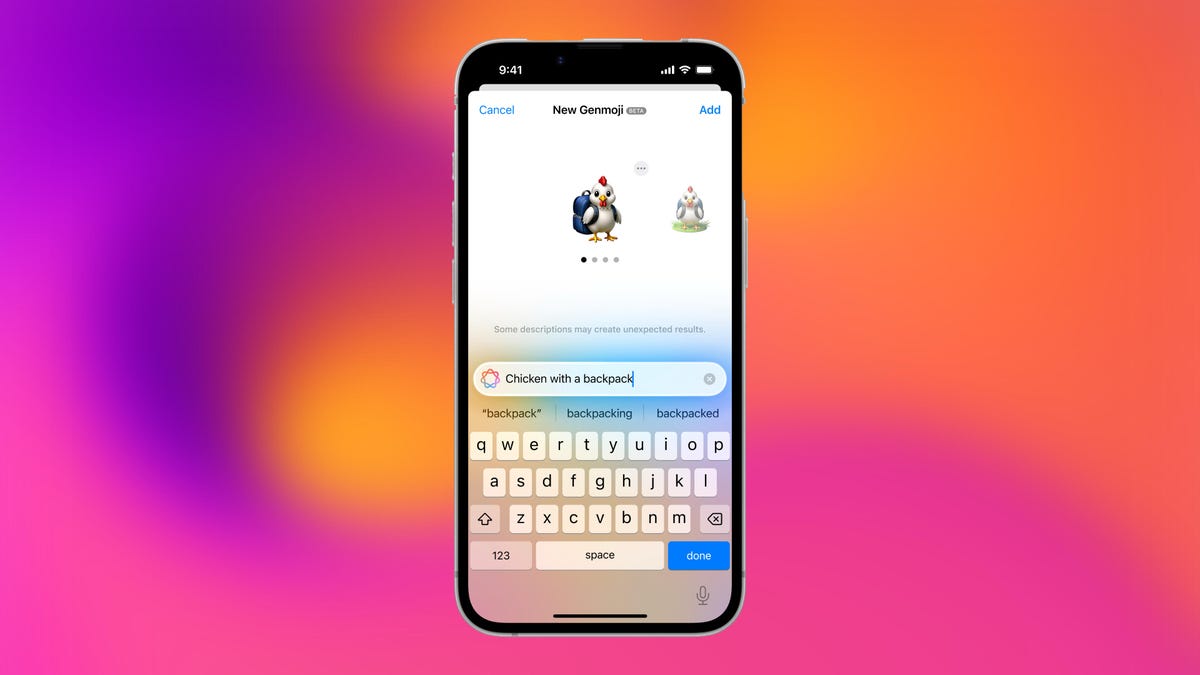

Apple brought new emoji to all iPhones when the company released iOS 26.4 on March 24. The new emoji include an orca, a distorted face and a hairy creature, or as we might normally call it, Sasquatch. According to Emojipedia, there are 3,953 emoji with more on the way, including a pickle. But there’s no emoji for a dog wearing pajamas, a plate with burgers and fries or many other things. If you have Genmoji on your iPhone, though, you can create these emoji and many more.

Apple released iOS 18.2 in 2024, and the company introduced its own emoji generator, called Genmoji, to Apple Intelligence-capable iPhones at that time. The Unicode Consortium, which maintains the Unicode Standard, a universal character encoding standard, is responsible for approving new emoji, and approved emoji are added to all devices once a year. With Genmoji, you don’t have to wait for new emoji to appear on your iPhone each year. You can just create them as you need them.

Read on to learn how to use Genmoji on iPhone to create your own custom emoji. Just note that only iPhones with Apple Intelligence, like the iPhone 17 lineup, can use Genmoji at this time.

Note: The new emoji may not display correctly for Apple users whose devices aren’t on a 26.4 software version.

How to make custom emoji

1. Open Messages and go into a chat.

2. Tap the plus (+) button next to your text box.

3. Tap Genmoji.

You can then type a description of an emoji into the text box near the bottom of your screen and tap the check mark on your keyboard to enter that description into Genmoji. You can also tap different suggestions and themes that are right above the text box. And with iOS 26 or later, you can also combine and use emoji to create others rather than describing a new emoji or using suggestions.

Your iPhone will generate a series of new emoji for you to pick from according to your description, and you can swipe through these new emoji. When you find the one you want, tap Add in the top right corner of your screen and the new emoji will be available to use as an emoji, tapback or sticker. Now you don’t have to wait for new emoji to be proposed, approved and brought to devices through the Unicode Standard.

For more iOS news, here’s what to know about iOS 26.4 and iOS 26.3. You can also check out our iOS 26 cheat sheet for other tips and tricks.


Save Over 20% on This Handy 10,000-mAh Anker Nano Power Bank

Keep your devices charged on the go with this Anker Nano power bank, now down to just $46.

We’ve spotted the Anker Nano 45-watt portable power bank for $46 at Amazon. That saves you $14, a 23% discount on its list price. Though it’s $6 more than the lowest-ever price we saw during Black Friday, it’s still a solid discount when you take the rising cost of tech accessories into account. It also matches the lowest price we’ve seen in 2026. It comes in four colors (black, green, pink and white), all on sale for the same price.

This Anker Nano portable charger weighs approximately 8.2 ounces and measures a compact 3.21×1.99×1.42 inches. Despite its small size, it has a retractable cable and supports fast charging in compatible Apple, Samsung, Google Pixel and other smartphones. It also has a large 10,000-mAh capacity and a smart display so you always know how much juice is left in your power bank.

The Nano can charge an iPhone 17 to up to 50% battery in an estimated 20 minutes, and is powerful enough to charge tablets and laptops. Need to charge your devices while charging your power bank? You can do so safely thanks to pass-through charging so you’ll never have to go without battery life.

We’ve also compiled a list of the best power banks for iPhones and for Android, in case this deal isn’t quite a fit for you.

Why this deal matters

If you travel, have a long commute or are otherwise always on the go, a portable charger can help you keep your devices fully powered. This 45-watt Anker Nano power bank is compact, includes a loop that lets you keep track of it easily and has a built-in cable so you don’t have to keep up with extra cords. Amazon’s $14 discount makes this a solid deal for anyone looking for a compact power bank.
