Technologies

FTC to AI Companies: Tell Us How You Protect Teens and Kids Who Use AI Companions

As more teens turn to AI for companionship, the investigation comes as no surprise.

The Federal Trade Commission is launching an investigation into AI chatbots from seven companies, including Alphabet, Meta and OpenAI, over their use as companions. The inquiry seeks to determine how the companies test, monitor and measure the potential harm to children and teens.

A Common Sense Media survey of 1,060 teens in April and May found that over 70% used AI companions and that more than 50% used them consistently — a few times or more per month.

Experts have been warning for some time that exposure to chatbots could be harmful to young people. A study revealed that ChatGPT, for instance, provided bad advice to teenagers, like how to conceal an eating disorder or how to personalize a suicide note. In some cases, chatbots have ignored comments that should have been recognized as concerning, instead simply continuing the previous conversation. Psychologists are calling for guardrails to protect young people, like reminders in the chat that the chatbot is not human, and for educators to prioritize AI literacy in schools.

There are plenty of adults, too, who’ve experienced negative consequences of relying on chatbots, whether for companionship and advice or as their personal search engine for facts and trusted sources. Chatbots more often than not tell you what they think you want to hear, which can lead to flat-out lies. And blindly following the instructions of a chatbot isn’t always the right thing to do.

“As AI technologies evolve, it is important to consider the effects chatbots can have on children,” FTC Chairman Andrew N. Ferguson said in a statement. “The study we’re launching today will help us better understand how AI firms are developing their products and the steps they are taking to protect children.”

A Character.ai spokesperson told CNET every conversation on the service has prominent disclaimers that all chats should be treated as fiction.

“In the past year we’ve rolled out many substantive safety features, including an entirely new under-18 experience and a Parental Insights feature,” the spokesperson said.

The company behind the Snapchat social network likewise said it has taken steps to reduce risks. “Since introducing My AI, Snap has harnessed its rigorous safety and privacy processes to create a product that is not only beneficial for our community, but is also transparent and clear about its capabilities and limitations,” a Snap spokesperson said.

Meta declined to comment, and neither the FTC nor any of the remaining four companies immediately responded to our request for comment. The FTC has issued orders and is seeking a teleconference with the seven companies, no later than Sept. 25, about the timing and format of their submissions. The companies under investigation include the makers of some of the biggest AI chatbots in the world or popular social networks that incorporate generative AI:

- Alphabet (parent company of Google)

- Character Technologies

- Instagram

- Meta Platforms

- OpenAI

- Snap

- X.ai

Starting late last year, some of those companies have updated or bolstered their protection features for younger individuals. Character.ai began imposing limits on how chatbots can respond to people under the age of 17 and added parental controls. Instagram introduced teen accounts last year and switched all users under the age of 17 to them, and Meta recently set limits on the subjects teens can discuss with chatbots.

The FTC is seeking information from the seven companies on how they:

- monetize user engagement

- process user inputs and generate outputs in response to user inquiries

- develop and approve characters

- measure, test, and monitor for negative impacts before and after deployment

- mitigate negative impacts, particularly to children

- employ disclosures, advertising and other representations to inform users and parents about features, capabilities, the intended audience, potential negative impacts and data collection and handling practices

- monitor and enforce compliance with company rules and terms of service (for example, community guidelines and age restrictions), and

- use or share personal information obtained through users’ conversations with the chatbots

Technologies

Google’s Pixel 10A Is Coming to Japan With an Exclusive Blue Edition and Special Wallpaper

This model comes with creatively designed stickers and a special look for Pixel’s 10th anniversary.

Don’t be blue: Google is releasing an Isai blue edition of the Pixel 10A to celebrate the Android phone line’s 10th anniversary, setting it apart with its own sticker set, specialized wallpaper and custom icons. But it’ll only be available in Japan.

Announced Tuesday on the Google Japan blog, the Isai blue Pixel 10A has a dark blue look and includes bonus decorations designed in collaboration with Japan’s Heralbony art company. These include an exclusive bumper case and stickers for customization.

This edition of the Pixel 10A will arrive in Japan on May 20, following the April 14 release of the Pixel 10A in its original colors of lavender, berry, fog and obsidian. The Isai blue model costs 94,900 yen, which roughly translates to $595, and includes 256GB of storage.

That makes it slightly less expensive than the US model’s 256GB edition, and it comes with a number of fun extras at no additional cost.

Google’s creation of a country-specific model for Japan may also reflect strong sales in that market. In 2023, the IDC analytics firm (via 9to5Google) reported that the Pixel 7 series accounted for 10.7% of the country’s market, a 527% increase from 2022.

Technologies

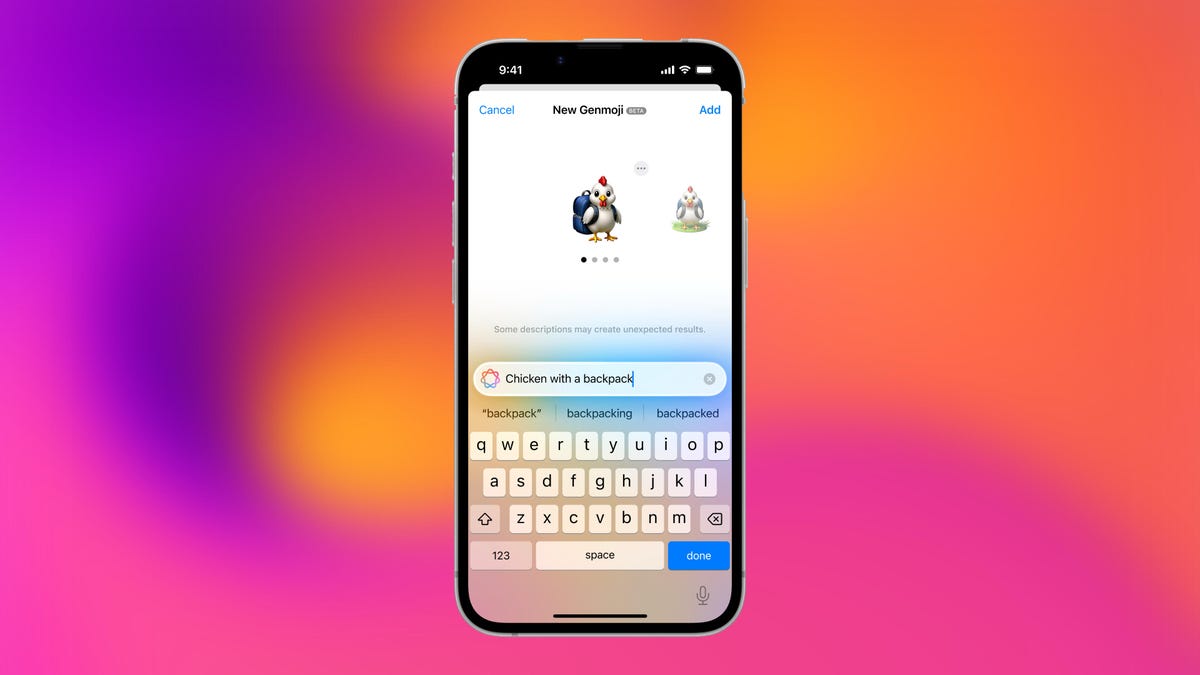

Can’t Wait for New Emoji? Here’s How to Create Your Own on iPhone

Apple Intelligence-enabled iPhones can create custom emoji in a few easy steps.

Apple brought new emoji to all iPhones when the company released iOS 26.4 on March 24. The new emoji include orca, distorted face and hairy creature, or as we might normally call it, Sasquatch. According to Emojipedia, there are 3,953 emoji with more on the way, including a pickle. But there’s no emoji for a dog wearing pajamas, a plate with burgers and fries and many other things. If you have Genmoji on your iPhone, you can create these emoji and many more.

Apple introduced its own emoji generator, called Genmoji, to Apple Intelligence-capable iPhones when it released iOS 18.2 in 2024. The Unicode Standard, a universal character encoding standard, is responsible for creating new emoji, and approved emoji are added to all devices once a year. With Genmoji, you don’t have to wait for new emoji to appear on your iPhone each year. You can just create them as you need them.

Read on to learn how to use Genmoji on iPhone to create your own custom emoji. Just note that only iPhones with Apple Intelligence, like the iPhone 17 lineup, can use Genmoji at this time.

Note: The new emoji may not display correctly for Apple users whose devices aren’t on a 26.4 software version.

How to make custom emoji

1. Open Messages and go into a chat.

2. Tap the plus (+) button next to your text box.

3. Tap Genmoji.

You can then type a description of an emoji into the text box near the bottom of your screen and tap the check mark on your keyboard to enter that description into Genmoji. You can also tap the suggestions and themes that appear right above the text box. And with iOS 26 or later, you can combine existing emoji to create new ones instead of typing a description or using suggestions.

Your iPhone will generate a series of new emoji for you to pick from according to your description, and you can swipe through these new emoji. When you find the one you want, tap Add in the top right corner of your screen and the new emoji will be available to use as an emoji, tapback or a sticker. Now you don’t have to wait for the Unicode Standard to propose, create and bring new emoji to devices.

For more iOS news, here’s what to know about iOS 26.4 and iOS 26.3. You can also check out our iOS 26 cheat sheet for other tips and tricks.

Technologies

Save Over 20% on This Handy 10,000-mAh Anker Nano Power Bank

Keep your devices charged on the go with this Anker Nano power bank, now down to just $46.

We’ve spotted the Anker Nano 45-watt portable power bank for just $46 at Amazon right now. That saves you $14, a 23% discount off its list price. Though it’s $6 more than the lowest-ever price we saw during Black Friday, it’s still a solid discount when you take the rising cost of tech accessories into account. It also matches the lowest price we’ve seen in 2026. It comes in four colors: black, green, pink and white. They’re all on sale for the same price.

This Anker Nano portable charger weighs approximately 8.2 ounces and measures a compact 3.21×1.99×1.42 inches. Despite its small size, it has a retractable cable and supports fast charging for compatible Apple, Samsung, Google Pixel and other smartphones. It also has a large 10,000-mAh capacity and a smart display so you always know how much juice is left in your power bank.

The Nano can charge an iPhone 17 up to 50% in an estimated 20 minutes, and is powerful enough to charge tablets and laptops. Need to charge your devices while charging your power bank? You can do so safely thanks to pass-through charging, so you’ll never have to go without battery life.

We’ve also compiled a list of the best power banks for iPhones and for Android, in case this deal isn’t quite a fit for you.

Why this deal matters

If you travel, have a long commute or are otherwise always on the go, a portable charger can help you keep your devices fully powered. This 45-watt Anker Nano power bank is compact, includes a loop that lets you keep track of it easily and has a built-in cable so you don’t have to keep up with extra cords. Amazon’s $14 discount makes this a solid deal for anyone looking for a compact power bank.
