Technologies

WWDC 2023 Biggest Reveals: Vision Pro Headset, iOS 17, MacBook Air and More

From its expected AR/VR headset to new Macs to software updates like iOS 17, here’s what Apple unveiled at WWDC.

Apple’s Worldwide Developers Conference kicked off on Monday with a keynote address showing everything coming to the company’s lineup of devices. WWDC is typically where the company gives us a first look at new software for iPhones, iPads, Apple Watches and Macs. But this year, Apple revealed a bevy of new hardware, too.

The big announcement was the debut of the Apple Vision Pro headset, a «new kind of computer» as Tim Cook put it in the presentation. But with MacBook Air and other Mac hardware announcements — including new silicon — as well as software upgrades, no corner of Apple’s ecosystem lacked for updates.


For a detailed summary of everything announced as it happened, give our live blog a look. Read on for the highlights of the presentation and links to our stories.

Apple Vision Pro, a new headset

The Apple Vision Pro is the company’s answer to the AR and VR headset race. It’s a personal display on your face with all the interface touches you’d expect from Apple, with an operating system that looks like a combination of iOS, MacOS and TVOS. And it’s not going to come cheap: The Apple Vision Pro will start at $3,499 when it ships early next year.

The device itself looks like other headsets, though the glass front hides cameras and even a curved OLED outer display (more on why later). The headset is secured to the wearer’s head with a wide rear band (no over-the-top strap), though as rumors suggested, there’s an external battery pack that connects over a cable and sits in your pocket. A large Apple Watch-style Digital Crown on the right side lets you dial immersion in and out, controlling how much of the outside world you see.

The Vision Pro uses three-element lenses in front of displays that Apple says deliver more than 4K resolution for each eye, and optical inserts can be swapped in for people who need vision correction. Audio pods are embedded within the band to sit over your ears, and «audio ray tracing» maps sound to your position. A suite of lidar and other sensors on the bottom of the headset tracks hand and body motions.

Technically speaking, the Vision Pro is a computer, with the same M2 chip found in Macs like the MacBook Air. A new R1 chip processes input from 12 cameras, five sensors and six microphones and works alongside the M2 to reduce lag, getting new images to the displays within 12 milliseconds. The Vision Pro runs the new VisionOS, which uses iOS frameworks, a 3D engine, foveated rendering and other software tricks to make what Apple calls «the first operating system designed from the ground up for spatial computing.»

Interior cameras track your facial movements, which are mirrored to others on FaceTime and other video chat apps.

Apple Vision Pro can scan your face to create a digital 3D avatar.

To keep users from being cut off from the outside world, the EyeSight feature uses inward-facing cameras and the headset’s outer display to show your eyes, essentially letting people around you see what your eyes are focused on. If you’ve dialed immersion all the way up, your eyes disappear from the outside screen. But you’re not totally cut off: if someone approaches you while you’re wearing the headset, they’ll fade into view.

The interface uses hand motions to control the device, though there are also voice controls. It’s hard to judge from a keynote how well these controls will work, and we’d expect users to need some time to adapt to working without a mouse and keyboard.

This isn’t just an entertainment device. Apple is pitching its first new product in eight years as a work-from-home and travel device, essentially letting you open as many windows as you want. It can also work in the office as a display for Macs, and it supports Apple’s Magic Keyboard and Trackpad.

The Vision Pro has Apple’s first 3D camera and can take spatial photos, which add 3D depth and binaural audio so you can relive moments with more immersion. That spatial experience extends to movies, which Apple said offer something «impossible to represent on a 2D screen,» a point the presentation kept returning to: people who haven’t worn a Vision Pro won’t really understand it until they try one. Disney CEO Bob Iger took the WWDC stage to vouch for the headset and followed it with a short video showing interactive 3D experiences that Vision Pro users will soon be able to enjoy on the Disney Plus streaming service.

Now that Apple has all these new cameras and eye tracking, it’s introduced Optic ID, which uses your eyes as an optical fingerprint to authenticate you and secure your data and purchases. Camera data is processed at the system level, so what the headset sees isn’t uploaded to the cloud.

Read more: Apple’s ‘One More Thing’ retrospective

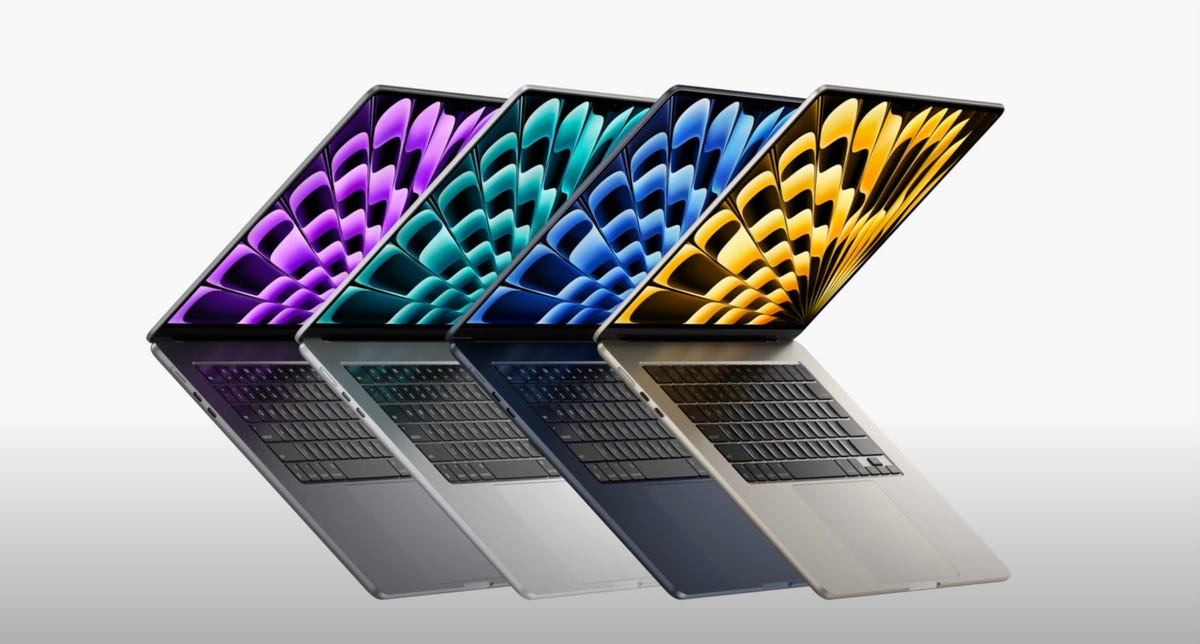

New MacBook Air 15

As was rumored, Apple announced a new MacBook Air 15, a larger version of the MacBook Air 13 that launched last year.

The MacBook Air 15 is powered by an M2 chip and gets up to 18 hours of battery life. Configurations go up to 24GB of memory and 2TB of storage, and prices start at $1,299 (or $1,199 with a student discount).

The 15-inch model is 11.5mm thick and weighs 3.3 pounds. It has two Thunderbolt ports, a MagSafe charging port and a 3.5mm headphone jack, plus a 1080p camera in a notch above the display, three microphones and six speakers with force-canceling subwoofers.

Read more: 15-inch MacBook Air M2 Preorder: Where to Buy Apple’s Latest Laptop

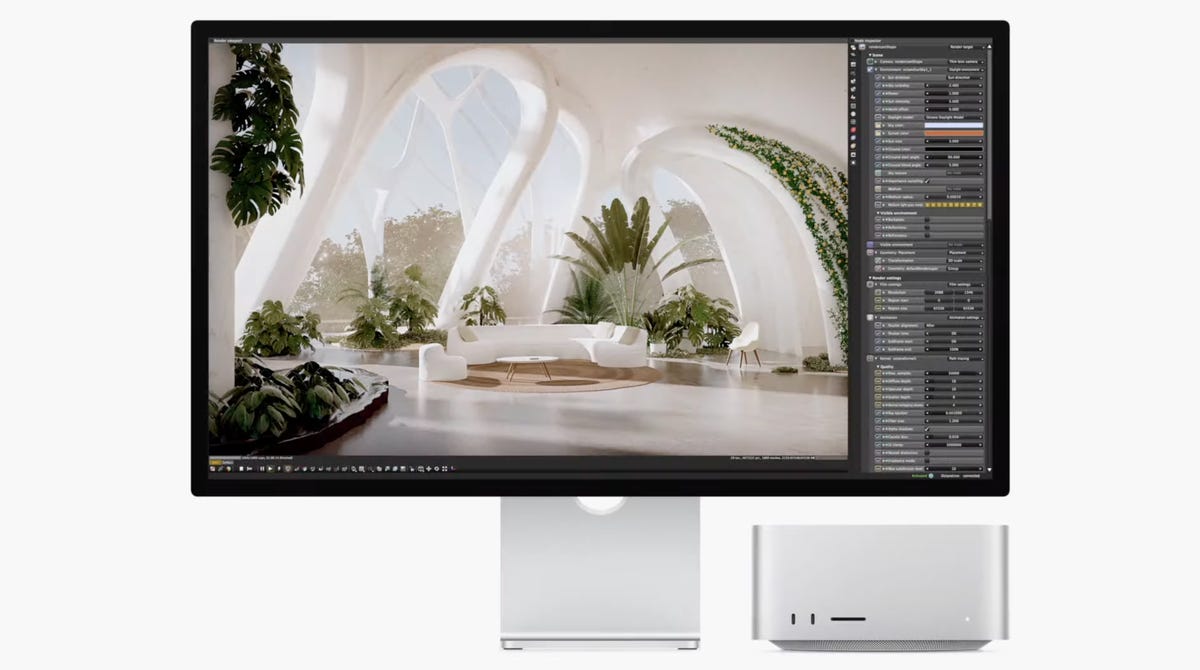

Mac Studio with M2

A new Mac Studio has landed with Apple’s latest silicon. It comes with either an M2 Max chip or the new M2 Ultra, which is essentially two M2 Max chips combined and supports up to 192GB of memory.

The M2 Ultra stole the spotlight with a 24-core CPU and the ability to play back up to 22 streams of 8K ProRes video at once. It can also drive up to six Pro Display XDR monitors.

The Mac Studio starts at $1,999 and will be available starting next week.

Mac Pro with M2 Ultra

Apple wasted no time announcing that its high-end Mac Pro desktop would get the M2 Ultra as well. The new Mac Pro gets the same M2 Ultra upgrades as the Studio, including support for up to 192GB of RAM.

The Mac Pro has eight Thunderbolt ports, two HDMI ports, dual 10Gb Ethernet ports and six open PCIe Gen 4 slots. The new Mac Pro comes in both tower and rack-mount versions.

The new Mac Pro starts at $6,999 and will be available starting next week.

iOS 17

iOS 17 brings a ton of quality-of-life improvements, and the iOS 17 developer beta is available to download now. In Messages, you can finally use more search filters. Besides pressing and holding a message to reply, you can now simply swipe on a specific message to reply to it, and audio messages will be transcribed.

Say goodbye to gray screens when you get calls: You can now assign full-screen photos or Memoji to contacts so they appear when those people call you. And if someone leaves a voicemail, you can see it transcribed in real time, which helps you screen calls if you don’t recognize a caller.


A new safety feature, Check In, sends a note to a trusted contact when you reach a location — like when you make it home safe after late-night travel. If it’s taking you longer to get to a destination, you’ll be prompted to extend the timer rather than alert your contact. It also shares your battery and signal status. Check In is end-to-end encrypted.

Last year, Apple introduced an iOS feature that lets you lift the subject out of a photo and paste it elsewhere, and now you can turn those cutouts into stickers, including animated ones made from Live Photos (essentially GIFs), to share with friends or use as responses in Messages. All emoji are now shareable stickers, too.

AirDrop has been a helpful tool for sending files between Apple devices, and now you can also share your contact info with NameDrop. You can choose which email addresses, phone numbers and other details to share.

Also, say goodbye to relying on Notes to jot down your thoughts: Journal is a new secure app for personal reflections. Apple is pitching it as a gratitude exercise, and iOS will suggest activities like songs you’ve listened to and workouts you’ve done to add to your log.

Apple Maps got an update that Google Maps users on Android have had for years: offline maps, which are especially helpful when you’re out of network range outdoors or want to conserve battery.

A new mode, StandBy, turns an iPhone into a bedside clock when it’s charging and turned on its side. It shows a large, easy-to-read clock face along with calendar and music controls.

Lastly, as was rumored, you won’t have to say «Hey Siri» anymore. Just saying «Siri» will bring up the voice assistant.

Read more: Apple Finally Lets You Type What You Ducking Mean on iOS 17

iPadOS 17

iPadOS 17 makes widgets interactive: rather than just showing info at a glance, they now have buttons that let you control your smart home or play music.

iPadOS 17 also brings the Health app to the iPad, with richer visualizations of sleep and activity data.

The next iPadOS update brings quality-of-life upgrades like more lock screen customization and multiple timers (helpful when cooking), as well as improvements to Stage Manager, the iPad’s feature for arranging multiple app windows.

With all the screen space on an iPad, Apple expanded what you can do with PDFs, which can be autofilled and signed from within iPadOS. iPad owners can collaborate in real time while tweaking PDFs, and the files can now be stored in the Notes app.

MacOS Sonoma

MacOS Sonoma, named after one of California’s most famous wine-producing areas, continues the WWDC theme of adding more widget functionality.

Sonoma also has gaming upgrades, including a new Game Mode that gives games priority on the CPU and GPU to improve frame rates. Apple is also paying attention to immersion, with lower latency for wireless controllers and wireless speakers or headsets. The company is courting developers, too, with a new Game Porting Toolkit and Metal 3. But the biggest gaming announcement is that legendary game creator Hideo Kojima’s opus Death Stranding is coming to Macs later this year. «We are actively working to bring our future titles to Apple platforms,» Kojima said during the WWDC presentation.

On the business side, the Mac improves videoconferencing with Presenter Overlay, which places you on screen on top of the content you’re sharing while you present. Apple also introduced new reactions, like a shower of confetti for congratulations, that can be triggered with hand gestures.

Passwords and passkeys (the passwordless sign-in technology Apple introduced last year) stored in the end-to-end encrypted iCloud Keychain can now be shared with a group of trusted contacts, and everyone in the group can add and update the shared passwords.

Safari gets security updates, including locking private browsing windows when you’re not using them, and profiles that keep accounts, logins and cookies separate between work and personal use.

AirPods and audio upgrades

Apple has a handful of improvements to its audio products. AirPods will get Adaptive Audio, which blends Active Noise Cancellation and Transparency mode to drown out annoying background noise while letting through important sounds, like car horns or bike bells. It’ll also pass through voices in case someone starts a conversation in person.

And SharePlay now works with CarPlay, making it far easier for passengers to take control of the music while someone else drives: a prompt goes out to others in the car asking if they want to join in.

Apps in WatchOS 10 are getting a new look.

WatchOS 10

Yet again, widgets make an appearance with WatchOS 10, the next operating system upgrade for Apple Watches. Widgets are now accessible in a stack from your watch face: just turn the Digital Crown to scroll through them.

Apple has focused on cycling this year, improving cycling workouts with Functional Threshold Power (FTP), an important metric for cyclists. The watch also connects over Bluetooth to bike sensors, and cycling workouts can appear full screen on your iPhone, so you can mount it on your handlebars while you ride.

Hikers, rejoice! WatchOS 10 upgrades the Compass app with cellular connection waypoints, which show the direction and distance to the last spot where you had carrier reception. It also marks waypoints where you could last place an emergency SOS call, and adds an elevation view to the 3D compass. There’s also a neat topographic map view.

Apple is also expanding its Mindfulness app so you can log how you’re feeling with State of Mind, choosing among color-coded emotional states. You can also log this from your iPhone if you’re away from your Apple Watch.

Health focuses for 2023

On top of the WatchOS Mindfulness updates, Apple introduced a standardized survey for self-reporting mood and mental health, which acts as a sort of non-clinical way to indicate whether you may want to seek professional help.

Apple also has a new cross-device Vision Health focus in the Health app, and a new Apple Watch feature measures time spent in daylight, which can help reduce the risk of myopia in younger wearers. Screen Distance uses the TrueDepth camera on iPhones and iPads to warn people when they’re holding the device too close.



Google races to put Gemini at the center of Android before Apple’s AI reboot

Google is using its latest Android rollout to position Gemini as the AI layer across phones, Chrome, laptops and cars.

Google is using its latest Android rollout to make Gemini less of a chatbot and more of an operating layer across the phone, browser, car and laptop, just weeks before Apple is expected to show its own Gemini-powered Apple Intelligence reboot at WWDC.

Ahead of its Google I/O developer conference next week, the company previewed a number of Android updates, including AI-powered app automation, a smarter version of Chrome on Android, new tools for creators, a redesigned Android Auto experience, and a sweeping set of new security features.

Alphabet is counting on Gemini to help Google compete directly with OpenAI and Anthropic in the market for artificial intelligence models and services, while also serving as the AI backbone across its expansive portfolio of products, including Android. Meanwhile, Gemini is powering part of Apple’s new AI strategy, giving Google a role in the iPhone maker’s reset even as it races to prove its own version of personal AI on the phone is further along.

Sameer Samat, who oversees Google’s Android ecosystem, told CNBC that Google is rebuilding parts of Android around Gemini Intelligence to help users complete everyday tasks more easily.

“We’re transitioning from an operating system to an intelligence system,” he said.

As part of Tuesday’s announcements, Google said Gemini Intelligence will be able to move across apps, understand what’s on the screen and complete tasks that would normally require a user to jump between multiple services. That means Android is moving beyond the traditional assistant model, where users ask a question and get an answer, toward acting more like an agent.

For instance, Google says Gemini can pull relevant information from Gmail, build shopping carts and book reservations. Samat gave the example of asking Gemini to look at the guest list for a barbecue, build a menu, add ingredients to an Instacart list and return for approval before checkout.

A big concern surrounding agentic AI involves software taking action on a user’s behalf without permission. Samat said Gemini will come back to the user before completing a transaction, adding, “the human is always in the loop.”

Four months after announcing its Gemini deal with Google, Apple is under pressure to show a more capable version of Apple Intelligence, which has been a relative laggard on the market. Apple has long framed privacy, hardware integration and control of the user experience as its advantages.

Google’s Android push is designed to show it can bring AI deeper into the device experience while still giving users control over what Gemini can see, where it can act and when it needs confirmation.

The app automation features will roll out in waves, starting with the latest Samsung Galaxy and Google Pixel phones this summer, before expanding across more Android devices, including watches, cars, glasses and laptops later this year.

The company is also redesigning Android Auto around Gemini, turning the car into another major surface for its assistant. Android Auto is in more than 250 million cars, and Google says the new release includes its biggest maps update in a decade and Gemini-powered help with tasks like ordering dinner while driving.

Alphabet’s AI strategy has been embraced by Wall Street, which has pushed the company’s stock price up more than 140% in the past year, compared to Apple’s roughly 40% gain. Investors now want to see how Gemini can become more central to the products people use every day.
