Technologies

Razer Ups Its Gaming Gear for 2023 with 18-inch Blade, Accessories

At CES, bigger Blade laptops are joined by a new high-end Kiyo Pro Ultra webcam for streamers and a beefier Leviathan V2 Pro gaming soundbar.

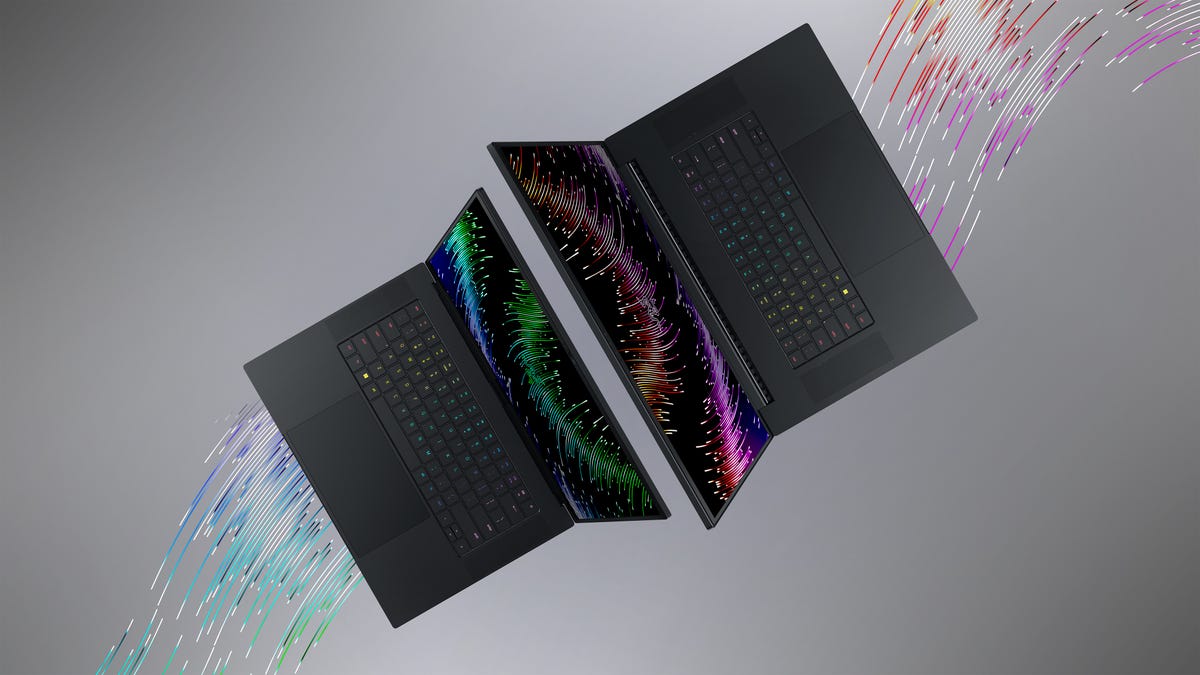

Razer’s new 16- and 18-inch Blade laptops join the pack of front-line CES gaming laptops. Like a lot of other manufacturers at CES, Razer has essentially replaced its 17-inch Blade with an 18-inch model and brought back the «desktop replacement» terminology after a hiatus. Both boast the latest technologies announced at the show, including top-of-the-line 13th-gen Intel Core i9 HX chips and Nvidia GeForce RTX 40 series mobile graphics.

The Blade 16 does offer a novel 1,000-nit screen, which Razer refers to as «dual-mode»: In Creator mode, it operates at 4K-plus resolution (the 16:10 aspect ratio means it’s just off 16:9 4K) at a refresh rate of 120Hz, while in Gamer mode it drops the resolution to 1080p-ish to run at 240Hz. It’s an interesting concept, but the execution will make or break its usefulness.

Razer has also invented a new spec it calls «Graphics Power Density,» for the amount of graphics power per cubic inch (which, unsurprisingly, it has the most of!) in order to convey thin-but-powerful-ness. I suspect it’s because the Blades tend to be heavy, but it’s kind of nebulous and I really, really hope it doesn’t catch on.

The Blade 18 also has the new components, but with a 1440p-plus 240Hz display instead. It gets one of the increasingly common 5MP webcams and incorporates a six-speaker array that uses Razer’s own THX spatial audio.

Both are slated to ship this quarter. The Blade 16 starts at $2,700, while the Blade 18 starts at $2,900.

Razer’s Leviathan V2 soundbar line has gotten an upscale sibling, the Leviathan V2 Pro. In addition to adding a gazillion lighting zones (OK, 30), the Pro beefs up its audio chops with head tracking (via IR cameras) and beamforming to more precisely target the sound toward your ears.

It also replaces the pairs of full-range drivers, tweeters and passive radiators with five full-range drivers, which extends the low-frequency response down to 40Hz from 45Hz while upping the output to 98dB from 96dB. The Leviathan V2 Pro also puts back the headphone jack Razer had removed when it leveled the Leviathan up a generation. All of that makes it a bit longer, though.

You can preorder the soundbar now for $400; it’s scheduled to ship at the end of January.

Razer already had a Kiyo Pro webcam, so its newest model, which jumps to the top of the line, went Ultra. The 4K Kiyo Pro Ultra has been upgraded with a 1/1.2-inch sensor, much larger than those in typical webcams, which can help a lot with exposure (especially in low light) and color. It doesn’t necessarily guarantee a better result, but larger sensors usually do improve image quality over smaller ones.

It’s got an «ultra-large f1.7 aperture lens,» which doesn’t mean a lot; a larger sensor requires a larger lens, and f1.7 is neither here nor there. The webcam does, however, seem to have focusing behavior and depth of field, which are sadly lacking in webcams. Razer challenges Elgato’s Facecam Pro by claiming rawer raw processing, with in-camera conversion of the 4K 30fps stream into lower resolutions and frame rates on the fly, streamed out directly.

The Kiyo Pro Ultra has a built-in shutter in addition to a protective (but easily lost) standalone cover. That was also on my wish list.

It’s available now, albeit at a pricey $400.

The company also unveiled the first of a line of add-ons for the Meta Quest 2, padding developed with partner ResMed, and announced the availability of the Edge and Edge 5G tablet-plus-controller handhelds for cloud gaming.

Sony Hits the Brakes on Electric Cars With Built-In PlayStation Features

Two EV models that Sony was developing with Honda, the Afeela 1 sedan and an Afeela SUV, are now discontinued.

Samsung’s New Budget Galaxy A37 and A57: Improved Designs and AI Features

My 3 Favorite Bose Headphones Deals on Amazon Aren’t Actually From Bose

Baseus’ Inspire XH1, XP1 and XC1 headphones with Sound by Bose are up to 23% off during Amazon’s Big Spring Sale. A bonus item makes the deal even harder to ignore.

I gave CNET Editors’ Choice awards to Baseus’ Bose-infused Inspire XH1 headphones and Inspire XP1 earbuds because they’re well designed and sound decent considering their prices. I also liked Baseus’ Inspire XC1 clip-on earbuds, which have dual drivers. They even earned a spot on CNET’s best clip-on earbuds list and are probably the best clip-on buds at their price right now.

Amazon’s Big Spring Sale just kicked off, and it’ll be around through March 31. Right now, all three models are discounted to between $100 and $123, bringing them near their all-time low prices.

That’s a deal I’d highlight on its own, but if you click through to any of those models’ Amazon product pages and look closely, you’ll see that each is eligible for «one free item» with purchase.

Read more: Best Wireless Earbuds of 2026

You must click the «how to claim» link first. Then click a button on the left side of the screen (above the stars for average ratings) to switch the view from «qualifying items» to «benefit items» and see the freebie. The items tend to be Baseus’ entry-level headphones or earbuds, but if you don’t like the free item option with a $120 purchase, you can try the options at lower prices.

You can read my full reviews of the Inspire XH1 headphones here and the Inspire XP1 earbuds here. And here’s my quick take on the Inspire XC1 earbuds:

Like Baseus’ noise-isolating Inspire XP1 earbuds, which I rated highly, the Inspire XC1 have Sound by Bose and a more premium design than earlier Baseus earbuds. While the XC1 don’t sound as good as the XP1, they’re decent open earbuds and are equipped with dual drivers (one is a Knowles balanced-armature driver that helps improve treble performance). While they don’t produce as much bass as noise-isolating earbuds like the Inspire XP1, their bass performance is better than I expected. The buds’ sound is pretty full, especially in quieter environments, though they do better with less bass-heavy material. I did notice a bit of distortion at higher volumes with certain tracks that feature harder-driving bass.

While I slightly prefer the design and fit of Bose’s Ultra Open Earbuds, as well as the design of their case, and think the Bose buds sound more natural and a tad better overall, the much more affordable Inspire XC1 fit comfortably and offer top-tier sound for clip-on open earbuds, as well as decent voice-calling performance with good background noise reduction. And they play louder than the Bose, too.

You can grab the Inspire XH1 for $123, the XP1 for $100 and the XC1 for $110, saving you up to 23%. Just remember to claim your free item with your purchase.

Read more: Best Headphones We’ve Tested

For other audio deals happening now, our CNET shopping experts have rounded up headphones, speakers and earbuds deals across a variety of brands and budgets.
