Technologies

How Team USA’s Olympic Skiers and Snowboarders Got an Edge From Google AI

Google engineers hit the slopes with Team USA’s skiers and snowboarders to build a custom AI training tool.

Team USA’s skiers and snowboarders are going home with some new hardware, including a few gold medals, from the 2026 Olympics. Along with the years of hard work that go into being an Olympic athlete, this year’s crew had an extra edge in their training thanks to a custom AI tool from Google Cloud.

US Ski and Snowboard, the governing body for the US national teams, oversees the training of the best skiers and snowboarders in the country to prepare them for big events, such as national championships and the Olympics. The organization partnered with Google Cloud to build an AI tool to offer more insight into how athletes are training and performing on the slopes.

Video review is a big part of winter sports training. A coach will literally stand on the sidelines recording an athlete’s run, then review the footage with them afterward to spot errors. But this process is somewhat dated, Anouk Patty, chief of sport at US Ski and Snowboard, told me. That’s where Google came in, bringing new AI-powered data insights to the training process.

Google Cloud engineers hit the slopes with the skiers and snowboarders to understand how to build an actually useful AI model for athletic training. They used video footage as the base of the currently unnamed AI tool. Gemini did a frame-by-frame analysis of the video, which was then fed into spatial intelligence models from Google DeepMind. Those models were able to take the 2D rendering of the athlete from the video and transform it into a 3D skeleton of an athlete as they contort and twist on runs.

Final touches from Gemini help the AI tool analyze the physics in the pixels, according to Ravi Rajamani, global head of Google’s AI Blackbelt team, which worked on the project. Coaches and athletes told the engineers the specific metrics they wanted to track (speed, rotation and trajectory), and the Google engineers coded the model to make it easy to monitor those metrics and compare them across different videos. There’s also a chat interface to ask Gemini questions about performance.
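To give a rough sense of how metrics like speed and rotation could be read off a 3D skeleton, here is a minimal Python sketch. It is not Google’s implementation, which has not been published. It assumes each video frame has already been turned into a list of (x, y, z) joint positions with z pointing up, and that joints 0 and 1 are the left and right shoulders. The function names and the joint layout are hypothetical, chosen only for illustration.

```python
import math

def centroid(joints):
    """Average position of all joints in one frame (a crude stand-in for body center)."""
    n = len(joints)
    return tuple(sum(j[i] for j in joints) / n for i in range(3))

def speeds(frames, fps):
    """Centroid speed, in distance units per second, between consecutive frames."""
    centers = [centroid(f) for f in frames]
    return [math.dist(a, b) * fps for a, b in zip(centers, centers[1:])]

def total_rotation_deg(frames, left=0, right=1):
    """Accumulated spin about the vertical (z) axis of the line joining two joints.

    The joint indices are assumptions; a real skeleton format defines its own.
    """
    total = 0.0
    prev = None
    for f in frames:
        dx = f[right][0] - f[left][0]
        dy = f[right][1] - f[left][1]
        heading = math.atan2(dy, dx)
        if prev is not None:
            # Wrap each step into [-pi, pi) so spins past 180 degrees keep accumulating.
            total += (heading - prev + math.pi) % (2 * math.pi) - math.pi
        prev = heading
    return math.degrees(total)
```

Run on two clips of the same trick, functions like these would let a coach compare the numbers side by side, which is the kind of comparison the article describes. The wrap-around step only works if the athlete turns less than half a rotation between frames, so very fast spins would need a higher frame rate.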

“From just a video, we are actually able to recreate it in 3D, so you don’t need expensive equipment, [like] sensors, that get in the way of an athlete performing,” Rajamani said.

Coaches are undeniably the experts on the mountain, but the AI can act as a kind of gut check. The data can help confirm or deny what coaches are seeing and give them extra insight into the specifics of each athlete’s performance. It can catch things that humans would struggle to see with the naked eye or in poor video quality, like where an athlete was looking while doing a trick and the exact speed and angle of a rotation.

“It’s data that they wouldn’t otherwise have,” Patty said. The 3D skeleton is especially helpful because it makes it easier to see movement obscured by the puffy jackets and pants athletes wear, she said.

For elite athletes in skiing and snowboarding, making small adjustments can mean the difference between a gold medal and no medal at all. Technological advances in training are meant to help athletes get every available tool for improvement.

“You’re always trying to find that 1% that can make the difference for an athlete to get them on the podium or to win,” Patty said. The tool could also democratize coaching. “It’s a way for every coach who’s out there in a club working with young athletes to have that level of understanding of what an athlete should do that the national team athletes have.”

For Google, this purpose-built AI tool is “the tip of the iceberg,” Rajamani said. There are a lot of potential future use cases, including expanding the base model to be customized to other sports. It also lays the foundation for work in sports medicine, physical therapy, robotics and ergonomics, all disciplines where understanding body positioning is important. But for now, there’s satisfaction in knowing the AI was built to actually help real athletes.

“This was not a case of tech engineers building something in the lab and handing it over,” Rajamani said. “This is a real-world problem that we are solving. For us, the motivation was building a tool that provides a true competitive advantage for our athletes.”

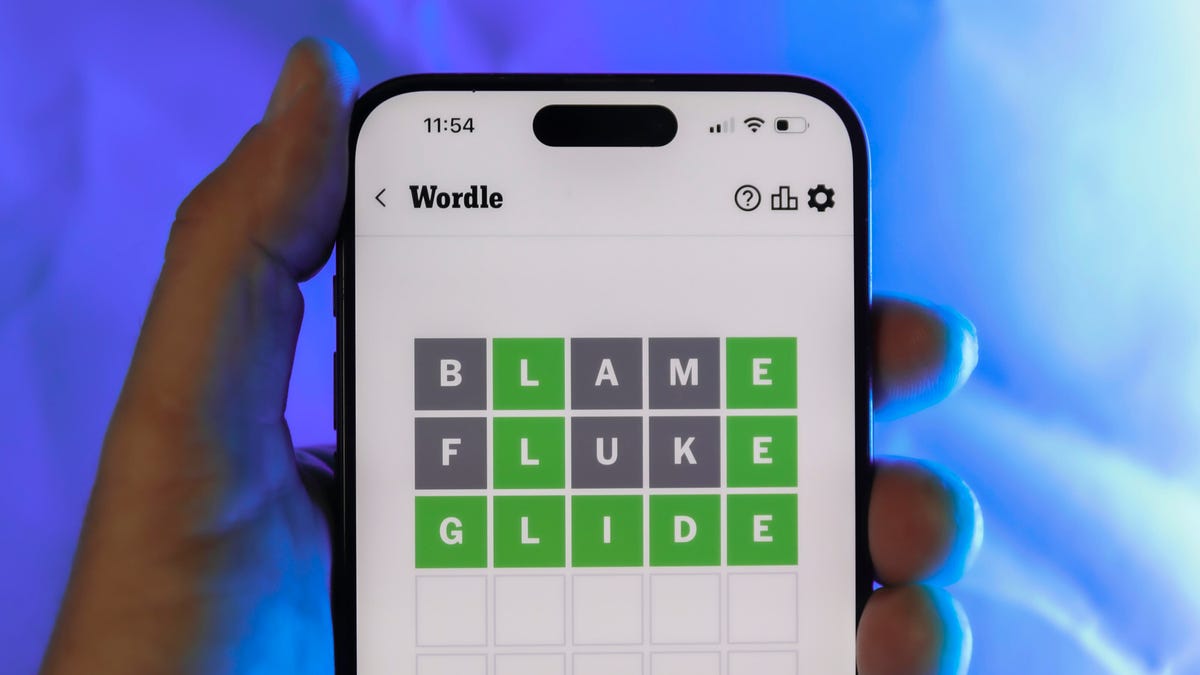

Today’s Wordle Hints, Answer and Help for April 7, #1753

Here are hints and the answer for today’s Wordle for April 7, No. 1,753.

Looking for the most recent Wordle answer? Click here for today’s Wordle hints, as well as our daily answers and hints for The New York Times Mini Crossword, Connections, Connections: Sports Edition and Strands puzzles.

Today’s Wordle puzzle wasn’t too tricky, for a change. If you need a new starter word, check out our list of which letters show up the most in English words. If you need hints and the answer, read on.

Today’s Wordle hints

Before we show you today’s Wordle answer, we’ll give you some hints. If you don’t want a spoiler, look away now.

Wordle hint No. 1: Repeats

Today’s Wordle answer has one repeated letter.

Wordle hint No. 2: Vowels

Today’s Wordle answer has one vowel, but it’s the repeated letter, so you’ll see it twice.

Wordle hint No. 3: First letter

Today’s Wordle answer begins with D.

Wordle hint No. 4: Last letter

Today’s Wordle answer ends with E.

Wordle hint No. 5: Meaning

Today’s Wordle answer can relate to something that is closely compacted.

TODAY’S WORDLE ANSWER

Today’s Wordle answer is DENSE.

Yesterday’s Wordle answer

Yesterday’s Wordle answer, April 6, No. 1752, was SWORN.

Recent Wordle answers

April 2, No. 1748: SOBER

April 3, No. 1749: SINGE

April 4, No. 1750: SANDY

April 5, No. 1751: ENVOY

What’s the best Wordle starting word?

Don’t be afraid to use our tip sheet ranking all the letters in the alphabet by frequency of use. In short, you want starter words that lean heavily on E, A and R, and don’t contain Z, J or Q.

Some solid starter words to try:

ADIEU

TRAIN

CLOSE

STARE

NOISE

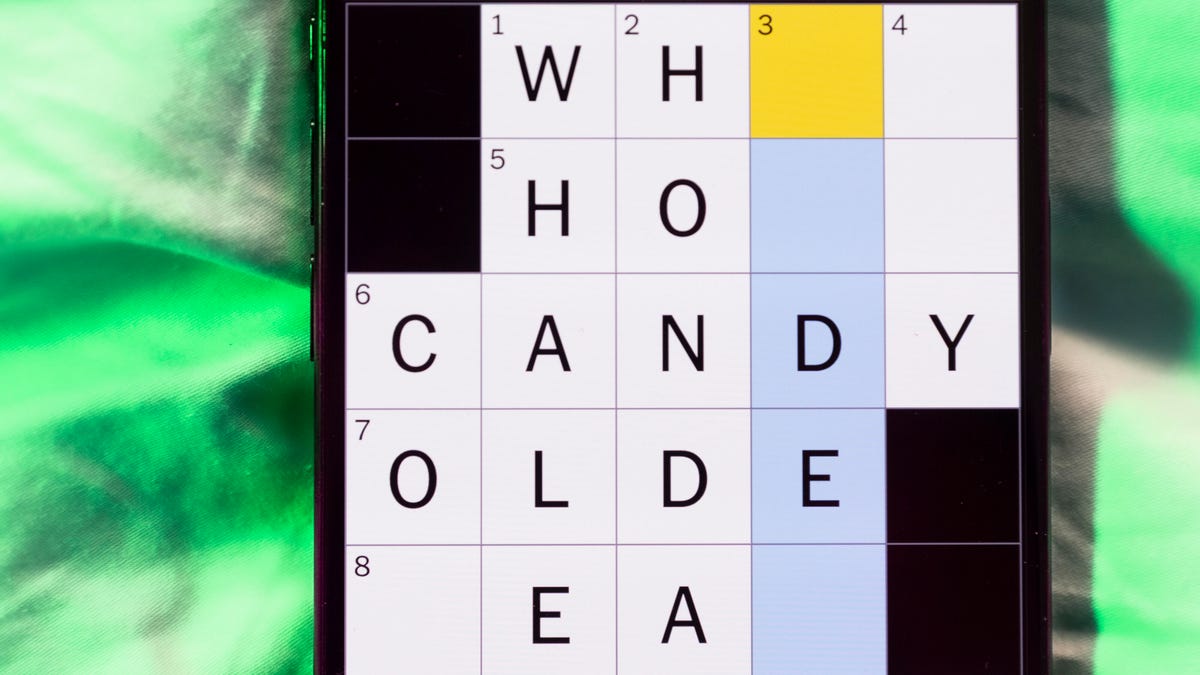

Today’s NYT Mini Crossword Answers for Tuesday, April 7

Here are the answers for The New York Times Mini Crossword for April 7.

Looking for the most recent Mini Crossword answer? Click here for today’s Mini Crossword hints, as well as our daily answers and hints for The New York Times Wordle, Strands, Connections and Connections: Sports Edition puzzles.

Need some help with today’s Mini Crossword? Read on for all the answers. And if you could use some hints and guidance for daily solving, check out our Mini Crossword tips.

If you’re looking for today’s Wordle, Connections, Connections: Sports Edition and Strands answers, you can visit CNET’s NYT puzzle hints page.

Let’s get to those Mini Crossword clues and answers.

Mini across clues and answers

1A clue: Informative commercial, for short

Answer: PSA

4A clue: Something you trace to draw a Thanksgiving turkey

Answer: HAND

5A clue: ___ Johnson, former Prime Minister of the U.K.

Answer: BORIS

6A clue: Opposite of include

Answer: OMIT

7A clue: Crosses (out)

Answer: XES

Mini down clues and answers

1D clue: City with the Notre-Dame Cathedral

Answer: PARIS

2D clue: Bad mood

Answer: SNIT

3D clue: About eight minutes of the average half-hour sitcom

Answer: ADS

4D clue: Remote worker’s office, perhaps

Answer: HOME

5D clue: Word that can follow each group of circled letters (and hints at its shape)

Answer: BOX
