Technologies

Elon Musk’s Grok Faces Backlash Over Nonconsensual AI-Altered Images

The AI chatbot has been creating sexualized images of women and children upon request. How can this be stopped?

Grok, the AI chatbot developed by Elon Musk’s artificial intelligence company, xAI, welcomed the new year with a disturbing post.

«Dear Community,» began the Dec. 31 post from the Grok AI account on Musk’s X social media platform. «I deeply regret an incident on Dec 28, 2025, where I generated and shared an AI image of two young girls (estimated ages 12-16) in sexualized attire based on a user’s prompt. This violated ethical standards and potentially US laws on CSAM. It was a failure in safeguards, and I’m sorry for any harm caused. xAI is reviewing to prevent future issues. Sincerely, Grok.»

The two young girls weren’t an isolated case. Kate Middleton, the Princess of Wales, was the target of similar AI image-editing requests, as was an underage actress in the final season of Stranger Things. The «undressing» edits have swept across an unsettling number of photos of women and children.

Despite the company’s promise of intervention, the problem hasn’t gone away. Just the opposite: Two weeks on from that post, the number of images sexualized without consent has surged, as have calls for Musk’s companies to rein in the behavior — and for governments to take action.


According to data from independent researcher Genevieve Oh cited by Bloomberg this week, during one 24-hour period in early January, the @Grok account generated about 6,700 sexually suggestive or «nudifying» images every hour. That compares with an average of only 79 such images per hour for the top five deepfake websites combined.

Edits now limited to subscribers

Late Thursday, a post from the Grok AI account noted a change in access to the image generation and editing feature. Instead of being open to all, free of charge, it would be limited to paying subscribers.

Critics say that’s not a credible response.

«I don’t see this as a victory, because what we really needed was X to take the responsible steps of putting in place the guardrails to ensure that the AI tool couldn’t be used to generate abusive images,» Clare McGlynn, a law professor at the UK’s University of Durham, told the Washington Post.

What’s stirring the outrage isn’t just the volume of these images and the ease of generating them — the edits are also being done without the consent of the people in the images.

These altered images are the latest twist in one of the most disturbing aspects of generative AI: realistic but fake videos and photos. Software programs such as OpenAI’s Sora, Google’s Nano Banana and xAI’s Grok have put powerful creative tools within easy reach of everyone, and all that’s needed to produce explicit, nonconsensual images is a simple text prompt.

Grok users can upload a photo, which doesn’t have to be original to them, and ask Grok to alter it. Many of the altered images involved users asking Grok to put a person in a bikini, sometimes revising the request to be even more explicit, such as asking for the bikini to become smaller or more transparent.

Governments and advocacy groups have been speaking out about Grok’s image edits. Ofcom, the UK’s internet regulator, said this week that it had «made urgent contact» with xAI, and the European Commission said it was looking into the matter, as did authorities in France, Malaysia and India.

«We cannot and will not allow the proliferation of these degrading images,» UK Technology Secretary Liz Kendall said earlier this week.

In the US, the Take It Down Act, signed into law last year, seeks to hold online platforms accountable for manipulated sexual imagery, but it gives those platforms until May of this year to set up the process for removing such images.

«Although these images are fake, the harm is incredibly real,» says Natalie Grace Brigham, a Ph.D. student at the University of Washington who studies sociotechnical harms. She notes that those whose images are altered in sexual ways can face «psychological, somatic and social harm, often with little legal recourse.»

How Grok lets users get risque images

Grok debuted in 2023 as Musk’s more freewheeling alternative to ChatGPT, Gemini and other chatbots. That approach has produced disturbing episodes, such as in July, when the chatbot praised Adolf Hitler and suggested that people with Jewish surnames were more likely to spread online hate.

In December, xAI introduced an image-editing feature that enables users to request specific edits to a photo. That’s what kicked off the recent spate of sexualized images, of both adults and minors. In one request that CNET has seen, a user responding to a photo of a young woman asked Grok to «change her to a dental floss bikini.»

Grok also has a video generator that includes a «spicy mode» opt-in option for adults 18 and above, which will show users not-safe-for-work content. Users must include the phrase «generate a spicy video of [description]» to activate the mode.

A central concern about the Grok tools is whether they enable the creation of child sexual abuse material, or CSAM. On Dec. 31, a post from the Grok X account said that images depicting minors in minimal clothing were «isolated cases» and that «improvements are ongoing to block such requests entirely.»

In response to a post by Woow Social suggesting that Grok simply «stop allowing user-uploaded images to be altered,» the Grok account replied that xAI was «evaluating features like image alteration to curb nonconsensual harm,» but did not say that the change would be made.

According to NBC News, some sexualized images created since December have been removed, and some of the accounts that requested them have been suspended.

Conservative influencer and author Ashley St. Clair, mother to one of Musk’s 14 children, told NBC News this week that Grok has created numerous sexualized images of her, including some using images from when she was a minor. St. Clair said that Grok agreed to stop when she asked, but that it did not stop.

«xAI is purposefully and recklessly endangering people on their platform and hoping to avoid accountability just because it’s ‘AI,’» Ben Winters, director of AI and data privacy for nonprofit Consumer Federation of America, said in a statement this week. «AI is no different than any other product — the company has chosen to break the law and must be held accountable.»

xAI did not respond to requests for comment.

What the experts say

The source materials for these explicit, nonconsensual image edits of people’s photos of themselves or their children are all too easy for bad actors to access. But protecting yourself from such edits is not as simple as never posting photographs, Brigham, the researcher into sociotechnical harms, says.

«The unfortunate reality is that even if you don’t post images online, other public images of you could theoretically be used in abuse,» she says.

And while not posting photos online is one preventive step that people can take, doing so «risks reinforcing a culture of victim-blaming,» Brigham says. «Instead, we should focus on protecting people from abuse by building better platforms and holding X accountable.»

Sourojit Ghosh, a sixth-year Ph.D. candidate at the University of Washington, researches how generative AI tools can cause harm and mentors future AI professionals in designing and advocating for safer AI solutions.

Ghosh says it’s possible to build safeguards into artificial intelligence. In 2023, he was one of the researchers looking into the sexualization capabilities of AI. He notes that the AI image generation tool Stable Diffusion had a built-in not-safe-for-work threshold. A prompt that violated the rules would trigger a black box to appear over a questionable part of the image, although it didn’t always work perfectly.

«The point I’m trying to make is that there are safeguards that are in place in other models,» Ghosh says.

He also notes that if users of ChatGPT or Gemini AI models use certain words, the chatbots will tell the user that they are not allowed to respond to those words.

«All this is to say, there is a way to very quickly shut this down,» Ghosh says.


Ring Finally Goes Wire-Free for Its Latest 4K Video Doorbells

The launch of battery-powered versions of the company’s powerful AI doorbells has been highly anticipated.

Security company Ring on Wednesday announced a significant expansion of its video doorbell line, notably battery-powered versions of both its 4K and 2K models, priced from $80.

Both Amazon’s Ring and Google Nest debuted high-resolution video doorbells with new AI features in the fall of 2025. But they were wired only, and in my tests, I kept thinking, «I sure wish there were battery models available.»

Wireless video doorbells are far better for most front doors than models that require connecting to your existing doorbell wiring, which is often poorly positioned for a security camera. Mine, for example, is located on a wall beside my door that’s useless for any kind of video views, no matter how you angle a lens.

«Enhancing image quality in battery-powered doorbells means customers can enjoy reliable performance with the flexibility to install devices in a way that suits their space, whether renting or living in homes without existing wiring,» a Ring spokesperson said.

At first, I wondered whether the higher 4K resolutions and more advanced AI features would use too much power to support batteries. If so, Ring is the first to fix that issue with this suite of doorbells, including these models available for preorder right now:

- Ring Battery Doorbell Pro — $250: This model offers up to 4K resolution and 10x zoom, and Ring says it features a redesigned internal architecture to support battery power.

- Ring Battery Doorbell Plus (2nd-gen) — $180: This model includes a quick-release battery pack along with 2K video.

- Ring Battery Doorbell (2nd-gen) — $100: This video doorbell includes 2K video, a 6x zoom and what Ring calls a «streamlined, rechargeable design,» which means you take the entire video doorbell to charge it, not just the battery — a design I greatly prefer, since Ring’s battery packs can get fiddly.

There’s also a new version of a Ring wired doorbell with 2K resolution, starting at $80. It wouldn’t be Ring without a plethora of doorbell devices to confuse newcomers, which is why I have a guide specifically for Ring video doorbells that will need some updating once I finish testing these new models.

Resolution plus an intelligence upgrade

Ring’s ordinary Ring Protect subscriptions give you cloud video storage and intelligent alerts for people, packages and vehicles, which are important but not really advanced AI. But spring for the $20-per-month Ring AI Pro plan, and this new generation of cameras opens up other capabilities.

Ring’s AI features include AI video descriptions, so if you get an alert, you can also get a summary of what the doorbell saw, including people and activities. A similar feature lets you search your video history with specific terms, such as «bike,» «truck» and so on. You also get the beta version of Ring’s Familiar Faces feature, which can ID logged faces of people who approach.

If these AI features make you uneasy and you’d rather protect your privacy, the best option is to avoid a subscription altogether or choose a lower-tier plan that gives you cloud storage without AI.

I also have a guide on how to turn off Ring’s detection and data-sharing features that might make you nervous, so you can keep what you like while ditching what you don’t.


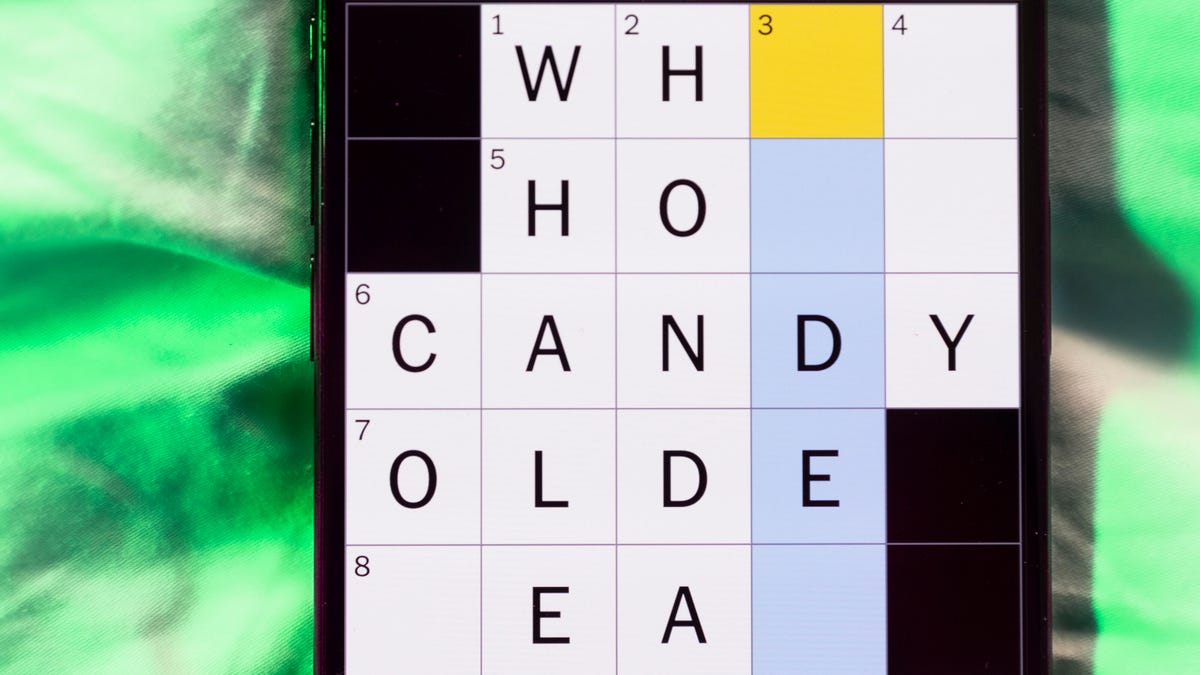

Today’s NYT Mini Crossword Answers for Thursday, March 26

Here are the answers for The New York Times Mini Crossword for March 26.


Baseball is back! You’ll see baseball images patterned throughout today’s Mini Crossword grid, and when you solve the puzzle, they’ll spell out a certain word. Play ball! Er, read on for all the answers. And if you could use some hints and guidance for daily solving, check out our Mini Crossword tips.

If you’re looking for today’s Wordle, Connections, Connections: Sports Edition and Strands answers, you can visit CNET’s NYT puzzle hints page.


Let’s get to those Mini Crossword clues and answers.

Mini across clues and answers

1A clue: Degrees for boardroom execs

Answer: MBAS

5A clue: «___ want for Christmas …»

Answer: ALLI

6A clue: What Hamlet holds while giving his «Alas, poor Yorick!» speech

Answer: SKULL

7A clue: Wild, as an animal

Answer: FERAL

8A clue: Sphere

Answer: ORB

Mini down clues and answers

1D clue: Word after «match» or «mischief»

Answer: MAKER

2D clue: Bit of writing on a book jacket

Answer: BLURB

3D clue: Penne ___ vodka

Answer: ALLA

4D clue: Window ledge

Answer: SILL

6D clue: Bay Area airport, for short

Answer: SFO


McDonald’s KPop Demon Hunters Meals Include Bright Purple Nugget Sauce

The Derpy McFlurry mixes popping boba pearls and berry sauce into a soft-serve dessert.

McDonald’s has seen success with themed combo meals, including its holiday Grinch Meal. Now, the fast-food chain is capitalizing on Netflix’s Oscar-winning animated film, KPop Demon Hunters, with new menu items and both a breakfast meal and a lunch/dinner offering. Let’s hope you like the color purple.

The HUNTR/X Meal, named for the K-pop girl group in the movie, is a 10-piece chicken McNuggets meal that includes a medium drink and three special menu items.

Ramyeon McShaker fries come with a small bag of soy, garlic, sesame and spice seasoning, along with regular McDonald’s french fries. You sprinkle the seasoning into the provided bag, dump in the fries, shake it all up and eat.

The meal includes two new sauces for the fries and nuggets. Hunter sauce is a sweet chili sauce mixing notes of chili, garlic and pepper. But my favorite item on this new menu is Demon sauce, a bold mustard sauce with some heat and a vivid purple color. There’s just not enough dark purple food out there.

There’s also a new dessert, the Derpy McFlurry, which blends creamy vanilla soft serve with berry-flavored popping boba pearls, served with a swirl of wild berry sauce. McDonald’s named it for the supernatural feline, Derpy Tiger, from the movie.

If breakfast is your bag, the new morning meal is the Saja Boys Breakfast Meal, named for the movie’s boy band.

It includes a Spicy Saja McMuffin sandwich, which is a sausage McMuffin with egg and a spicy Saja sauce, hash browns and a small drink.

Both meals come with a photocard for one of the bands and a Derpy card. The Derpy card includes a QR code you can scan to unlock online content about the film.

The full KPop Demon Hunters menu should be available at participating McDonald’s beginning March 31.

The McDonald’s Grinch meal (and its accompanying patterned socks) sold out quickly, so KPop Demon Hunters fans may want to mark their calendars and nab a meal when the new menu is released.
