Technologies

Venmo and PayPal Add Payment-Transfer Support, but Do This First

Starting in November, PayPal and Venmo users will be able to search for each other and send money directly from their accounts — a change 12 years in the making.

Many Venmo users have received a welcome email in recent days, confirming that direct payments will at last be supported between Venmo and PayPal. It’s an integration that users of the payment apps have been waiting for since 2014, when PayPal acquired Venmo.

This direct payment support arrives in November and will work with basic searches on the mobile app and other portals. PayPal users, for example, will be able to search for Venmo users by phone number, then send them money. The ability to search for users by email will be added at a later, unconfirmed date. So far, the companies have not said whether any additional fees will apply to these types of payments.

Representatives for PayPal and Venmo did not immediately respond to requests for further comment.

The change is one of a number of updates PayPal is making in 2025. Others include the PayPal World platform, peer-to-peer payment links and an AI partnership with Google. Venmo is just one of the partners that PayPal plans on integrating with more fully around the world.

Keep your privacy and visibility options in mind

Longtime Venmo users will remember how annoying it was when Venmo automatically made payment details public to everyone they connected with. Venmo has improved this in recent years, but it can still be a source of frustration.

We’ll have to wait until November to investigate every detail, but there is a critical visibility setting that all users should know about. It looks like PayPal users can find any Venmo user if they have the correct phone number, which could make specific scams easier or lead to spam.

You can adjust this option by heading into your Venmo app, choosing Settings (the gear icon), then Privacy, then navigating to your Find Me options, where you can restrict who can find you on PayPal. Just don’t do it quite yet: Expect a Venmo update in November to make this option available.

Technologies

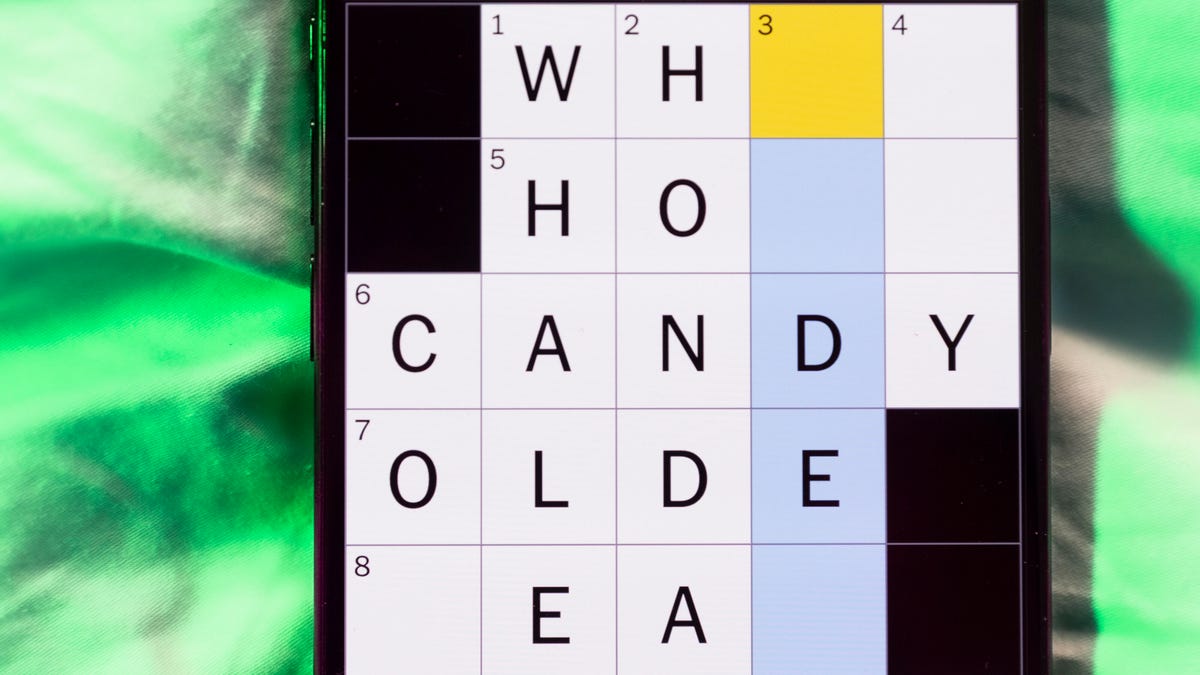

Today’s NYT Mini Crossword Answers for Tuesday, April 7

Here are the answers for The New York Times Mini Crossword for April 7.

Looking for the most recent Mini Crossword answer? See today’s Mini Crossword hints, as well as our daily answers and hints for The New York Times Wordle, Strands, Connections and Connections: Sports Edition puzzles.

Need some help with today’s Mini Crossword? Read on for all the answers. And if you could use some hints and guidance for daily solving, check out our Mini Crossword tips.

If you’re looking for today’s Wordle, Connections, Connections: Sports Edition and Strands answers, you can visit CNET’s NYT puzzle hints page.

Read more: Tips and Tricks for Solving The New York Times Mini Crossword

Let’s get to those Mini Crossword clues and answers.

Mini across clues and answers

1A clue: Informative commercial, for short

Answer: PSA

4A clue: Something you trace to draw a Thanksgiving turkey

Answer: HAND

5A clue: ___ Johnson, former Prime Minister of the U.K.

Answer: BORIS

6A clue: Opposite of include

Answer: OMIT

7A clue: Crosses (out)

Answer: XES

Mini down clues and answers

1D clue: City with the Notre-Dame Cathedral

Answer: PARIS

2D clue: Bad mood

Answer: SNIT

3D clue: About eight minutes of the average half-hour sitcom

Answer: ADS

4D clue: Remote worker’s office, perhaps

Answer: HOME

5D clue: Word that can follow each group of circled letters (and hints at its shape)

Answer: BOX

In Honor of the Artemis II Mission, Explore the Moon in Fortnite Now

You might not be able to see the moon the way the Artemis II team is, but there’s an educational Fortnite simulation that will get you onto the celestial body’s surface.

You may not be able to explore the vast majesty of space the way the four-person Artemis II crew is, but you can still get an up-close-and-personal view of the moon, in Fortnite at least.

While you can’t slingshot around the moon yourself, you can see a little of what the Artemis II crew is seeing by spending time on the Lunar Horizons Fortnite map right now. The map is a creative collaboration between Fortnite’s creator, Epic Games, and the European Space Agency (ESA). Lunar Horizons was released in 2024 after extensive testing and play by ESA trainee astronauts.

If you’re looking to learn more about the moon, the Lunar Horizons map is an educational simulation of the surface of the moon’s South Pole.

It blends game mechanics with learning: players build their own sterile lunar habitat bases, interact with ESA astronauts and roll around with robotic rovers as they discover plaques with information about the moon and international space agencies. There are still dangers to navigate, too. A solar storm may strike when you least expect it.

If you’re interested in exploring the moon, we’ve got all the information you need to join in on the Fortnite fun below. And if you’re looking for a more serious livestream during this momentous human achievement, tune in to NASA’s feed.

How to join the Moon Fortnite island while you follow the Artemis II mission

The Lunar Horizons Fortnite map is a great educational simulation that shares details about ESA’s work and catalogs information about humanity’s lunar research.

These three simple steps will get you up and running (or more accurately, taking slow leaps and bounds) on the surface of the Lunar Horizons Fortnite map:

Download Fortnite

If you haven’t played Fortnite before but want to check out this map, you’ll have to download the game. If you’re on PC, you can download Fortnite for free from the Epic Games Store. Console players can download Fortnite from the PlayStation Store, Microsoft Store or Nintendo eShop.

Navigate the in-game menus until you reach the Search button

Once you’re in the game, scroll down past the different official Fortnite game modes and the Discover tab until you find the Search button.

Input the Lunar Horizons island code

In the search bar, you can enter a map’s name or its unique island code to find it in the map directory. Search for Lunar Horizons or enter the code 3207-0960-6428 to start exploring the map.

Correction, 3:35 p.m. PT: This story initially was in error about the features available in the Lunar Horizons map. There is no Artemis II-specific mission in Fortnite. Rather, the Lunar Horizons map is an educational simulation of part of the moon’s surface.
