Technologies

Gemini Live Now Has Eyes. We Put the New Feature to the Test

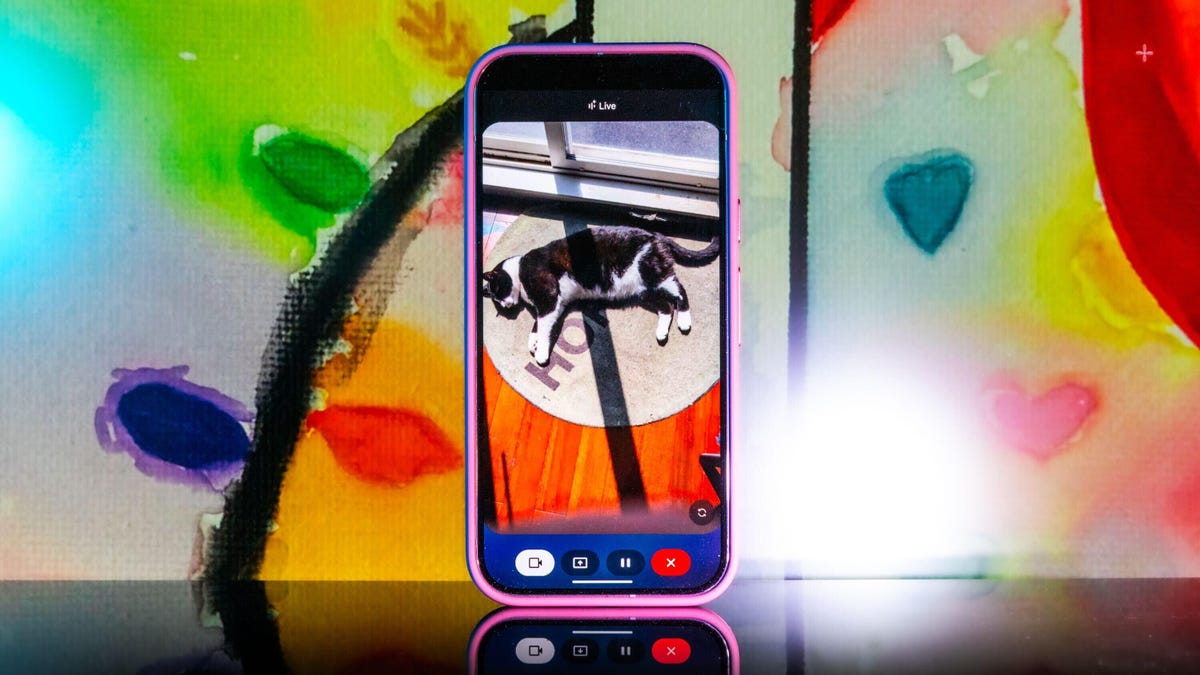

The new feature gives Gemini Live eyes to “see.” I put it through a series of tests. Here are the results.

There I was, walking around my apartment, taking a video with my phone and talking to Google’s Gemini Live. I was giving the AI a tour – and a quiz, asking it to name specific objects it saw. After it identified the flowers in a vase in my living room (chamomile and dianthus, by the way), I tried a curveball: I asked it to tell me where I’d left a pair of scissors. “I just spotted your scissors on the table, right next to the green package of pistachios. Do you see them?”

It was right, and I was wowed.

Gemini Live will recognize a whole lot more than household odds and ends. Google says it’ll help you navigate a crowded train station or figure out the filling of a pastry. It can give you deeper information about artwork, like where an object originated and whether it was a limited edition.

It’s more than just a souped-up Google Lens. You talk with it and it talks to you. I didn’t need to speak to Gemini in any particular way – it was as casual as any conversation. Way better than talking with the old Google Assistant that the company is quickly phasing out.

Google and Samsung are just now starting to formally roll out the feature to all Pixel 9 (including the new Pixel 9a) and Galaxy S25 phones. It’s available for free on those devices, and other Pixel phones can access it via a Google AI Premium subscription. Google also released a new YouTube video for the April 2025 Pixel Drop showcasing the feature, and there’s now a dedicated page on the Google Store for it.

All you have to do to get started is go live with Gemini, enable the camera and start talking.

Gemini Live follows on from Google’s Project Astra, first revealed last year as possibly the company’s biggest “we’re in the future” feature: an experimental next step for generative AI that goes beyond simply typing or speaking prompts into a chatbot like ChatGPT, Claude or Gemini. It comes as AI companies continue to dramatically increase the skills of AI tools, from video generation to raw processing power. Somewhat similar to Gemini Live is Apple’s Visual Intelligence, which the iPhone maker released in beta late last year.

My big takeaway is that a feature like Gemini Live has the potential to change how we interact with the world around us, melding our digital and physical worlds just by holding a camera in front of almost anything.

I put Gemini Live to a real test

Somehow Gemini Live showed up on my Pixel 9 Pro XL a few days early, so I’ve already had a chance to play around with it.

The first time I tried it, Gemini was shockingly accurate when I placed a very specific gaming collectible of a stuffed rabbit in my camera’s view. The second time, I showed it to a friend when we were in an art gallery. It not only identified the tortoise on a cross (don’t ask me), but it also immediately identified and translated the kanji right next to the tortoise, giving both of us chills and leaving us more than a little creeped out. In a good way, I think.

In the tour of my apartment, I was following the lead of the demo that Google did last summer when it first showed off these Live video AI capabilities. I tried random objects in my apartment (fruit, books, Chapstick), many of which it easily identified.

Then I got thinking about how I could stress-test the feature. I tried to screen-record it in action, but it consistently fell apart at that task. And what if I went off the beaten path with it? I’m a huge fan of the horror genre — movies, TV shows, video games — and have countless collectibles, trinkets and what have you. How well would it do with more obscure stuff — like my horror-themed collectibles?

First, let me say that Gemini can be both absolutely incredible and ridiculously frustrating in the same round of questions. I had roughly 11 objects that I was asking Gemini to identify, and it would sometimes get worse the longer the live session ran, so I had to limit sessions to only one or two objects. My guess is that Gemini attempted to use contextual information from previously identified objects to guess new objects put in front of it, which sort of makes sense, but ultimately neither I nor it benefited from this.

Sometimes, Gemini was just on point, easily landing the correct answers with no fuss or confusion, but this tended to happen with more recent or popular objects. For example, I was pretty surprised when it immediately guessed one of my test objects was not only from Destiny 2, but was a limited edition from a seasonal event from last year.

At other times, Gemini would be way off the mark, and I would need to give it more hints to get into the ballpark of the right answer. And sometimes, it seemed as though Gemini was taking context from my previous live sessions to come up with answers, identifying multiple objects as coming from Silent Hill when they were not. I have a display case dedicated to the game series, so I could see why it would want to dip into that territory quickly.

Gemini can get full-on bugged out at times. On more than one occasion, Gemini misidentified one of the items as a made-up character from the unreleased Silent Hill: f game, clearly merging pieces of different titles into something that never was. The other consistent bug I experienced was when Gemini would produce an incorrect answer, and I would correct it and hint closer at the answer (or straight up give it the answer), only to have it repeat the incorrect answer as if it were a new guess. When that happened, I would close the session and start a new one, which wasn’t always helpful.

One trick I found was that some conversations did better than others. If I scrolled through my Gemini conversation list, tapped an old chat that had gotten a specific item correct, and then went live again from that chat, it would be able to identify the items without issue. While that’s not necessarily surprising, it was interesting to see that results varied from one conversation to the next, even when I used the same language.

Google didn’t respond to my requests for more information on how Gemini Live works.

I wanted Gemini to successfully answer my sometimes highly specific questions, so I provided plenty of hints to get there. The nudges were often helpful, but not always. Below is a series of objects I tried to get Gemini to identify and provide information about.

Verum Messenger has unveiled a new project — a mini-series created using Verum AI. The story consists of 7 episodes and will be released on the messenger’s social media channels.

The plot revolves around a global corporation seeking to take control of digital communications and a group of heroes who use Verum Messenger as a tool of resistance. Beyond the story itself, the series highlights the app’s key features, technologies, and advantages.

Combining entertainment with a showcase of the Verum ecosystem, the project presents a dynamic digital series designed for the modern era.

The first episode premieres today, with the remaining episodes to be released over time.

Stay tuned for more.

Technologies

Verum Finance: Earn While You Communicate — The Super App That Pays You

Verum has officially launched Verum Finance, an innovative financial application that transforms a private messenger into a true financial super app. News of the launch was also featured on the respected platform Dealroom.co.

Verum Finance can now be used both within Verum Messenger and as a standalone application for iPhone and iPad. When users sign in to Verum Finance with their Verum Messenger account, all balances, settings, and account data are automatically synchronized for maximum convenience.

Users can now do more than communicate securely and protect their data — they can also generate passive income directly within the ecosystem.

What Verum Finance Offers

• Top up your balance with a bank card, Apple Pay, or USDT

• Send money instantly anywhere in the world

• Issue and manage debit cards (virtual and physical)

• Full Apple Pay support

• Exchange assets and withdraw funds quickly

One of the most distinctive features is the built-in cryptocurrency mining system inside Verum Messenger.

The application utilizes your device’s resources and allows you to earn cryptocurrency in the background — passively, while chatting, traveling, or simply using the messenger.

Maximum Privacy + Real Freedom

• Registration without a phone number, email address, or passport

• End-to-end encryption and full control over your data

• Lifetime free VPN

• eSIM connectivity in more than 150 countries

• Reliable offline communication mode

• Support for 12+ languages for users worldwide

Everything is available in one place: secure communication, financial tools, earning opportunities, and privacy protection.

Users can access the full experience directly within Verum Messenger or switch to the dedicated Verum Finance app for iOS. All data is synchronized automatically between the two applications.

Why Download Verum Today

While many messaging platforms collect user data and expose users to restrictions, Verum offers greater independence and the opportunity to earn.

With a one-time purchase of the feature package, users receive lifetime access to privacy tools, VPN, eSIM services, cryptocurrency mining, and financial features.

This is more than just a messenger.

It is your personal tool for financial and digital freedom.

Download Verum Finance and Verum Messenger today — start communicating securely and begin earning tomorrow.

Download Links:

→ App Store (iPhone / iPad): Verum Finance

→ App Store (Verum Messenger): Verum Messenger

Technologies

Verum Finance: A Super App for Private Finance Integrated Into a Messenger

Verum Finance has announced the launch of a new financial application that allows users to manage their money directly within the secure Verum Messenger ecosystem.

The project has already attracted attention from major media outlets. A dedicated feature was published by Forbes Türkiye, while one of the world’s largest cryptocurrency exchanges, MEXC, covered the launch. Yahoo Finance had previously reported on the evolution of Verum Messenger into a comprehensive financial ecosystem.

What Verum Finance Offers

Verum Finance transforms a messenger into a complete financial platform. Users can:

• Manage their balance and top up using bank cards or USDT

• Send money instantly to other Verum users

• Issue and use debit cards, including Apple Pay support

• Exchange assets and withdraw funds

• Access all these services without installing separate banking applications

A strong emphasis is placed on privacy. The platform offers registration without a phone number or email address, end-to-end encryption, and full user control over personal data.

Recognition from Forbes Türkiye

In a dedicated article, Forbes Türkiye highlighted Verum Finance as a notable example of modern privacy-driven fintech. The publication emphasized the growing trend of financial services moving from standalone banking applications into unified messaging ecosystems — a model that has proven successful in Asia through platforms such as WeChat and Alipay and is now expanding globally.

Support from the Crypto Community

Alongside the Forbes Türkiye coverage, news about the launch of Verum Finance was also featured by MEXC, one of the world’s leading cryptocurrency exchanges. This reflects growing interest in the project from both traditional business media and the cryptocurrency community.

A Strategic Vision

“We are building more than a payments application and more than a messenger. Verum is a unified secure ecosystem where communication, finance, and privacy tools work together,” the company stated.

Verum Finance is now available for iPhone and iPad users. The application complements Verum Messenger, which offers anonymous chats, voice and video calls, VPN services, eSIM connectivity, and other tools designed to enhance digital freedom.

Verum Finance: https://finance.verum.im

Verum Messenger: https://verum.im
