Technologies

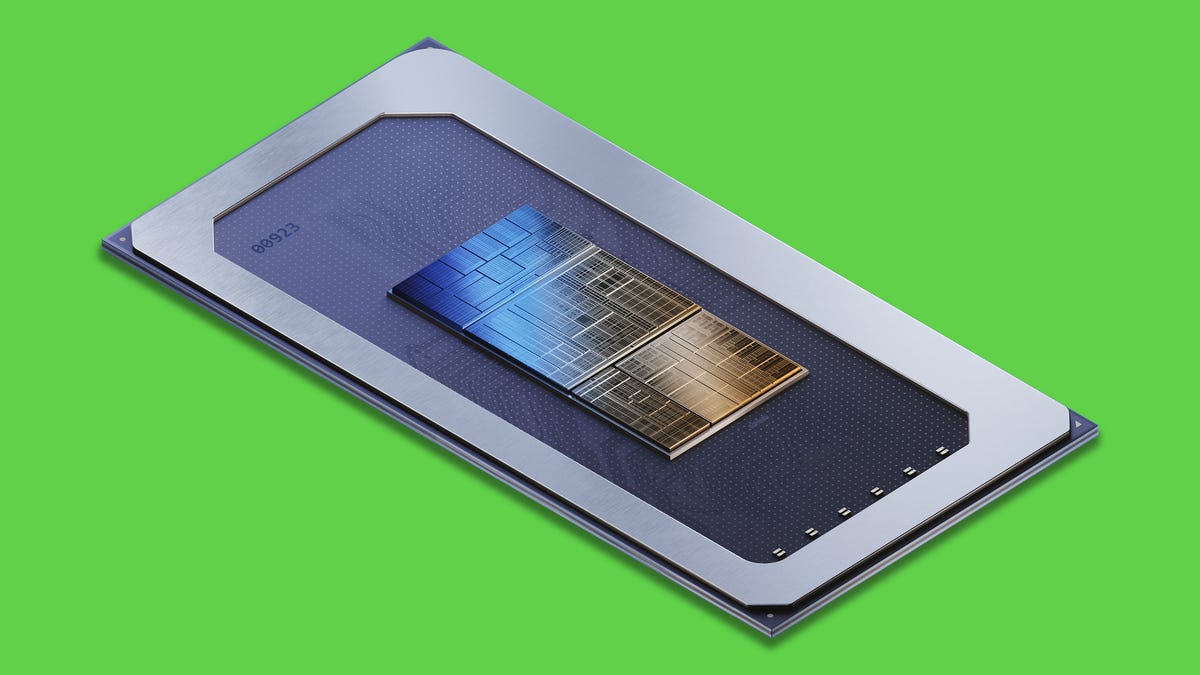

AI Speed Boost Coming With Intel’s Meteor Lake Chip for PCs This Year

The new processor is key to the chipmaker’s recovery plans and taking on strong competition from AMD and Apple.

Today’s most impressive AI tools — OpenAI’s ChatGPT, Microsoft’s Bing, Google’s Bard and Adobe’s Photoshop, for example — run in data centers stuffed with powerful, expensive servers. But Intel on Monday revealed details of its Meteor Lake PC processor that could help your laptop play more of a part in the artificial intelligence revolution.

Meteor Lake, scheduled to ship in computers later this year, includes circuitry that accelerates some AI tasks that otherwise might sap your battery. For example, it can improve AI that recognizes you to blur or replace backgrounds better during videoconferences, said John Rayfield, leader of Intel’s client AI work.

AI models use methods inspired by human brains to recognize patterns in complex, real-world data. By running AI on a laptop or phone processor instead of in the cloud, you can get benefits like better privacy and security as well as a snappier response since you don’t have network delays.

What’s unclear is how much AI work will really move from the cloud to PCs. Some software, like Adobe Photoshop and Lightroom, uses AI extensively for finding people, skies and other subject matter in photos and for many other image editing tasks. Apps can recognize your voice and transcribe it into text. Microsoft is building an AI chatbot called Windows Copilot straight into its operating system. But most computing work today exercises more traditional parts of a processor, its central processing unit (CPU) and graphics processing unit (GPU) cores.

There’s a build-it-and-they-will-come possibility. Adding AI acceleration directly into the chip, as has already happened with smartphone processors and Apple M-series Mac processors, could encourage developers to write more software drawing on AI abilities.

GPUs are already pretty good for accelerating AI, though, and developers don’t have to wait for millions of us to upgrade our Windows PCs to take advantage of them. The GPU offers top AI performance on a PC, but the new AI-specific accelerator is good for low power, Rayfield said. Both can be used simultaneously for top performance, too.

Meteor Lake a key chip for Intel

Meteor Lake is important for other reasons, too. It’s designed for lower-power operation, and power consumption is arguably Intel’s single biggest competitive weakness compared with the Apple M-series processors. It’s got upgraded graphics acceleration, which is critical for gaming and important for some AI tasks, too.

The processor also is key to Intel’s yearslong turnaround effort. It’s the first big chip to be built with Intel 4, a new manufacturing process essential to catching up with chipmaking leaders Taiwan Semiconductor Manufacturing Co. (TSMC) and Samsung. And it employs new advanced manufacturing technology called Foveros that lets Intel stack multiple «chiplets» more flexibly and economically into a single more powerful processor.

Chipmakers are racing to tap into the AI revolution, few as successfully as Nvidia, which earlier in May reported a blowout quarter thanks to exploding demand for its highest-end AI chips. Intel sells data center AI chips, too, but has more of a focus on economy than performance.

In its PC processors, Intel calls its AI accelerator a vision processing unit, or VPU, a product family and name that stems from its 2016 acquisition of AI chipmaker Movidius.

These days, a variation called generative AI can create realistic imagery and human-sounding text. Although Meteor Lake can run one such image generator, Stable Diffusion, large AI language models like ChatGPT simply don’t fit on a laptop.

There’s a lot of work to change that, though. Facebook’s LLaMA and Google’s PaLM 2 both are large language models designed to scale down to smaller «client» devices like PCs and even phones with much less memory.

«AI in the cloud … has challenges with latency, privacy, security, and it’s fundamentally expensive,» Rayfield said. «Over time, as we can improve compute efficiency, more of this is migrating to the client.»

Technologies

TikTok to Let Apple Music Users Stream Full Songs Without Ever Leaving the App

TikTok and Apple Music come together to introduce two new features to the music listening experience.

If you’ve ever scrolled TikTok, caught a snippet of a tune, and thought, «I wish I could play this song all the way through,» this is for you. TikTok and Apple Music announced on Wednesday that they have partnered on two new features, Play Full Song and Listening Party. The goal is to offer listeners a seamless music listening experience without ever leaving the social media app.

Apple Music subscribers who discover a song on their TikTok For You Page or on the Sound Detail Page will be able to click Play Full Song to open the Apple Music player and listen to the track in its entirety. From there, subscribers to the music streaming service will be able to save the song as a favorite, add it to a playlist on Apple Music and listen to a customized stream of recommended songs.

When a full-length song is played, the stream will pay artists through Apple Music.

«Tapping into the music you love should feel effortless,» Ole Obermann, co-head of Apple Music, said in a statement. «With Play Full Song, Apple Music subscribers can move easily from discovering a track on TikTok to listening to it in full instantly, without breaking the flow. This integration not only makes it easier for fans to discover, listen to, and engage with the artists they love, but also creates a powerful new pathway for artists — turning moments of discovery into deeper connection and sustained engagement in one simple, seamless experience.»

Listening Party sounds somewhat like Spotify’s feature of the same name. Fans join a shared, real-time session where they listen to the same tracks together and interact live, with the songs streamed through Apple Music inside TikTok. Musicians can also join and chat with their fans.

«TikTok is where music discovery and culture move at the speed of the community,» Tracy Gardner, global head of music business development at TikTok, said in a statement. «Thanks to Apple Music, Play Full Song gives fans a seamless way to go from discovery to full-length listening, and Listening Party provides a shared place to experience music together in real time. It’s all about bringing artists and fans closer, and turning shared moments into lasting connections.»

Play Full Song and Listening Party will launch globally on TikTok over the next few weeks.

Technologies

AI Chatbots Are Making People All Think the Same, Study Says

A new paper argues that humans are losing varied ways of thinking due to the use of chatbots, and that’s concerning.

Part of what makes us human is the unique ways we think and solve problems. But using large language models like ChatGPT might be eroding this uniqueness and leading humans to think and communicate the same way, according to a group of scientists and psychologists who have co-authored a new opinion paper.

«Individuals differ in how they write, reason, and view the world,» Zhivar Sourati, a computer scientist at the University of Southern California and first author of the paper, said in a statement.

«When these differences are mediated by the same LLMs, their distinct linguistic style, perspective and reasoning strategies become homogenized, producing standardized expressions and thoughts across users,» Sourati continued.

The paper, published Wednesday in the journal Trends in Cognitive Sciences, examines how hundreds of millions of people worldwide use the same handful of chatbots and what that means for our individuality.

Thinking inside the box

Pew Research found that one-third of all Americans used ChatGPT last year, double the 2023 figure. And chatbot use is much more common among teens: Two-thirds say they use chatbots, and almost a third use them daily.

Businesses are also going all in on artificial intelligence. Stanford found that 78% of organizations reported using AI in 2024, up from 55% in 2023.

So we’re using AI a lot. But the danger is that we could lose the diversity in the ways we think. The team points out that LLMs generate writing that varies less than what people come up with on their own.

Part of the reason LLMs may be pushing homogenized thought, according to the paper’s authors, is the data used to train them.

«Because LLMs are trained to capture and reproduce statistical regularities in their training data, which often overrepresent dominant languages and ideologies, their outputs often mirror a narrow and skewed slice of human experience,» Sourati says.

Why diverse thinking matters

There’s a good reason why the authors warn against this trend. Homogenized thought reduces pluralism, which is essentially the idea that multiple perspectives are good for society as a whole.

«This value of pluralism is rooted in the long-held principle that sound judgment requires exposure to varied thought,» the authors write in the paper. «Unchecked, this homogenization risks flattening the cognitive landscapes that drive collective intelligence and adaptability.»

So we use different ways of thinking to figure out more solutions to a problem. If we lose the ability to think and communicate differently, it could affect how we adapt to new situations.

«The concern is not just that LLMs shape how people write or speak, but that they subtly redefine what counts as credible speech, correct perspective, or even good reasoning,» Sourati says.

The authors also say that this trend even impacts people who don’t use chatbots.

«If a lot of people around me are thinking and speaking in a certain way, and I do things differently, I would feel a pressure to align with them, because it would seem like a more credible or socially acceptable way of expressing my ideas,» Sourati says.

