Technologies

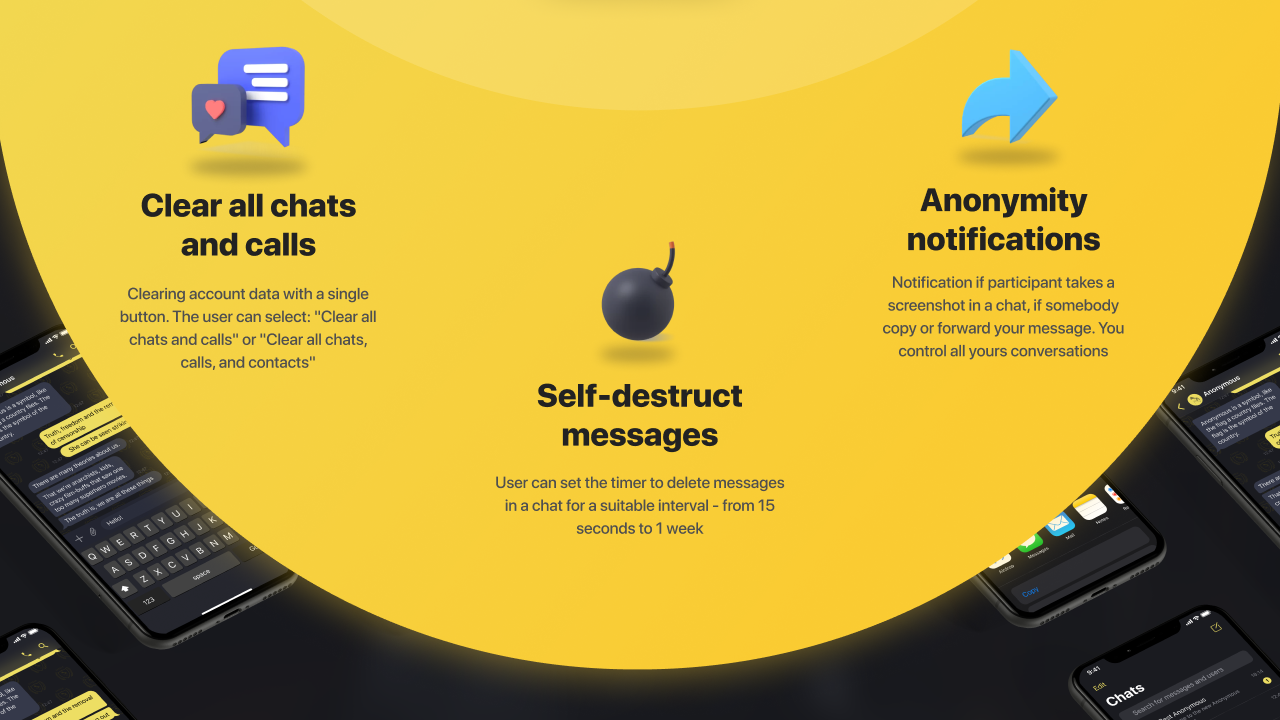

Google Upgrades Maps Features With More Gemini and Faster Photo Uploads

Google Maps strengthens its crowdsourcing efforts for its 500 million contributors.

Google announced three new features for Maps on Tuesday that should streamline sharing your experiences. Although Maps is a strong application on its own, Google relies on everyday users to contribute reviews, photos and videos so that others doing research can make more informed decisions about places they plan to visit. With the new updates to Google Maps, you can access your photos faster when contributing information about places you’ve been. You can also choose to have Google’s AI model, Gemini, caption your photos, and you can more quickly check the contributions you’ve made in the past.

New photo and video recommendations

It’s not hard to share photos or videos for a location on Google Maps, but the app will now offer photo and video suggestions from your saved images, if you give it permission to do so. The new feature will appear on the Contribute tab at the bottom of the Maps app. When scrolling through the view, you’ll see photo and video recommendations or the option to upload other photos.

How the specific photo and video recommendations are determined isn’t clear, but the new feature will likely use a photo’s geolocation if that setting is enabled in your camera’s settings.

A Google representative didn’t immediately respond to a request for comment.

This feature is now available globally on Android and will expand to iOS in the coming months.

Gemini will auto-caption your photos

Google’s giving your photos some Gemini power by automatically analyzing and captioning them once you’ve selected them to share. This could be helpful in situations where you have selected several photos you don’t care to caption.

If you don’t like what Gemini comes up with, you can edit or remove the caption completely before publishing your photos to Maps.

Gemini captions are available in English on iOS and will expand to Android and to other languages globally in the future.

New ways to view your contributions

You can now show off your prior contributions to Google’s Local Guide community program.

When you contribute, you gain points, and the more you contribute, the more you can level up as a Local Guide. All your points and badges are now prominently displayed on your profile. Google’s also adding gold profiles for high-level contributors, so you know you’re reading reviews from experienced users.

The new contributor updates are rolling out now on Android, iOS and desktop.

This New Health-Tracking Pet Collar Is Like a Smartwatch for Dogs and Cats

Tractive announces two new smart collars armed with GPS tracking, AI-powered health monitoring and other tech tools.

Our pets can’t speak up and tell us how they’re feeling, or why and where they are hiding. Tractive, an Austria- and Seattle-based tech company that creates GPS tracking devices for pets, announced on Wednesday two new smart collars that, according to the press release, “will redefine pet care for millions of families.”

Is your pet stressed, breathing unusually or scratching too much? Much like the basic health-tracking features you can find on a smartwatch, the collars — the Cat 6 Mini ($79) and Dog 6 XL ($89) — are designed to track this behavior and communicate the issues to help maintain your dog or cat’s quality of life.

“Pets can’t tell us when something is wrong, but their bodies can,” Michael Hurnaus, CEO and founder of Tractive, said in a statement. “With cutting-edge sensors on every tracker, learnings from millions of pets and AI-powered insights, we’re turning one of the world’s largest pet data platforms into clear, simple information so pet parents can act sooner and care even better.”

Tracking collars have usually been aimed at dogs. Tractive’s new Cat 6 Mini collar aims to provide the same service for your feline friend. You can use it to monitor your cat’s respiratory rate and resting heart rate and identify any health concerns early. It’s expected to ship on May 31.

The Dog 6 XL collar, an upgrade from the company’s previous dog wearable, is designed for dogs weighing over 55 pounds. It’s more durable for outdoor use and offers up to four weeks of battery life between charges. It comes equipped with a scratch-monitoring system that flags unusual scratching behavior caused by allergies, skin irritants and other stressors.

You can also use the app to access your pet’s travels and mark safe zones regarding walks, entries and exits. An AI-powered health hub displays your pet’s overall health stats and also acts as a GPS tracker in case your dog or cat goes missing.

How would a veterinarian interact with the data collected on the device?

A Tractive representative told CNET, “In our experience, veterinarians are most interested in baseline resting heart and respiratory rate, so it’s less about monitoring these vitals in real time during recovery from anesthesia/acute care and more about understanding if the baseline is changing day to day to identify the onset of new conditions or manage existing ones.”

Even though the collars use a SIM card and require a strong cellular connection to work properly, they can capture activity, sleep and health data while offline. However, without connectivity, the devices “ultimately will not provide any utility,” the representative confirmed.

You’ll need to download the accompanying app and select a separate subscription plan at an added cost. The one-year plan costs $120, the two-year plan costs $168, and the five-year plan costs $300.
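To compare the plans, it can help to spread each price over the length of the plan. The sketch below uses only the prices quoted above; the function names are illustrative, and it assumes one plan per collar, billed up front, with no taxes, discounts or renewal changes.

```python
# Prices as quoted in the article, in US dollars.
COLLAR_PRICES = {"Cat 6 Mini": 79, "Dog 6 XL": 89}
PLAN_PRICES = {1: 120, 2: 168, 5: 300}  # plan length in years -> plan price


def monthly_cost(years: int) -> float:
    """Subscription price spread evenly over every month of the plan."""
    return PLAN_PRICES[years] / (years * 12)


def total_cost(collar: str, years: int) -> int:
    """Collar price plus one subscription plan of the given length (assumption: no other fees)."""
    return COLLAR_PRICES[collar] + PLAN_PRICES[years]


if __name__ == "__main__":
    for years in PLAN_PRICES:
        print(f"{years}-year plan: ${monthly_cost(years):.2f} per month")
    print(f"Dog 6 XL with five-year plan: ${total_cost('Dog 6 XL', 5)}")
```

Under these assumptions, the one-year plan works out to $10 a month, the two-year plan to $7 and the five-year plan to $5.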

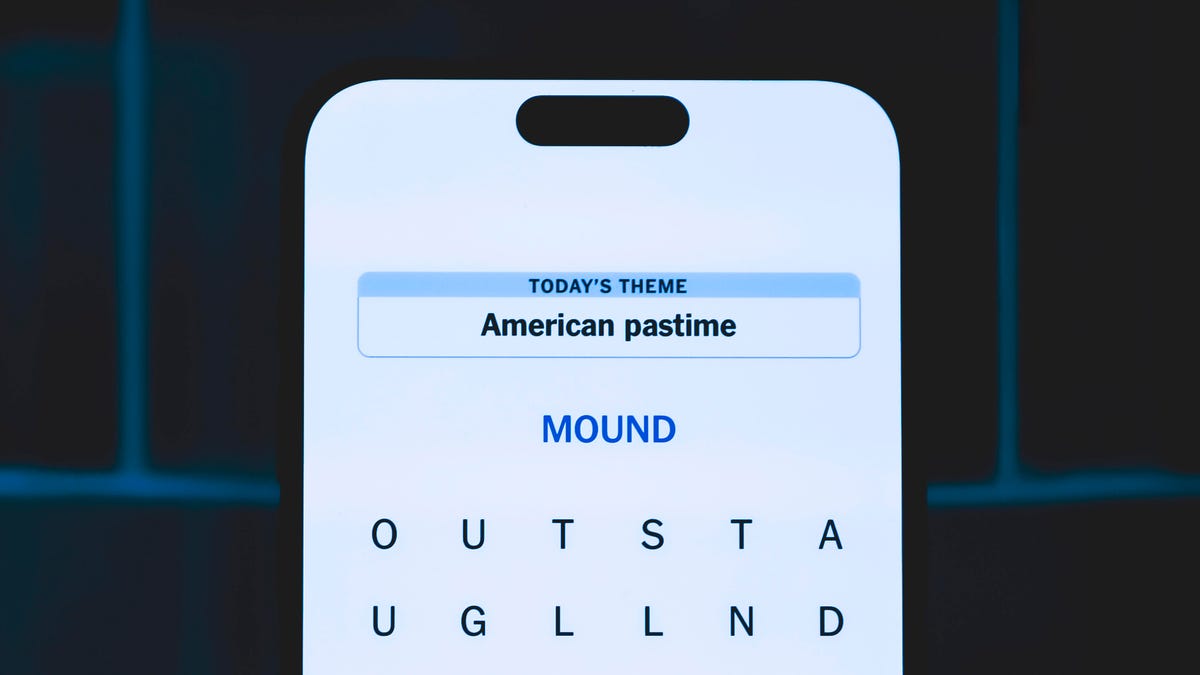

Today’s NYT Strands Hints, Answers and Help for April 9 #767

Here are hints and answers for the NYT Strands puzzle for April 9, No. 767.

Looking for the most recent Strands answer? Click here for our daily Strands hints, as well as our daily answers and hints for The New York Times Mini Crossword, Wordle, Connections and Connections: Sports Edition puzzles.

Today’s NYT Strands puzzle could be tough, unless you’re an artist. Even then, some of the answers are difficult to unscramble, so if you need hints and answers, read on.

I go into depth about the rules for Strands in this story.

If you’re looking for today’s Wordle, Connections and Mini Crossword answers, you can visit CNET’s NYT puzzle hints page.

Hint for today’s Strands puzzle

Today’s Strands theme is: In the paint.

If that doesn’t help you, here’s a clue: Hand me a brush.

Clue words to unlock in-game hints

Your goal is to find hidden words that fit the puzzle’s theme. If you’re stuck, find any words you can. Every time you find three words of four letters or more, Strands will reveal one of the theme words. These are the words I used to get those hints but any words of four or more letters that you find will work:

- COME, PATS, SPAT, SLOE, MEAN, LEAN, MANE, RATE, PEER, LATE, RATER
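The hint rule described above can be sketched as a small counter. This is only an illustration of the rule as stated here, not the game's actual code; the function name is made up, and it assumes that a repeated word counts once and that none of the words are theme answers.

```python
# Clue words listed in the article.
CLUE_WORDS = [
    "COME", "PATS", "SPAT", "SLOE", "MEAN", "LEAN",
    "MANE", "RATE", "PEER", "LATE", "RATER",
]


def hints_earned(words, min_length=4, words_per_hint=3):
    """Count hints unlocked: one per three distinct words of four or more letters."""
    # Assumption: duplicates and shorter words do not count toward a hint.
    valid = {word.upper() for word in words if len(word) >= min_length}
    return len(valid) // words_per_hint


if __name__ == "__main__":
    print(hints_earned(CLUE_WORDS))
```

With the 11 words listed, the counter reaches three full groups of three, so three hints would be unlocked under this reading of the rule.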

Answers for today’s Strands puzzle

These are the answers that tie into the theme. The goal of the puzzle is to find them all, including the spangram, a theme word that reaches from one side of the puzzle to the other. When you have all of them (I originally thought there were always eight but learned that the number can vary), every letter on the board will be used. Here are the nonspangram answers:

- FRESCO, PASTEL, ENAMEL, ACRYLIC, TEMPERA, WATERCOLOR

Today’s Strands spangram

Today’s Strands spangram is MEDIUM, the art term! To find it, start with the M that’s four letters down in the far-left column, and travel straight across.
