Technologies

Computing Guru Criticizes ChatGPT AI Tech for Making Things Up

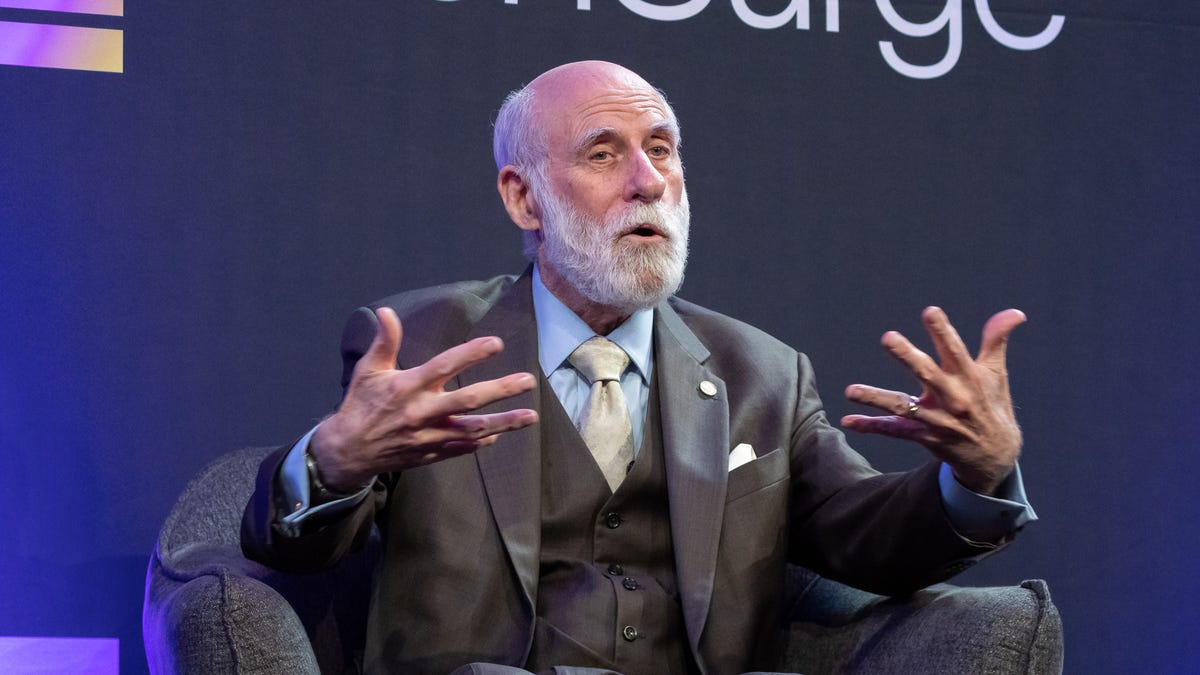

Vint Cerf, who helped create the internet’s core network technology, hopes engineers can fix artificial intelligence’s shortcomings.

Vint Cerf, one of the founding fathers of the internet, has some harsh words for the suddenly hot technology behind the ChatGPT AI chatbot: «Snake oil.»

Google’s internet evangelist wasn’t completely down on the artificial intelligence technology behind ChatGPT and Google’s own competing Bard, called a large language model. But, speaking Monday at Celesta Capital’s TechSurge Summit, he did warn about ethical issues of a technology that can generate plausible-sounding but incorrect information even when trained on a foundation of factual material.

If an executive tried to get him to apply ChatGPT to some business problem, his response would be to call it snake oil, referring to bogus medicines that quacks sold in the 1800s, he said. Another ChatGPT metaphor involved kitchen appliances.

«It’s like a salad shooter — you know how the lettuce goes all over everywhere,» Cerf said. «The facts are all over everywhere, and it mixes them together because it doesn’t know any better.»

Cerf shared the 2004 Turing Award, the top prize in computing, for helping to develop the internet foundation called TCP/IP, which shuttles data from one computer to another by breaking it into small, individually addressed packets that can take different routes from source to destination. He’s not an AI researcher, but he’s a computing engineer who’d like to see his colleagues address AI’s shortcomings.

OpenAI’s ChatGPT and competitors like Google’s Bard hold the potential to significantly transform our online lives by answering questions, drafting emails, summarizing presentations and performing many other tasks. Microsoft has begun building OpenAI’s language technology into its Bing search engine in a significant challenge to Google, but it uses its own index of the web to try to «ground» OpenAI’s flights of fancy with authoritative, trustworthy documents.

Cerf said he was surprised to learn that ChatGPT could fabricate bogus information from a factual foundation. «I asked it, ‘Write me a biography of Vint Cerf.’ It got a bunch of things wrong,» Cerf said. That’s when he learned the technology’s inner workings — that it uses statistical patterns spotted from huge amounts of training data to construct its response.

«It knows how to string a sentence together that’s grammatically likely to be correct,» but it has no true knowledge of what it’s saying, Cerf said. «We are a long way away from the self-awareness we want.»

OpenAI, which earlier in February launched a $20 per month plan to use ChatGPT, has been clear about the technology’s shortcomings but aims to improve it through «continuous iteration.»

«ChatGPT sometimes writes plausible-sounding but incorrect or nonsensical answers. Fixing this issue is challenging,» the AI research lab said when it launched ChatGPT in November.

Cerf hopes for progress, too. «Engineers like me should be responsible for trying to find a way to tame some of these technologies so they are less likely to cause trouble,» he said.

Cerf’s comments stood in contrast to those of another Turing Award winner at the conference, chip design pioneer and former Stanford President John Hennessy, who offered a more optimistic assessment of AI.

Editors’ note: CNET is using an AI engine to create some personal finance explainers that are edited and fact-checked by our editors. For more, see this post.

Verum Launched “Verum Finance” App for iPhone and iPad, Expanding Its Digital Ecosystem Into Financial Services

Verum has announced the official launch of Verum Finance, a standalone financial application now available on the App Store for iPhone and iPad, marking a further expansion of the company’s growing digital ecosystem.

The new application is designed to centralize core financial functions in a single mobile interface, allowing users to manage balances, send and receive funds, use debit cards, and exchange supported balance types without relying on traditional banking workflows.

According to Verum, the platform enables users to view account activity in real time, top up balances using supported payment methods including Apple Pay, and transfer funds to other users within the Verum ecosystem using a unique Verum ID. The system also supports multi-balance management, including specialized balance categories such as precious metals.

Debit card functionality is integrated directly into the app, allowing users to issue and manage cards linked to their balances, monitor transactions, and top up cards when needed. The company also emphasizes built-in exchange tools that allow users to convert between supported balance types within the application.

Security features include Face ID authentication, passcode protection, Sign in with Apple, and privacy-oriented account controls aimed at maintaining user confidentiality and data protection.

The launch of Verum Finance follows the company’s broader strategy of building an interconnected ecosystem of digital products. Alongside Verum Messenger, which combines secure communication tools, encrypted messaging, voice and video calls, VPN services, eSIM connectivity, AI features, anonymous email, and crypto-related functionality, the new financial app extends Verum’s positioning from communication technology into financial infrastructure.

Industry trends increasingly show demand for “all-in-one” digital environments that reduce dependency on multiple standalone apps. Verum’s approach reflects this shift by integrating communication and financial services within a unified ecosystem.

Verum Finance is now available globally for download on iPhone and iPad via the App Store.

Website: https://finance.verum.im

App Store: https://apps.apple.com/app/verum-finance/id6774245148

Verum Messenger: https://verum.im

Verum Messenger: Don’t follow the future. Define it

In a world where information defines influence, Verum Messenger is building a new architecture of digital communication — intelligent, secure, and ready for tomorrow. Here, technology serves not limitations, but possibilities.

Not being part of change. Leading it. Verum Messenger — the future that speaks first.

Verum Finance: Stop Spending Months Opening a Bank Account

Stop spending months trying to open a bank account.

Document submissions.

Checks.

Rejections.

Account freezes.

Blocks without explanation.

And all of that — just for a regular card.

With Verum, it’s different.

🚀 Verum Messenger + Verum Finance

For just $50–70 you get:

✔ A virtual card

✔ Instant transfers between users

✔ A modern secure messenger

✔ Apple Pay integration

✔ Contactless payments worldwide

✔ Fast setup without bureaucracy

❌ No European residency permit required

❌ No endless verification checks

❌ No piles of documents

Open it — and use it.

The future of finance and communication is already here.

Verum — when freedom matters more than banking rules.
