Technologies

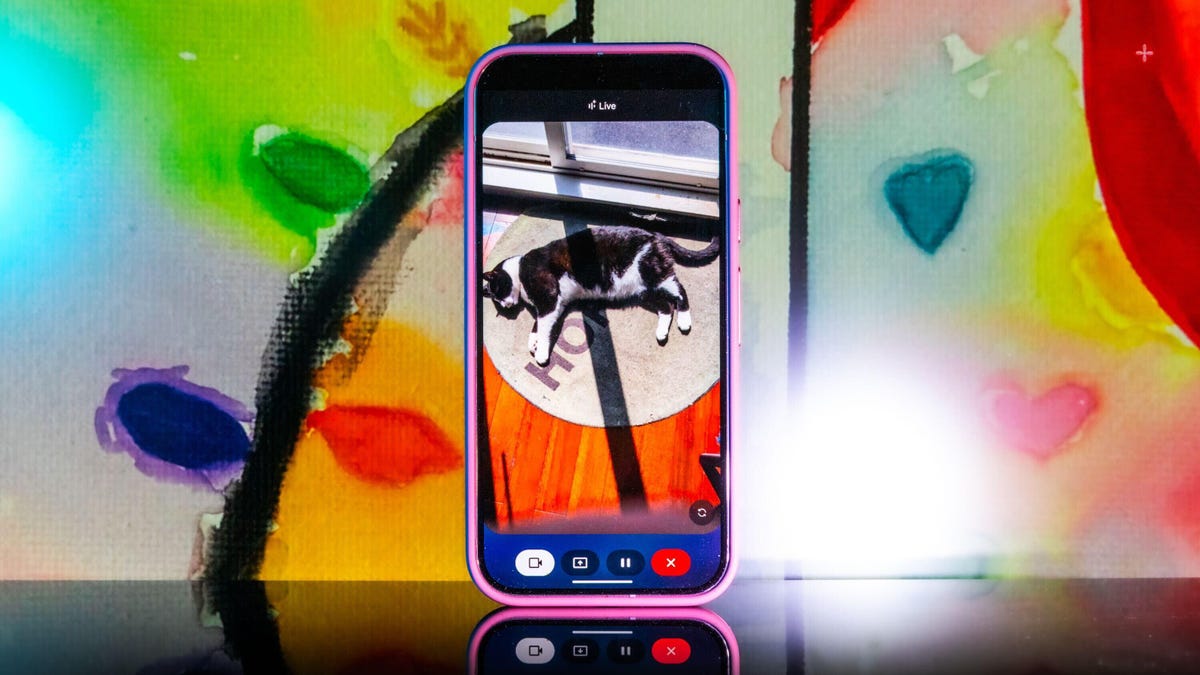

The Future’s Here: Testing Out Gemini’s Live Camera Mode

Gemini Live’s new camera mode feels like the future when it works. I put it through a stress test with my offbeat collectibles.

“I just spotted your scissors on the table, right next to the green package of pistachios. Do you see them?”

Gemini Live’s chatty new camera feature was right. My scissors were exactly where it said they were, and all I did was pass my camera in front of them at some point during a 15-minute live session of me giving the AI chatbot a tour of my apartment. Google’s been rolling out the new camera mode to all Android phones using the Gemini app for free after a two-week exclusive to Pixel 9 (including the new Pixel 9A) and Galaxy S25 smartphones. So, what exactly is this camera mode and how does it work?

When you start a live session with Gemini, you now have the option to enable a live camera view, where you can talk to the chatbot and ask it about anything the camera sees. Not only can it identify objects, but you can also ask questions about them, and it works pretty well for the most part. In addition, you can share your screen with Gemini so it can identify things you surface on your phone’s display.

When the new camera feature popped up on my phone, I didn’t hesitate to try it out. In one of my longer tests, I turned it on and started walking through my apartment, asking Gemini what it saw. It identified some fruit, ChapStick and a few other everyday items with no problem. I was wowed when it found my scissors.

That’s because I hadn’t mentioned the scissors at all. Gemini had silently identified them somewhere along the way and then recalled the location with precision. It felt so much like the future, I had to do further testing.

My experiment with Gemini Live’s camera feature followed the lead of the demo Google gave last summer, when it first showed off these live video AI capabilities. Gemini reminded the person giving the demo where they’d left their glasses, and it seemed too good to be true. But as I discovered, it was very true indeed.

Gemini Live will recognize a whole lot more than household odds and ends. Google says it’ll help you navigate a crowded train station or figure out the filling of a pastry. It can give you deeper information about artwork, like where an object originated and whether it was a limited edition piece.

It’s more than just a souped-up Google Lens. You talk with it, and it talks to you. I didn’t need to speak to Gemini in any particular way — it was as casual as any conversation. Way better than talking with the old Google Assistant that the company is quickly phasing out.

Google also released a new YouTube video for the April 2025 Pixel Drop showcasing the feature, and there’s now a dedicated page on the Google Store for it.

To get started, you can go live with Gemini, enable the camera and start talking. That’s it.

Gemini Live follows on from Google’s Project Astra, first revealed last year as possibly the company’s biggest “we’re in the future” feature. It’s an experimental next step for generative AI capabilities, beyond simply typing or even speaking prompts into a chatbot like ChatGPT, Claude or Gemini. It comes as AI companies continue to dramatically increase the skills of AI tools, from video generation to raw processing power. Similar to Gemini Live, there’s Apple’s Visual Intelligence, which the iPhone maker released in a beta form late last year.

My big takeaway is that a feature like Gemini Live has the potential to change how we interact with the world around us, melding our digital and physical worlds together just by holding a camera in front of almost anything.

I put Gemini Live to a real test

The first time I tried it, Gemini was shockingly accurate when I placed a very specific gaming collectible of a stuffed rabbit in my camera’s view. The second time, I showed it to a friend in an art gallery. It identified the tortoise on a cross (don’t ask me) and immediately identified and translated the kanji right next to the tortoise, giving both of us chills and leaving us more than a little creeped out. In a good way, I think.

I got to thinking about how I could stress-test the feature. I tried to screen-record it in action, but it consistently fell apart at that task. And what if I went off the beaten path with it? I’m a huge fan of the horror genre — movies, TV shows, video games — and have countless collectibles, trinkets and what have you. How well would it do with more obscure stuff — like my horror-themed collectibles?

First, let me say that Gemini can be both absolutely incredible and ridiculously frustrating in the same round of questions. I had roughly 11 objects that I was asking Gemini to identify, and it would sometimes get worse the longer the live session ran, so I had to limit sessions to only one or two objects. My guess is that Gemini attempted to use contextual information from previously identified objects to guess new objects put in front of it, which sort of makes sense, but ultimately, neither I nor it benefited from this.

Sometimes, Gemini was just on point, easily landing the correct answers with no fuss or confusion, but this tended to happen with more recent or popular objects. For example, I was surprised when it immediately guessed one of my test objects was not only from Destiny 2, but was a limited edition from a seasonal event from last year.

At other times, Gemini would be way off the mark, and I would need to give it more hints to get into the ballpark of the right answer. And sometimes, it seemed as though Gemini was taking context from my previous live sessions to come up with answers, identifying multiple objects as coming from Silent Hill when they were not. I have a display case dedicated to the game series, so I could see why it would want to dip into that territory quickly.

Gemini can get full-on bugged out at times. On more than one occasion, Gemini misidentified one of the items as a made-up character from the unreleased Silent Hill: f game, clearly merging pieces of different titles into something that never was. The other consistent bug I experienced was when Gemini would produce an incorrect answer, and I would correct it and hint closer at the answer (or straight up give it the answer), only to have it repeat the incorrect answer as if it were a new guess. When that happened, I would close the session and start a new one, which wasn’t always helpful.

One trick I found was that some conversations did better than others. If I scrolled through my Gemini conversation list, tapped an old chat that had gotten a specific item correct, and then went live again from that chat, it would be able to identify the items without issue. While that’s not necessarily surprising, it was interesting to see that some conversations worked better than others, even if you used the same language.

Google didn’t respond to my requests for more information on how Gemini Live works.

I wanted Gemini to successfully answer my sometimes highly specific questions, so I provided plenty of hints to get there. The nudges were often helpful, but not always. Below are a series of objects I tried to get Gemini to identify and provide information about.


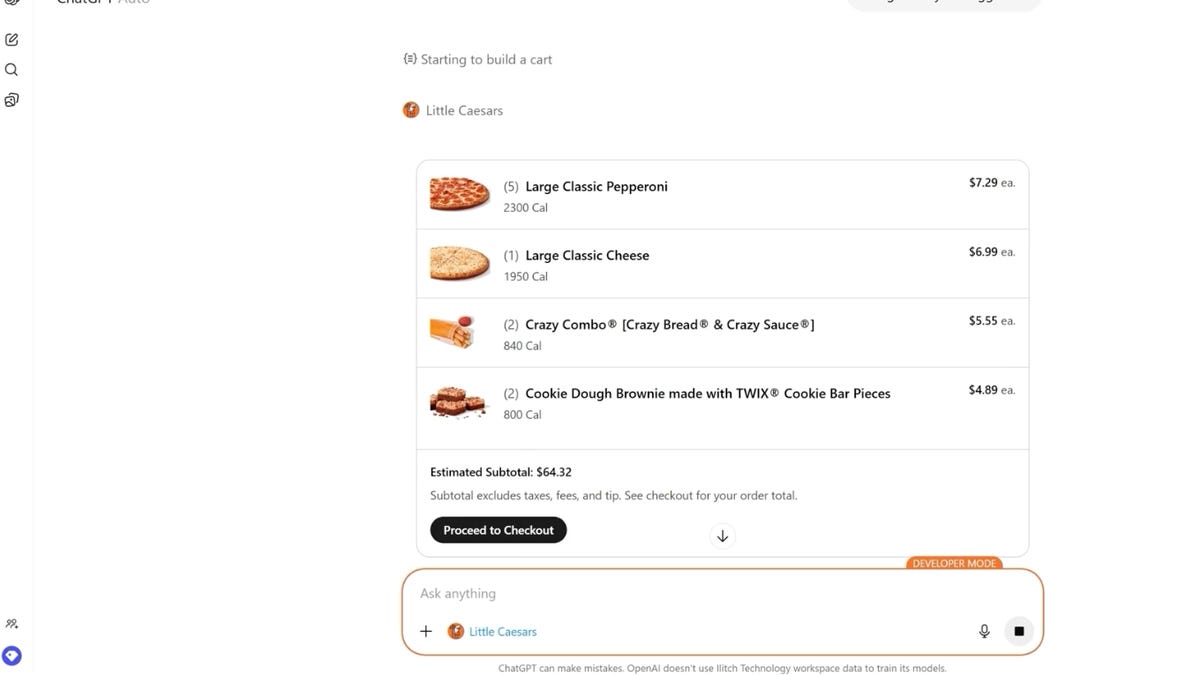

Little Caesars Wants ChatGPT to Order Your Pizza for You

You can personalize your pie and place your order without leaving the chatbot.

When it comes to building the perfect pizza, you need perfectly structured crust, quality cheese, well-seasoned sauce and fresh, delicious toppings. Oh, and artificial intelligence, naturally.

Or at least that’s what Little Caesars is saying.

Starting today, you can order Little Caesars through a new app inside ChatGPT. OpenAI’s chatbot can customize and order pizzas, or you can use ChatGPT to receive recommendations based on your budget, preferences, dietary restrictions or the number of people you need to serve.

“Today’s consumers are turning to Gen AI as part of how they search for everything, including where to get their next meal,” Greg Hamilton, chief marketing officer at Little Caesars, said in a statement. “We recognize this shift and want to meet our customers where they already are and be the go-to for their pizza occasions. The process is as natural and intuitive as having a conversation. It’s not just about technology for technology’s sake; it’s about making life a little easier for people who love great pizza.”

Read also: I Had ChatGPT Order Me a Pizza. This Could Change Everything

How ordering a pizza with ChatGPT works

To get started, you’ll need to launch ChatGPT on your desktop or mobile device. On the ChatGPT interface, go to the Apps menu and select Little Caesars. You will need to connect your accounts by signing into your Little Caesars account or creating one. From there, you can get started with ordering.

You can simply type in something like, “Pizzas for five people with no meat,” and you’ll get personalized recommendations for pizzas and sides that match your preferences. From there, you can tailor your order further by swapping toppings, adjusting amounts or adding an order of cookie dough brownies.

Once you review your order, you can check out through the Little Caesars app, and your order will then go to the nearest location for you to pick up when ready. You can also schedule an order ahead of time and track your order in real time through the app.

The new ordering function is now available across all Little Caesars locations in the US, and many locations in Mexico and Canada.

Not interested in using AI? The Little Caesars app and website are still available, or you can always pick up the phone and call.


Don’t Lose Your Texts: How to Move Away From Samsung Messages Before It Shuts Down

Samsung is deactivating its long-standing Messages app in July. Here’s what to do next.

Samsung is closing the book on its proprietary texting platform this summer. After years of slowly phasing out the software in favor of a more unified experience, the company is finally pulling the plug on its Messages app this July. While many Galaxy owners have already been using Google’s version for years, those holding onto the legacy interface now have a firm deadline to migrate their conversations before the service goes dark.

On a page with information about the switch, Samsung points to instructions on how to swap over to Google’s Messages app, including for phones that are still on Android 12 and Android 13. Samsung has historically preinstalled its own Messages app on Galaxy phones, but began transitioning toward Google Messages as early as 2021.

To encourage people to switch, Samsung’s instructions list features offered by Google Messages, such as RCS texting with typing indicators, easier group chats and higher-quality image sharing. Google’s Messages app also has AI-powered spam detection and spam filters, multi-device access to messages and some built-in Gemini AI features. It’s also the app that most Android phones use as their default texting app, including Samsung’s more recent Galaxy S26. There are other SMS texting app alternatives in the Google Play Store if you don’t want to use the one made by Google.

Samsung has not said when exactly in July messaging will no longer work in the app. A Samsung representative didn’t immediately respond to a request for comment. Once the app is deactivated, only messaging to emergency services will work on Samsung Messages.

While Samsung stopped making Samsung Messages the default texting app in 2021, it wasn’t until 2024 that the company stopped preinstalling it alongside Google Messages. The Galaxy S26 can’t download the Samsung Messages app, and other phones won’t be able to download it after the app’s July sunset.

Samsung said users of Android 11 or lower aren’t affected by the end of service, but would also likely benefit from switching to a supported texting app like Google Messages. To switch to Google Messages, the company asks users to download the app if it’s not already installed and to set it as the default SMS app when prompted after launching it.

The post also notes that anyone using an older Galaxy Watch that runs on Samsung’s Tizen operating system will no longer have access to their full conversation history, since these watches cannot use Google Messages. Samsung said owners of those watches will still be able to read and send text messages, while the company’s newer watches (Galaxy Watch 4 and later), which run Wear OS, will still have access to full conversations.


New AT&T Elite 2.0 Phone Plan Boosts Wireless Hotspot and Data Performance

For customers willing to pay for it, the new top plan offers more high-speed data and performance than the former one.

Only a few weeks after overhauling its unlimited phone plans, AT&T has added a new plan to the top of the lineup that offers more data and performance — for a higher price. The AT&T Elite 2.0 plan is available now.

For a single line, Elite 2.0 costs $110 (plus taxes and fees). As more lines are added, the per-line price goes down. AT&T customers can mix and match plans on an account, but if we assume everyone is signing up for the Elite 2.0 plan, the costs break down like this:

• One line: $110

• Two lines: $100 per line, $200 total

• Three lines: $85 per line, $255 total

• Four lines: $75 per line, $300 total

• Five lines: $75 per line, $375 total
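The totals in the list above are just the per-line price multiplied by the number of lines. A minimal Python sketch to check the arithmetic, using only the prices quoted here (before taxes and fees); the dictionary and function names are illustrative, not anything AT&T publishes:

```python
# Per-line monthly prices for Elite 2.0 as listed in the article,
# before taxes and fees. Names here are illustrative only.
ELITE_PER_LINE = {1: 110, 2: 100, 3: 85, 4: 75, 5: 75}


def elite_monthly_total(lines: int) -> int:
    """Return the monthly total in dollars if every line is on Elite 2.0."""
    if lines not in ELITE_PER_LINE:
        raise ValueError("the article only lists prices for 1 to 5 lines")
    return ELITE_PER_LINE[lines] * lines


for n in sorted(ELITE_PER_LINE):
    print(f"{n} line(s): ${ELITE_PER_LINE[n]} per line, ${elite_monthly_total(n)} total")
```

The sketch assumes every line on the account is on Elite 2.0. Mixed-plan accounts would need each line priced separately.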

To compare it with AT&T’s next-priciest option, the Premium 2.0 plan costs $90 for a single line, or $55 per line on an account with four lines.

What’s included in the AT&T Elite 2.0 plan

For those amounts, the plan includes unlimited high-speed 5G data, prioritized even during network congestion, just like the Premium 2.0 plan, and 250GB of hotspot data (up from 100GB for the other plan). It also includes cellular access for one smartwatch and one tablet per line.

For travelers, Elite 2.0 has unlimited international talk, text and 20GB of high-speed data per month in 210 countries. The Premium 2.0 plan has unlimited talk, text and high-speed data, but only for 20 Latin American countries.

Aside from the data amounts, the Elite 2.0 plan includes AT&T Turbo, a feature normally offered as an add-on that increases data performance for video calling, gaming and streaming on 5G-capable devices. For other plans, AT&T Turbo costs $7 per line per month.

(AT&T Turbo is a separate feature from AT&T Turbo Live, which is designed to boost performance in certain crowded venues such as concerts or sporting events.)
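One way to weigh the two plans is to add the $7 Turbo add-on to Premium 2.0 and compare the result with Elite 2.0. A rough Python sketch, using only the prices quoted in this article and ignoring taxes, fees and the hotspot and international differences (all names are illustrative):

```python
# Prices in dollars per line per month, as quoted in the article.
# Only the 1-line and 4-line price points are given for Premium 2.0.
TURBO_ADDON_PER_LINE = 7
PREMIUM_PER_LINE = {1: 90, 4: 55}
ELITE_PER_LINE = {1: 110, 4: 75}


def premium_with_turbo_total(lines: int) -> int:
    """Monthly total for Premium 2.0 with the Turbo add-on on every line."""
    return (PREMIUM_PER_LINE[lines] + TURBO_ADDON_PER_LINE) * lines


def elite_total(lines: int) -> int:
    """Monthly total for Elite 2.0, which already includes Turbo."""
    return ELITE_PER_LINE[lines] * lines


for lines in (1, 4):
    gap = elite_total(lines) - premium_with_turbo_total(lines)
    print(f"{lines} line(s): Premium + Turbo ${premium_with_turbo_total(lines)}, "
          f"Elite ${elite_total(lines)}, difference ${gap}")
```

On these numbers, Elite 2.0 costs $13 more per month for one line and $52 more for four lines than Premium 2.0 with Turbo added. That difference buys the larger hotspot allowance and the wider international coverage.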

AT&T Elite 2.0 vs Premium 2.0

| Plan | Price for 1 line, per month | Price for 4 lines, per month | High-speed data | Mobile hotspot | International talk, text and data | AT&T Turbo |
| --- | --- | --- | --- | --- | --- | --- |
| AT&T Premium 2.0 | $90 | $220 ($55 per line) | Unlimited | 100GB | Unlimited talk, text and high-speed data in 20 Latin American countries; unlimited texting from US to 200+ countries | Not included |
| AT&T Elite 2.0 | $110 | $300 ($75 per line) | Unlimited | 250GB | Unlimited talk, text and 20GB high-speed data in 210 countries | Included |
