Technologies

Adobe: Our New Generative AI Will Help Creative Pros, Not Hurt Them

The Firefly tools begin with image creation and font styling but soon will spread to Photoshop and other software.

In 2022, OpenAI’s Dall-E service wowed the world with the ability to turn text prompts into images. Now Adobe has built its own version of this generative AI technology with tools that begin a technological overhaul of the company’s widely used creative tools.

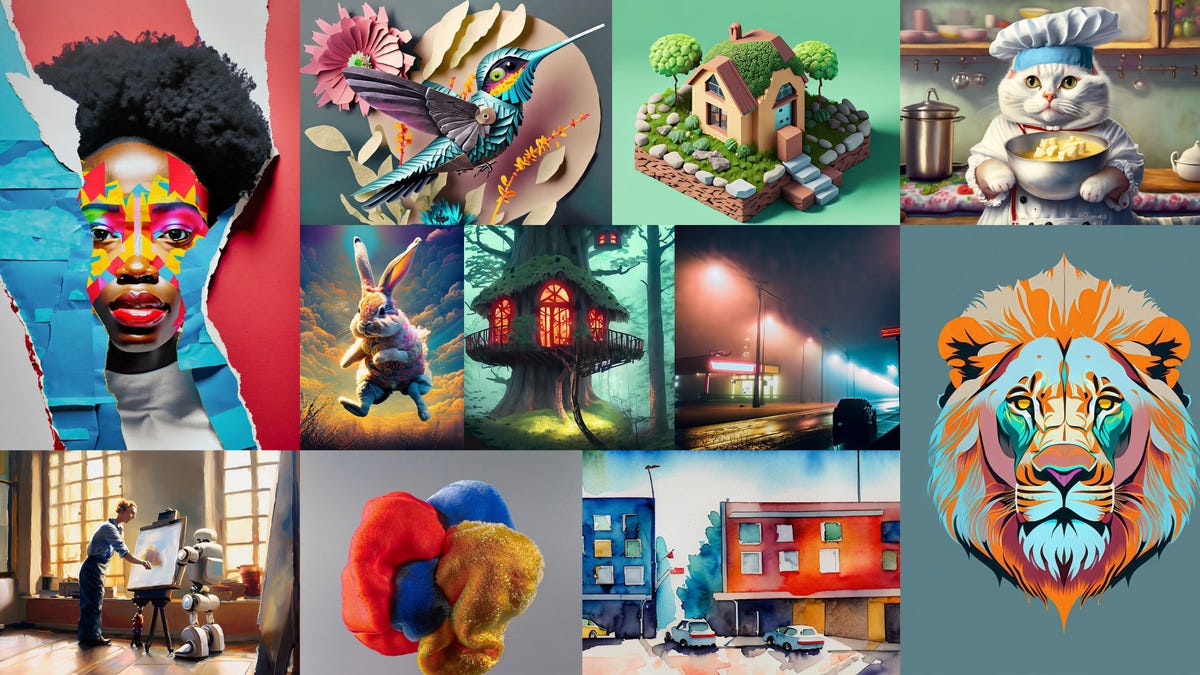

On Tuesday, Adobe released the first two members of its new Firefly collection of generative AI tools for beta testing. The first tool creates an image based on a text prompt like “fierce alligator leaping out of the water during a lightning storm,” with hundreds of styles that can tweak results. The other applies prompt-based styles to text, letting people create letters that look hairy, scaly, mossy or however else they want.

Firefly for now is available on Adobe’s website, but the company will build generative AI directly into other tools, starting with its Photoshop image editing software, Illustrator for designs and Adobe Express for creating quick videos. The company hasn’t revealed its pricing approach for the new tools.

Creative professionals might see Firefly as an incursion into their creative domain, going beyond mechanical tools like selecting colors and trimming videos into the heart and soul of their jobs. With AI showing new smarts when it comes to translating documents, interpreting tax code, composing music and creating travel itineraries, it’s not irrational for professionals to feel spooked.

Like other AI fans, though, Adobe sees artificial intelligence as the latest digital tool to amplify what humans can do. For example, Firefly eventually could let people use Adobe tools to tailor designs to individuals instead of just creating one design for a broad audience, said Alexandru Costin, vice president of Adobe’s generative AI work.

“We don’t think AI will replace creative creators. We think that creators using AI will be more competitive than creators not using AI. This is why we want to bring AI to the fingertips of all our user base,” Costin said. “The only way to succeed in AI is to embrace it.”

Adobe’s Firefly products are trained on the company’s own library of stock images, along with public domain and licensed works. The company has also worked to reduce biases in training data that AI models can reflect, such as the assumption that business executives are male.

AI is a “sea change”

Artificial intelligence uses processes inspired by human brains for computing tasks, trained to recognize patterns in complex real-world data instead of following traditional and rigid if-this-then-that programming. With advances in AI hardware, software, algorithms and training data, the field is advancing rapidly and touching just about every corner of tech.

The latest flavor of the technology, generative AI, can create new material on its own. The best-known example, ChatGPT, can write software, hold conversations and compose poetry. Microsoft is employing ChatGPT’s technology foundation, GPT-4, to boost Bing search results, offer email writing tips and help build presentations.

AI tools are sprouting up all over. Adobe has used AI for years under its Sensei brand for features like recognizing human subjects in Lightroom photos and transcribing speech into text in Premiere Pro videos. EbSynth applies a photo’s style to a video, HueMint creates color palettes and LeiaPix converts 2D photos into 3D scenes.

But it’s the new generative AI that brings new creative possibilities to digital art and design.

“It’s a sea change,” said Forrester analyst David Truog.

One of the first members of Adobe’s Firefly family of generative AI tools will style text based on prompts like “the letter N made of gold with intricate ornaments.”

Alpaca offers a Photoshop plug-in to generate art, and Aug X Labs can turn a text prompt into a video. Google’s MusicLM converts text to music, though it’s not open to the public. Dall-E captured the internet’s attention with its often fantastical imagery; the name marries Pixar’s WALL-E robot with the surrealist painter Salvador Dalí.

Related tools like Midjourney and Stability AI’s Stable Diffusion spread the technology even further.

If Adobe didn’t offer generative AI abilities, creative pros and artists would get them from somewhere else.

Indeed, Microsoft on Tuesday incorporated Dall-E technology into its Bing Image Creator service.

Training AI models isn’t easy, but it’s getting less difficult, at least for those with a healthy budget. Chip designer Nvidia on Tuesday announced that Adobe is using its new H100 Hopper GPU to train Firefly models through a new service called Picasso. Other Picasso customers include photo licensing companies Getty Images and Shutterstock.

Legal engineering

Developing good AI isn’t just a technical matter. Adobe set up Firefly to sidestep legal and social problems that AI poses.

For example, three artists sued Stability AI, Midjourney and DeviantArt in January over the use of their works in AI training data. They “seek to end this blatant and enormous infringement of their rights before their professions are eliminated by a computer program powered entirely by their hard work,” their lawsuit said.

Getty Images also sued Stability AI, alleging that it “unlawfully copied and processed millions of images protected by copyright.” Getty offers licenses to its enormous catalog of photos and other images for AI training, but Stability AI didn’t license the images. Stability AI, DeviantArt and Midjourney didn’t respond to requests for comment.

Adobe wants to assure artists that they needn’t worry about such problems. Adobe says Firefly has no copyright problems, no brand logos and no Mickey Mouse characters. “You don’t want to infringe somebody else’s copyright by mistake,” Costin said.

The approach is smart, Truog said.

“What Adobe is doing with Firefly is strategically very similar to what Apple did by introducing the iTunes Music Store 20 years ago,” he said. Back then, Napster’s music sharing showed demand for online music, but lawsuits from the recording industry crushed it. “Apple jumped in and designed a service that let people access music online but legally, more easily, and in a way that compensated the content creators instead of just stealing from them.”

Adobe also worked to counteract another problem that could make businesses leery: biased or stereotypical imagery.

It’s now up to Adobe to convince creative pros that it’s time to catch the AI wave.

“The introduction of digital creativity has increased the number of creative jobs, not decreased them, even if at the time it looked like a big threat,” Costin said. “We think the same thing will happen with generative AI.”

Editors’ note: CNET is using an AI engine to create some personal finance explainers that are edited and fact-checked by our editors.

Today’s NYT Connections: Sports Edition Hints and Answers for April 8, #562

Here are hints and the answers for the NYT Connections: Sports Edition puzzle for April 8 No. 562.

Today’s Connections: Sports Edition is a tough one. If you’re struggling with today’s puzzle but still want to solve it, read on for hints and the answers.

Connections: Sports Edition is published by The Athletic, the subscription-based sports journalism site owned by The Times. It doesn’t appear in the NYT Games app, but it does in The Athletic’s own app. Or you can play it for free online.

Hints for today’s Connections: Sports Edition groups

Here are four hints for the groupings in today’s Connections: Sports Edition puzzle, ranked from the easiest yellow group to the toughest (and sometimes bizarre) purple group.

Yellow group hint: Working out.

Green group hint: Cover your face.

Blue group hint: NFL players.

Purple group hint: Leap.

Answers for today’s Connections: Sports Edition groups

Yellow group: Exercises in singular form.

Green group: Sporting jobs that require masks.

Blue group: Hall of Fame defensive ends.

Purple group: ____ jump.

What are today’s Connections: Sports Edition answers?

The yellow words in today’s Connections

The theme is exercises in singular form. The four answers are crunch, plank, situp and squat.

The green words in today’s Connections

The theme is sporting jobs that require masks. The four answers are catcher, fencer, football player and goaltender.

The blue words in today’s Connections

The theme is Hall of Fame defensive ends. The four answers are Dent, Peppers, Strahan and Youngblood.

The purple words in today’s Connections

The theme is ____ jump. The four answers are broad, high, long and triple.

The $135M Google Data Settlement Site Is Live — See If You’re Eligible

Use the settlement website to select your preferred payment method, and you could end up as much as $100 richer.

You can now file a claim in the $135 million Google data settlement. The case centers on claims that Android devices transmitted user data without consent. Specifically, the class action lawsuit Taylor v. Google LLC contends that Google’s Android devices passively transferred cellular data to Google without user permission, even when the devices were idle. While not admitting fault, Google reached a preliminary settlement in January, agreeing to pay $135 million to about 100 million US Android phone users.

The official settlement website for the lawsuit is now live. The final approval hearing won’t occur until June 23, when the court will consider whether Google’s settlement is fair and listen to objections. After that, the court will decide whether to approve the $135 million settlement.

In the meantime, if you qualify and want to be paid as part of the settlement, you can select your preferred payment method on the official website. There, you can find information on speaking at the June 23 court hearing and on how to exclude yourself or write to the court to object by May 29.

As part of the settlement, Google will update its Google Play terms of service to clarify that certain data transfers do occur passively even when you’re not using your Android device, and that cellular data may be relied upon when not connected to Wi-Fi. This can’t always be disabled, but users will be asked to consent to it when setting up their device.

Google will also fully stop collecting data when its “allow background data usage” option is toggled off.

Who can be part of the settlement?

In order to join the Taylor v. Google LLC settlement, you must meet four qualifications:

- Be a living, individual human being in the US.

- Have used an Android mobile device with a cellular data plan.

- Have used the aforementioned device at any time from Nov. 12, 2017, to the date when the settlement receives final approval.

- Not be a class member in the Csupo v. Google LLC lawsuit, which is similar but specifically for California residents.

The final approval hearing is on June 23, so you can add your payment method until then. The hearing’s date and time may change, and any updates will be posted on the settlement website.

If you choose to do nothing, you will still be issued a settlement payment, but you may not receive it if you don’t select a payment method.

How much will I get paid?

It’s not currently known exactly how much each settlement class member will receive, but the cap is $100. Payments will be distributed after final court approval and after any appeals are resolved.

After all administrative, tax and attorney costs are paid, the settlement administrator will attempt to pay each member an equal amount. If any funds remain after payments are sent, and it’s economically feasible, they will be redistributed to members who were successfully paid in the first round. If it’s not economically feasible, the funds will go to an organization approved by the court.

Samsung’s Galaxy Watch Ultra 2 Might Come in 5G and 4G Cellular Models

If the rumor proves true, the 5G Galaxy Watch Ultra 2 would rival the 5G-enabled $799 Apple Watch Ultra 3 that debuted last fall.

Samsung’s next high-end Galaxy Watch could support faster 5G speeds, but if this leak is true, it will depend on where you live. The rumored Samsung Galaxy Watch Ultra 2 might come in 5G and 4G cellular models, with availability for each smartwatch depending on the country.

According to the Dutch website Galaxy Club (and spotted by SamMobile), Samsung’s servers may have revealed a series of model numbers that point to 5G, 4G and Wi-Fi-enabled editions of the next Galaxy Watch Ultra, which would succeed the original model that debuted in 2024.

A representative for Samsung did not immediately respond to a request for comment.

The Galaxy Club website speculates that the 5G edition would be sold in the US and Korean markets, while the 4G edition would sell in the rest of the world. In the US, a 5G version of the Galaxy Watch Ultra 2 would rival the 5G-enabled $799 Apple Watch Ultra 3, which debuted last fall. The 4G edition would have broader compatibility worldwide, since 4G networks are far more established.

It will likely be a few months until we hear anything official about the Galaxy Watch Ultra 2. Samsung typically unveils its new watches in the summer alongside its Galaxy Z Fold and Z Flip foldable phones. Last year, Samsung unveiled the Galaxy Watch 8 and the Galaxy Watch 8 Classic, but otherwise left the prior 2024 Ultra in the lineup for those looking for a larger 47mm smartwatch.