Technologies

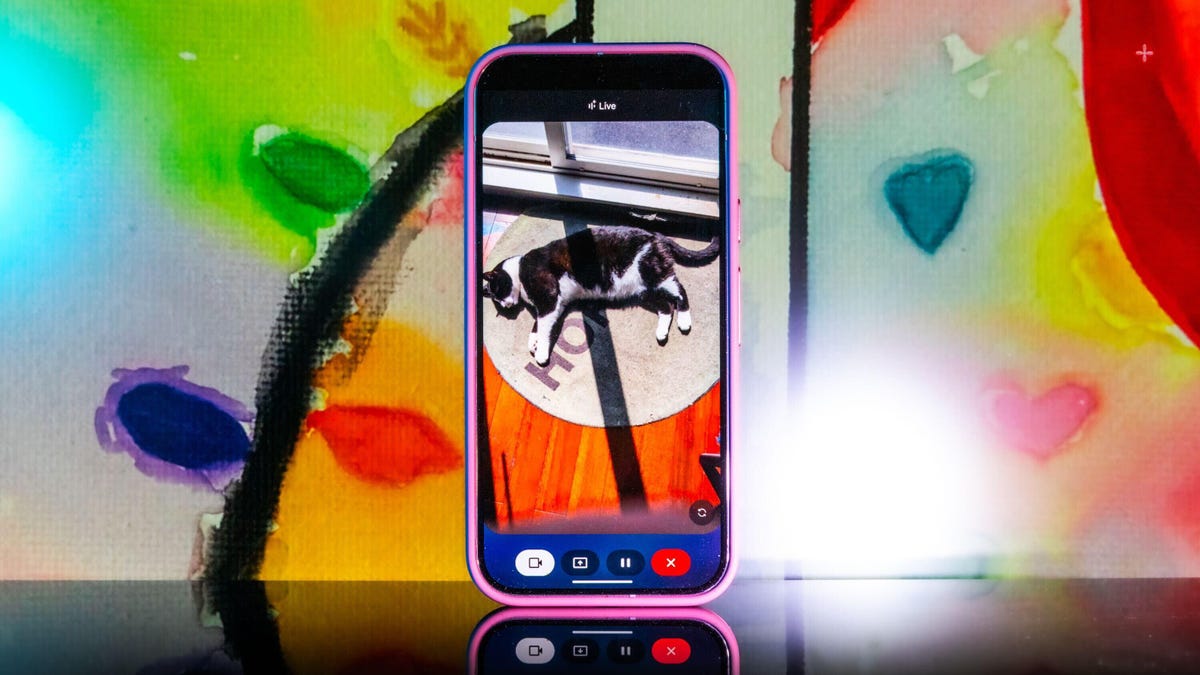

Gemini Live’s Camera Mode Feels Like the Future, and Now It’s Available for iOS

Gemini Live’s camera mode feels like the future, and it’s now available on the iPhone.

While Pixel 9 and Samsung Galaxy S25 owners have had access to Gemini Live’s camera mode for a while, Google announced during its I/O conference earlier this month that the feature has started rolling out to all Android and iOS users. The big news here is that iPhone owners now have access to one of the coolest AI features we’ve seen in a while, especially since all other Android users supposedly got access to the camera mode back in April.

If you’re unaware of what camera mode is, put simply, Google gave Gemini the ability to see: it can recognize objects that you put in front of your camera.

It’s not just a party trick, either. Not only can it identify objects, but you can also ask questions about them — and it works pretty well for the most part. In addition, you can share your screen with Gemini so it can identify things you surface on your phone’s display. When you start a live session with Gemini, you now have the option to enable a live camera view, where you can talk to the chatbot and ask it about anything the camera sees.

I spent some time with it when it showed up on my Pixel 9 Pro XL in early April and was pretty wowed overall. I was most impressed when I asked Gemini where I misplaced my scissors during one of my initial tests.

“I just spotted your scissors on the table, right next to the green package of pistachios. Do you see them?”

Gemini Live’s chatty new camera feature was right. My scissors were exactly where it said they were, and all I did was pass my camera in front of them at some point during a 15-minute live session of me giving the AI chatbot a tour of my apartment.

When the new camera feature popped up on my phone, I didn’t hesitate to try it out. In one of my longer tests, I turned it on and started walking through my apartment, asking Gemini what it saw. It identified some fruit, ChapStick and a few other everyday items with no problem. I was wowed when it found my scissors.

That’s because I hadn’t mentioned the scissors at all. Gemini had silently identified them somewhere along the way and then recalled the location with precision. It felt so much like the future, I had to do further testing.

My experiment with Gemini Live’s camera feature followed the lead of the demo Google gave last summer when it first showed off these live video AI capabilities. Gemini reminded the person giving the demo where they’d left their glasses, and it seemed too good to be true. But as I discovered, it was very true indeed.

Gemini Live will recognize a whole lot more than household odds and ends. Google says it’ll help you navigate a crowded train station or figure out the filling of a pastry. It can give you deeper information about artwork, like where an object originated and whether it was a limited edition piece.

It’s more than just a souped-up Google Lens. You talk with it, and it talks to you. I didn’t need to speak to Gemini in any particular way — it was as casual as any conversation. Way better than talking with the old Google Assistant that the company is quickly phasing out.

Google also released a new YouTube video for the April 2025 Pixel Drop showcasing the feature, and there’s now a dedicated page on the Google Store for it.

To get started, you can go live with Gemini, enable the camera and start talking. That’s it.

Gemini Live follows on from Google’s Project Astra, first revealed last year as possibly the company’s biggest “we’re in the future” feature, an experimental next step for generative AI capabilities, beyond simply typing or even speaking prompts into a chatbot like ChatGPT, Claude or Gemini. It comes as AI companies continue to dramatically increase the skills of AI tools, from video generation to raw processing power. Similar to Gemini Live, there’s Apple’s Visual Intelligence, which the iPhone maker released in beta form late last year.

My big takeaway is that a feature like Gemini Live has the potential to change how we interact with the world around us, melding our digital and physical worlds together just by holding a camera in front of almost anything.

I put Gemini Live to a real test

The first time I tried it, Gemini was shockingly accurate when I placed a very specific gaming collectible of a stuffed rabbit in my camera’s view. The second time, I showed it to a friend in an art gallery. It identified the tortoise on a cross (don’t ask me) and immediately identified and translated the kanji right next to the tortoise, giving both of us chills and leaving us more than a little creeped out. In a good way, I think.

I got to thinking about how I could stress-test the feature. I tried to screen-record it in action, but it consistently fell apart at that task. And what if I went off the beaten path with it? I’m a huge fan of the horror genre — movies, TV shows, video games — and have countless collectibles, trinkets and what have you. How well would it do with more obscure stuff — like my horror-themed collectibles?

First, let me say that Gemini can be both absolutely incredible and ridiculously frustrating in the same round of questions. I had roughly 11 objects that I was asking Gemini to identify, and it would sometimes get worse the longer the live session ran, so I had to limit sessions to only one or two objects. My guess is that Gemini attempted to use contextual information from previously identified objects to guess new objects put in front of it, which sort of makes sense, but ultimately, neither I nor it benefited from this.

Sometimes, Gemini was just on point, easily landing the correct answers with no fuss or confusion, but this tended to happen with more recent or popular objects. For example, I was surprised when it immediately guessed one of my test objects was not only from Destiny 2, but was a limited edition from a seasonal event from last year.

At other times, Gemini would be way off the mark, and I would need to give it more hints to get into the ballpark of the right answer. And sometimes, it seemed as though Gemini was taking context from my previous live sessions to come up with answers, identifying multiple objects as coming from Silent Hill when they were not. I have a display case dedicated to the game series, so I could see why it would want to dip into that territory quickly.

Gemini can get full-on bugged out at times. On more than one occasion, Gemini misidentified one of the items as a made-up character from the unreleased Silent Hill: f game, clearly merging pieces of different titles into something that never was. The other consistent bug I experienced was when Gemini would produce an incorrect answer, and I would correct it and hint closer at the answer, or straight up give it the answer, only to have it repeat the incorrect answer as if it were a new guess. When that happened, I would close the session and start a new one, which wasn’t always helpful.

One trick I found was that some conversations did better than others. If I scrolled through my Gemini conversation list, tapped an old chat that had gotten a specific item correct, and then went live again from that chat, it would be able to identify the items without issue. While that’s not necessarily surprising, it was interesting to see that some conversations worked better than others, even if you used the same language.

Google didn’t respond to my requests for more information on how Gemini Live works.

I wanted Gemini to successfully answer my sometimes highly specific questions, so I provided plenty of hints to get there. The nudges were often helpful, but not always. Below is a series of objects I tried to get Gemini to identify and provide information about.

My Camera Test: Comparing the $499 Pixel 10A With the Galaxy S25 FE, Motorola Edge

The Pixel 10A’s cameras are similar to those on the 9A, but the phone still performs quite well compared to others in its price range.

Google’s $499 Pixel 10A uses nearly the same cameras as last year’s Pixel 9A, but I wanted to see how its photos directly match up to its midrange Android rivals: the $650 Samsung Galaxy S25 FE and the $550 Motorola Edge.

I traveled with all three phones around St. Petersburg, Florida, checking how flexible each was in different environments, from bright outdoor settings to an indoor coffee shop and a brewery in the evening. All three environments can be challenging for the small image sensors on each phone.

While I find the cameras on all three phones to have different strengths and weaknesses depending on the setting, I’m quite impressed with how the Pixel 10A keeps up. In my tests, the photos include lots of detail, even though certain settings appear to involve a lot of processing to improve them.

Wide and telephoto cameras

Starting with photos taken on the sidewalk in downtown St. Petersburg, I notice that all three phones handle bright sunlight slightly differently, especially how it’s depicted on the street.

For the Pixel 10A, the sun leaves a slight overexposed spot over the Bay First sign at the top of the frame, but it stays fairly contained, keeping the focus on the rest of the streetscape. Zooming in, you can see the Century 21 location, but the street is captured in the most detail, with the phone’s camera maintaining the pavement’s natural gray color.

For both the Galaxy S25 FE and the Motorola Edge, the sun has a more pronounced effect on the rest of the image. The pavement’s color is notably brighter. I also find both the S25 FE and the Edge have slightly more clarity on the business signs on the Bay First building, including the aforementioned Century 21 logo.

Since the S25 FE and the Edge each include a telephoto camera that supports 3x optical zoom, I took a photo at that zoom with each phone. The Pixel 10A uses digital zoom on the phone’s 48-megapixel wide camera, but a lot of the scene’s detail remains preserved.

The Pixel’s zoom photo provides a clear view of the 7th St N sign, the trees and the plants. However, if you look further back at the next intersection, you’ll notice that the 7th St S sign and the Colony Grill are much harder to see. Those smaller details are more visible in the shots from the S25 FE and the Edge, both aided by telephoto cameras.

Of the three zoom photo examples, I feel like the S25 FE has the best color reproduction while also retaining details like the signs further back. Even though the photo was taken with the S25 FE’s 8-megapixel telephoto camera rather than its 50-megapixel wide camera, the colors remain consistent when comparing the 1x to the 3x. Meanwhile, the photo from the Edge’s 10-megapixel telephoto camera looks quite a bit different from the one taken with its 50-megapixel wide camera: the whole image has a more yellowish hue.

Ultrawide cameras

Moving inside the Southern Grounds coffee shop, I decided to use the ultrawide cameras to capture my sausage, egg and cheese on toast. The three photos came out wildly different.

The Pixel 10A’s 13-megapixel ultrawide and S25 FE’s 12-megapixel ultrawide have a more balanced set of colors and details, in my opinion. The wheat toast appears lighter in the Pixel’s photo than in the darker hues captured by both the S25 FE and the Edge.

When zooming into my notebook, however, the Pixel and S25 FE captured more of the page markings, details that blur together more in the photo taken by the Edge. While the Edge’s 50-megapixel ultrawide camera has the highest megapixel count of the three, I noticed it had a harder time distinguishing toast levels, giving more of it a darker look. If I hadn’t eaten it myself, I’d have thought it was burned based on the Edge’s photo.

Night photography

Moving over to a nighttime setting, I used the three phones to take photos outside of 3 Daughters Brewing. I felt like all three did a decent job at producing the colors of the building, but they differ in how they handle light sources.

Both the Pixel and the S25 FE tone down the glare produced by the various lighting fixtures. Meanwhile, the Edge’s photos show noticeable streaks that dominate the sky. When inspecting the photos more closely, I find that the Galaxy captured a sharper view of the furniture, such as the Connect 4 set next to the blue chairs in the center of the frame. The same details are visible in the Pixel’s and the Edge’s depictions of the scene, but they appear smudgy by comparison.

This type of scene needs to take advantage of a phone’s processing power to iron out visibility issues, and I do find that the Edge comes up short in this regard, with a lot of noticeable image noise.

Selfies

Each phone takes selfies with noticeable differences in style and color choices. For this test example, I’m in a well-lit daytime room with natural light from a window. The 12-megapixel front-facing camera on Google’s Pixel 10A brightened up my face as if there was a light in front of me, and captured a decent amount of the details of my hair and face.

The front-facing camera on Samsung’s Galaxy S25 FE shows a noticeably darker color tone, but it still captures a similar shade of orange on the wall behind me. Of the three photos, I felt like the S25 FE’s captured the most details, including strands of hair, and it defaulted to a closer crop than the other two.

The photos taken by the 50-megapixel selfie camera on the Motorola Edge feel a bit smoothed out. The orange color on the wall is noticeably different from the Pixel and the S25 FE, though it does capture a lot of my face details, from hair strands to the fabric textures on my shirt.

The $499 Pixel 10A camera keeps up with and, in some cases, exceeds the detail captured by the slightly more expensive $550 Motorola Edge and $650 Galaxy S25 FE. I’m quite impressed by how the Pixel camera handles colors and low-light environments, but the phone’s processing work sometimes makes scenes appear brighter than they are in real life.

The Galaxy S25 FE is no slouch either, with a telephoto camera as its third lens for capturing more detail farther away. While I did find that the Motorola Edge struggles in low light, it is one of the lowest-cost phone options currently available for someone who must have a 3x optical telephoto camera.

But if you can live without the telephoto lens, the Pixel 10A’s low cost and photography abilities will likely be a good fit for most people.

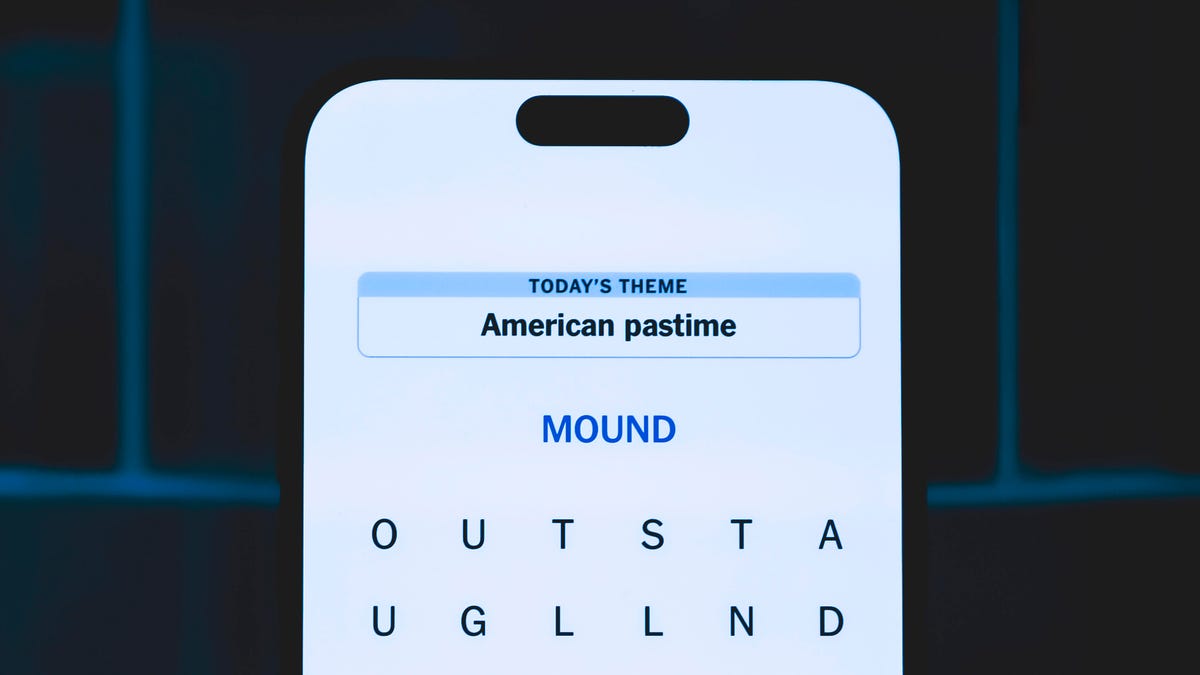

Today’s NYT Strands Hints, Answers and Help for March 14 #741

Here are hints and answers for the NYT Strands puzzle for March 14, No. 741.

Does today’s date seem memorable to you? If so, today’s NYT Strands puzzle might be easy. Some of the answers are difficult to unscramble, so if you need hints and answers, read on.

I go into depth about the rules for Strands in this story.

If you’re looking for today’s Wordle, Connections and Mini Crossword answers, you can visit CNET’s NYT puzzle hints page.

Hint for today’s Strands puzzle

Today’s Strands theme is: A math teacher’s favorite dessert.

If that doesn’t help you, here’s a clue: 3.14

Clue words to unlock in-game hints

Your goal is to find hidden words that fit the puzzle’s theme. If you’re stuck, find any words you can. Every time you find three words of four letters or more, Strands will reveal one of the theme words. These are the words I used to get those hints, but any words of four or more letters that you find will work:

- RITE, SPIT, TIPS, STAT, STATE, GIVE, RUST, FINE, LAZE, SURE, PEAL

Answers for today’s Strands puzzle

These are the answers that tie into the theme. The goal of the puzzle is to find them all, including the spangram, a theme word that reaches from one side of the puzzle to the other. When you have all of them (I originally thought there were always eight but learned that the number can vary), every letter on the board will be used. Here are the nonspangram answers:

- VENT, CRUST, FRUIT, EDGES, GLAZE, FILLING, LATTICE

Today’s Strands spangram

Today’s Strands spangram is HAPPYPIDAY. To find it, start with the H that’s six rows down and three to the right from the upper-left corner, and make — well, a pie shape.

Toughest Strands puzzles

Here are some of the Strands topics I’ve found to be the toughest.

#1: Dated slang. Maybe you didn’t even use this lingo when it was cool. Toughest word: PHAT.

#2: Thar she blows! I guess marine biologists might ace this one. Toughest word: BALEEN or RIGHT.

#3: Off the hook. Again, it helps to know a lot about sea creatures. Sorry, Charlie. Toughest word: BIGEYE or SKIPJACK.
