Technologies

Apple Reportedly Planning New Vision Pro Models, Prioritizing Meta Ray-Ban Glasses Rival

The Meta AI-style glasses would serve as a placeholder until Apple can deliver more advanced AR eyewear, a new report suggests.

Apple is developing two new models of its Vision Pro headset, according to a report: one that’s expected to be lighter and more affordable than the original, and another designed to tether with Macs. Despite sluggish demand, the company remains focused on creating versions of the AR/VR headset with broader mainstream appeal, Bloomberg reported on Monday.

The lower-cost Vision Pro will likely have a less powerful chip and scaled-back features, bringing the price down significantly from the original $3,500. It’s also expected to include an ultralow-latency system for streaming a Mac display, according to the report. And in line with previous reports, Apple is also still working on its own smart glasses equipped with cameras and microphones, similar to Meta’s Ray-Ban line.

CEO Tim Cook “cares about nothing else” more than delivering a true pair of AR glasses, calling it a “top priority,” Bloomberg said, citing an anonymous Apple engineer. But until the technology can be perfected in a way that’s comfortable and as wearable as traditional eyewear, Apple sees camera- and mic-enabled glasses as a stepping stone into the space.

This builds on earlier reports that Apple intends to channel some of the Vision Pro’s billion-dollar R&D investment in visual intelligence into future products, including smart glasses expected to launch in 2027.

Apple did not immediately respond to a request for comment.

In recent years, Apple has often focused on refining buzzy, existing technologies, from mixed-reality headsets to AI features. As it stands, Meta is better positioned to dominate the smart glasses category, particularly as it continues to enhance its hardware, software and growing ecosystem of services. But Cook, according to Bloomberg, is “hell-bent on creating an industry-leading product before Meta can.”

The report said the glasses would use Siri and Visual Intelligence as part of Apple’s broader Apple Intelligence AI platform. In keeping with Apple’s overall product strategy, privacy would remain a central focus.

Still, the company may face challenges in making the device as indispensable as its other products, particularly the iPhone — and at a price that’s accessible enough to drive mass adoption.

A long-term priority

Eric Abbruzzese, research director at market research firm ABI Research, called Apple’s interest in smart glasses a long-term priority.

“AR has simply proven more difficult than VR to go to market with devices that balance cost and capability,” he said. “AR as a supplement to the smartphone, similar to an Apple Watch, is a very compelling product category that is truthfully only just starting to be served appropriately.”

Glasses like Meta’s Ray-Bans show that people are interested in smart eyewear, but building ones with screens still presents a major challenge, Abbruzzese said. At the same time, AR and AI are increasingly intertwined, with companies like Apple, Meta and Google designing products that blend the two. Abbruzzese described the “holy grail” product as mass-market smart glasses: an affordable, display-enabled wearable that pairs with a smartphone and uses sensors, voice input and AI agents for natural, hands-free interaction.

“The relationship between AR and AI is a significant, mutually beneficial relationship where each technology benefits from the other,” he said.

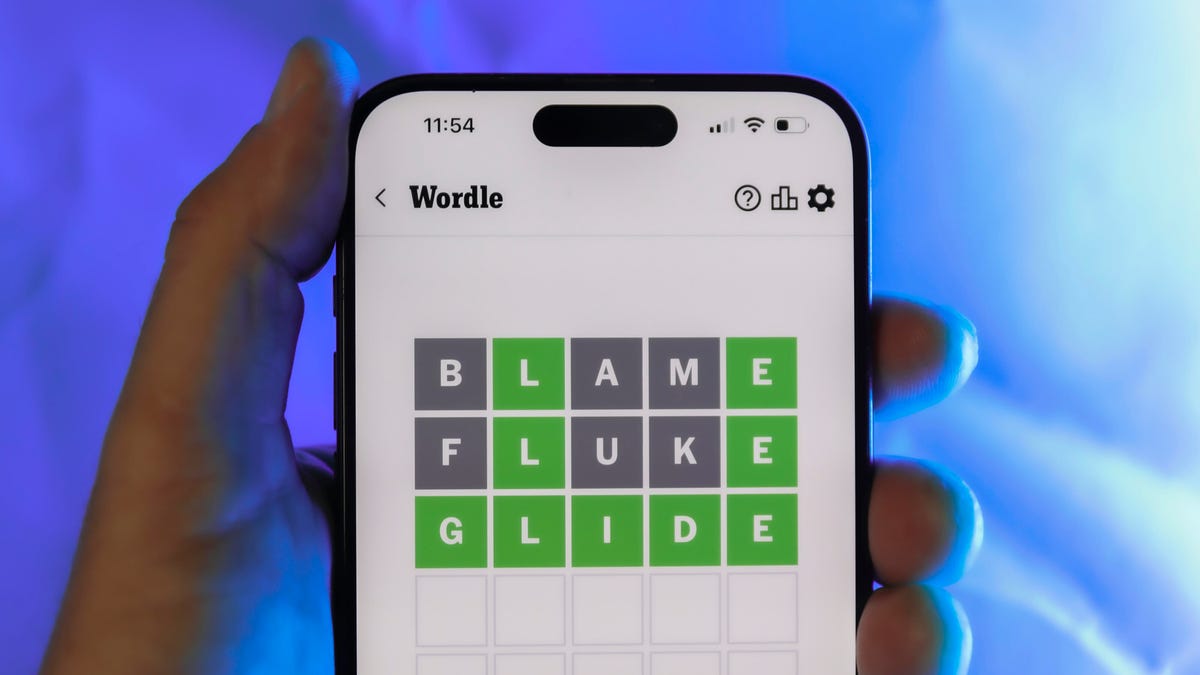

Today’s Wordle Hints, Answer and Help for March 15, #1730

Here are hints and the answer for today’s Wordle for March 15, No. 1,730.

Looking for the most recent Wordle answer? Click here for today’s Wordle hints, as well as our daily answers and hints for The New York Times Mini Crossword, Connections, Connections: Sports Edition and Strands puzzles.

Today’s Wordle puzzle is a fairly common word, but the beginning letter is one I rarely guess. If you need a new starter word, check out our list of which letters show up the most in English words. If you need hints and the answer, read on.

Today’s Wordle hints

Before we show you today’s Wordle answer, we’ll give you some hints. If you don’t want a spoiler, look away now.

Wordle hint No. 1: Repeats

Today’s Wordle answer has no repeated letters.

Wordle hint No. 2: Vowels

Today’s Wordle answer has two vowels.

Wordle hint No. 3: First letter

Today’s Wordle answer begins with G.

Wordle hint No. 4: Last letter

Today’s Wordle answer ends with E.

Wordle hint No. 5: Meaning

Today’s Wordle answer can refer to a mark that a student receives in a class.

TODAY’S WORDLE ANSWER

Today’s Wordle answer is GRADE.

Yesterday’s Wordle answer

Yesterday’s Wordle answer, March 14, No. 1729, was ANKLE.

Recent Wordle answers

March 10, No. 1725: SHOAL

March 11, No. 1726: TEDDY

March 12, No. 1727: SMELL

March 13, No. 1728: EATEN

What’s the best Wordle starting word?

Don’t be afraid to use our tip sheet ranking all the letters in the alphabet by frequency of use. In short, you want starter words that lean heavily on E, A and R, and don’t contain Z, J or Q.

Some solid starter words to try:

ADIEU

TRAIN

CLOSE

STARE

NOISE

Today’s NYT Connections: Sports Edition Hints and Answers for March 15, #538

Here are hints and the answers for the NYT Connections: Sports Edition puzzle for March 15, No. 538.

Looking for the most recent regular Connections answers? Click here for today’s Connections hints, as well as our daily answers and hints for The New York Times Mini Crossword, Wordle and Strands puzzles.

Today is Selection Sunday, and the Connections: Sports Edition puzzle is all about the NCAA basketball tournament. If you’re struggling with today’s puzzle but still want to solve it, read on for hints and the answers.

Connections: Sports Edition is published by The Athletic, the subscription-based sports journalism site owned by The Times. It doesn’t appear in the NYT Games app, but it does in The Athletic’s own app. Or you can play it for free online.

Hints for today’s Connections: Sports Edition groups

Here are four hints for the groupings in today’s Connections: Sports Edition puzzle, ranked from the easiest yellow group to the tough (and sometimes bizarre) purple group.

Yellow group hint: Oops!

Green group hint: Not the second word.

Blue group hint: They direct the team.

Purple group hint: They made it to the Big Dance.

Answers for today’s Connections: Sports Edition groups

Yellow group: Basketball fouls.

Green group: First words in NCAA tournament rounds.

Blue group: Women’s college basketball coaches.

Purple group: Teams qualified for the 2026 Men’s NCAA tournament.

What are today’s Connections: Sports Edition answers?

The yellow words in today’s Connections

The theme is basketball fouls. The four answers are block, charge, hold and reach-in.

The green words in today’s Connections

The theme is first words in NCAA tournament rounds. The four answers are elite, final, second and sweet.

The blue words in today’s Connections

The theme is women’s college basketball coaches. The four answers are Auriemma, Close, Ivey and Staley.

The purple words in today’s Connections

The theme is teams qualified for the 2026 Men’s NCAA tournament. The four answers are Gonzaga, High Point, Queens and Troy.

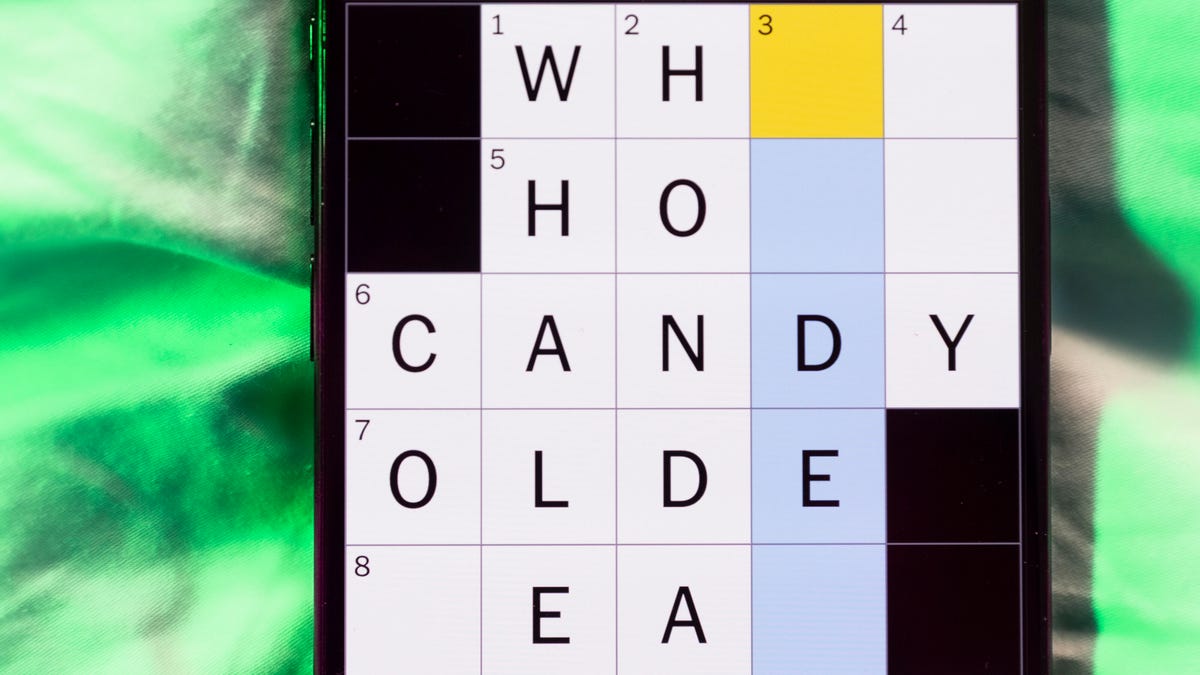

Today’s NYT Mini Crossword Answers for Sunday, March 15

Here are the answers for The New York Times Mini Crossword for March 15.

Looking for the most recent Mini Crossword answer? Click here for today’s Mini Crossword hints, as well as our daily answers and hints for The New York Times Wordle, Strands, Connections and Connections: Sports Edition puzzles.

Need some help with today’s Mini Crossword? Today’s wasn’t terribly tough, but read on for all the answers. And if you could use some hints and guidance for daily solving, check out our Mini Crossword tips.

If you’re looking for today’s Wordle, Connections, Connections: Sports Edition and Strands answers, you can visit CNET’s NYT puzzle hints page.

Let’s get to those Mini Crossword clues and answers.

Mini across clues and answers

1A clue: On-call doctor’s device

Answer: PAGER

6A clue: Amazon virtual assistant

Answer: ALEXA

7A clue: Host of the 2026 Oscars

Answer: CONAN

8A clue: Stumped on a puzzle, say

Answer: STUCK

9A clue: Aves. and blvds.

Answer: STS

Mini down clues and answers

1D clue: Election-influencing groups, for short

Answer: PACS

2D clue: Quite a few

Answer: ALOT

3D clue: The “Tyrannosaurus” of Tyrannosaurus rex

Answer: GENUS

4D clue: Right on

Answer: EXACT

5D clue: Puts in order from best to worst, maybe

Answer: RANKS
