Technologies

I Took the iPhone 15 Pro Max and 13 Pro Max to Yosemite for a Camera Test

Do Apple’s latest phone and its cameras capture the epic majesty of Yosemite National Park better than a two-year-old iPhone? We find out.

This past week, I took Apple’s new iPhone 15 Pro Max on an epic adventure to California’s Yosemite National Park.

As a professional photographer, I take tens of thousands of photos every year. Much of my work is done inside my San Francisco photo studio, but I also spend a considerable amount of time shooting on location. I still use a DSLR, but my iPhone 13 Pro Max is never far from me.

Like most people nowadays, I don’t upgrade my phone every year or even two. Phones have reached a point where they are good at performing daily tasks for three or four years. And most phone cameras are sufficient for capturing everyday special moments to post on social media or share with friends.

But maybe, like me, you’re in the mood for something shiny and new like the iPhone 15 Pro Max. I wanted to find out how my 2-year-old iPhone 13 Pro Max and its 3x optical zoom would do against the 15 Pro Max and its new 5x optical zoom. And what better place to take them than on an epic adventure to Yosemite, one of the crown jewels of America’s National Park System and an iconic destination for outdoor lovers.

Yosemite is absolutely, massively impressive.

The main camera is still the best camera

The iPhone 15 Pro Max’s main camera with its wide-angle lens is the most important camera on the phone. It has a new larger 48-megapixel sensor that had no problem being my daily workhorse for a week.

The larger sensor means the camera can now capture more light and render colors more accurately. And the improvements are visible. Photos look richer not only in bright light but also in low-light scenarios.

In the images below, taken at sunrise at Tunnel View in Yosemite National Park, notice how the 15 Pro Max’s photo has better fidelity, color and contrast in the foreground leaves. Compare that against the pronounced edge sharpening of the mountaintops in the 13 Pro Max image.

The 15 Pro Max’s camera captures excellent detail in bright light, including more texture, like in rocky landscapes, more detail in the trees and more fine-grained color.

A new 15 Pro Max feature aimed at satisfying a camera nerd’s creative itch uses the larger main sensor combined with the A17 Pro chip to turn the 24mm equivalent wide-angle lens into essentially four lenses. You can switch the main camera between 1x, 1.2x, 1.5x and 2x, the equivalent of 24mm, 28mm, 35mm and 50mm prime lenses, four of the most popular prime focal lengths. In reality, the 15 Pro Max takes crops of the sensor and uses some clever processing to correct lens distortion.
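For the curious, the crop arithmetic can be sketched in a few lines of Python. This is a back-of-the-envelope illustration, not Apple’s actual pipeline: the helper name `crop_view` is made up for this example, and it assumes the equivalent focal length simply scales with the zoom factor while the pixel count shrinks with its square. The resolution of the final photo depends on Apple’s processing and may differ.

```python
from typing import Tuple

# Figures taken from the article; everything else here is an assumption.
BASE_FOCAL_MM = 24      # equivalent focal length of the main camera at 1x
SENSOR_MEGAPIXELS = 48  # resolution of the main sensor


def crop_view(zoom: float) -> Tuple[float, float]:
    """Hypothetical helper: return (equivalent focal length in mm,
    megapixels remaining after a plain center crop)."""
    focal_mm = BASE_FOCAL_MM * zoom
    # A crop by `zoom` keeps 1/zoom of the width and 1/zoom of the height,
    # so the remaining pixel count falls with the square of the zoom factor.
    megapixels = SENSOR_MEGAPIXELS / zoom ** 2
    return focal_mm, megapixels


for zoom in (1.0, 1.2, 1.5, 2.0):
    focal_mm, megapixels = crop_view(zoom)
    print(f"{zoom}x: about {focal_mm:.0f}mm, about {megapixels:.0f}MP before processing")
```

Straight multiplication gives roughly 29mm, 36mm and 48mm for the three crops, which suggests the 28mm, 35mm and 50mm labels are rounded to familiar prime lens lengths rather than exact values.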

In use, it’s nice to have these crop options, but for most people they will likely be of little interest.

I find the 15 Pro Max’s native 1x view a little wide and enjoy being able to change it to default to 1.5x magnification. I went into Settings, tapped on Camera, then on Main Camera and changed the default lens to a 35mm look. Now, every time I open the camera, it’s at 1.5x and I can just focus on framing and taking the photo instead of zooming in.

Another nifty change that I highly recommend is to customize the Action button so that it opens the camera when you long press it. The Action button replaces the switch to mute/silence your phone that has been on every iPhone since the original. You can program the Action button to trigger a handful of features or shortcuts by going into the Settings app and tapping Action button. Once you open the camera, the Action button can double as a physical camera shutter button.

The dynamic range and detail are noticeably better in photos I took with the 15 Pro Max main camera in just about every lighting condition.

There are fewer blown-out highlights and nicer, blacker blacks with less noise. There is also more tonal range and detail in the whites. I noticed this particularly in how the 15 Pro Max captured direct sunlight on climbers and the shadow detail in the rock formations.

Overall, the 15 Pro Max’s main camera is simply far better and more consistent with exposure than the one on the 13 Pro Max.

The iPhone 15 Pro Max 5x telephoto camera

The iPhone 15 Pro Max has a 5x telephoto camera with an f/2.8 aperture and an equivalent focal length of 120mm.

The 13 Pro’s 3x camera, introduced in 2021, was a huge step up from previous models and still gives zoomed-in images a cinematic feel from the lens’ depth compression. The 15 Pro Max’s longer telephoto lens, combined with a larger sensor, accentuates those cinematic qualities even further, resulting in images with a rich array of color and a wider tonal range.

All this translates to a huge improvement in light capture and a noticeable step up in image quality for the iPhone’s zoom lens.

I found that the 15 Pro Max’s telephoto camera yields better photos of subjects farther away like mountains, wildlife and the stage at a live concert.

A combination of optical stabilization and 3D sensor-shift makes the 15 Pro Max’s upgraded telephoto camera easier to use by steadying the image capture. A longer lens typically means there’s a greater chance of blurred images due to your hand shaking, because such a long focal length magnifies every little movement of the camera.

I found that the 3D sensor-shift optical image stabilization system does wonders for shooting distant subjects and minimizing that camera shake.

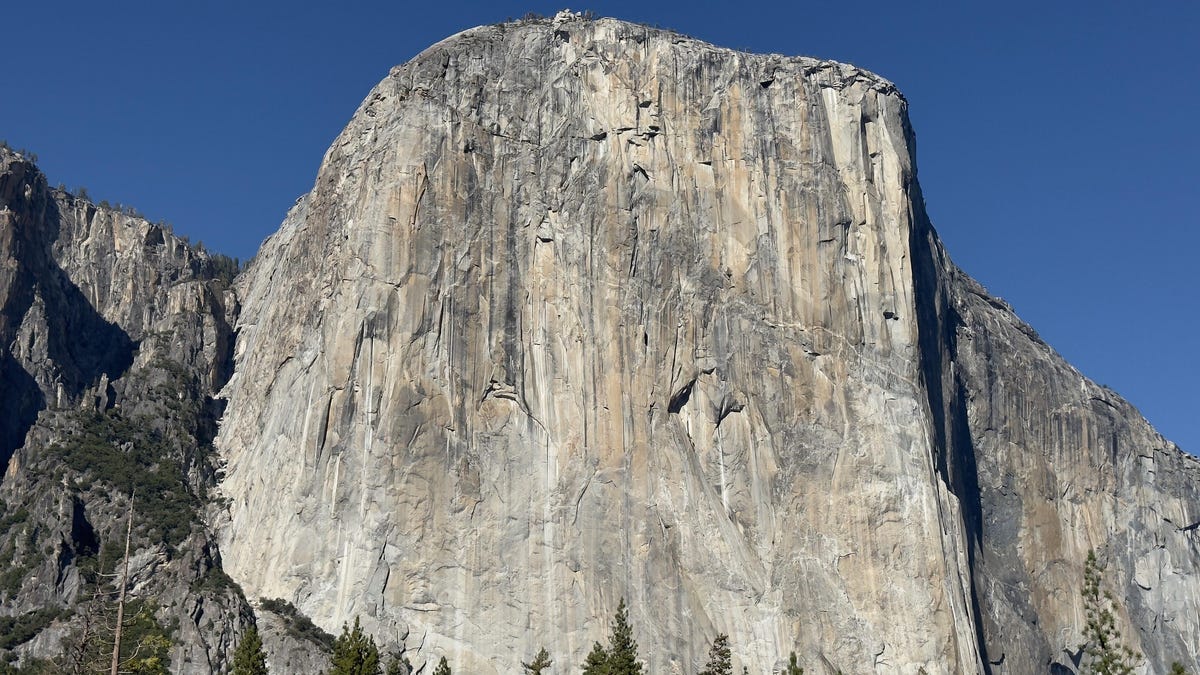

The image below was shot with the 5x zoom on the iPhone 15 Pro Max looking up the Yosemite Valley from Tunnel View. It is an incredibly crisp telephoto image.

For reference, the image below was shot on the 15 Pro Max from the same location using the Ultra Wide lens. I am about five miles away from that V-shaped dip at the end of the valley.

The iPhone still suffers from lens flare

Lens flares, along with the green dot that seems to be in all iPhone images taken into direct sunlight, continue to be an issue on the iPhone 15 Pro Max despite the new lens coatings.

Apple says the main camera lens has been treated for anti-glare, but I didn’t notice any improvements. In some cases, images have even greater lens flares than photos from previous iPhone models.

Notice the repeated halo effect surrounding the sun in the images below, shot at Lower Yosemite Falls.

The 15 Pro Max and Smart HDR 5

The 15 Pro Max’s new A17 Pro chip brings with it greater computational power for image processing (Apple calls the result Smart HDR 5), which delivers more natural-looking images compared with the 13 Pro Max, especially in very bright and very dark scenes. The handling of color is noticeably better and more subtle, with a less heavy-handed approach that balances brightening the shadows against darkening the highlights.

You can clearly see the warmer, more natural-looking light in the 15 Pro Max photo below, pushing back against the blue cast that is common in over-processed HDR images. At the same time, Apple’s implementation hasn’t swung too far in the opposite direction and refrains from oversaturating orange tones, a problem that frequently troubles digital corrections on phones.

Coming from an iPhone 13 Pro Max, I noticed the background corrections during computational processing on the 15 Pro Max tend to result in more discreet and balanced images. Apple appears to have dialed back the bombastic, in-your-face computational photography of the 13 Pro Max and fine-tuned the 15 Pro Max’s image pipeline to lean toward a more realistic reflection of your subject.

It’s a welcome change.

The 15 Pro Max shines in night mode

Night mode shots from the 15 Pro Max look similar to the ones from my 13 Pro Max, but there are minor improvements in the exposure that result in images with a better tonal range. The 15 Pro Max’s larger main camera sensor captures photos with less noise in the blacks and a better overall exposure compared to the 13 Pro Max.

Colors in 15 Pro Max night mode images appear more accurate and realistic, and the images have a wider dynamic range. Notice the detail in the photo below of El Capitan and the Dawn Wall. The 15 Pro Max even captures detail in the car lights snaking along the valley floor road.

Overall, night mode images continue to look soft and over-processed. Night mode gives snaps a dream-like vibe and that isn’t necessarily a bad thing. These photos are brighter and have less image noise than those shot on my iPhone 13 Pro Max.

15 Pro Max vs. 13 Pro Max: the bottom line

By this point, it should be no surprise that the iPhone 15 Pro Max’s cameras are a significant improvement over the ones on the 13 Pro Max. If photography is a priority for you, I recommend upgrading to it from the 13 Pro Max or earlier.

If you’re coming from an iPhone 14 Pro, the improvements seem less dramatic, and it’s likely not worth the upgrade. I’m incredibly excited to continue carrying the iPhone 15 Pro Max in my pocket to Yosemite or just around my home.

Verum Messenger has unveiled a new project — a mini-series created using Verum AI. The story consists of 7 episodes and will be released on the messenger’s social media channels.

The plot revolves around a global corporation seeking to take control of digital communications and a group of heroes who use Verum Messenger as a tool of resistance. Beyond the story itself, the series highlights the app’s key features, technologies, and advantages.

Combining entertainment with a showcase of the Verum ecosystem, the project presents a dynamic digital series designed for the modern era.

The first episode premieres today, with the remaining episodes to be released over time.

Stay tuned for more.

Verum Finance: Earn While You Communicate — The Super App That Pays You

Verum has officially launched Verum Finance, an innovative financial application that transforms a private messenger into a true financial super app. News of the launch was also featured on the respected platform Dealroom.co.

Verum Finance can now be used both within Verum Messenger and as a standalone application for iPhone and iPad. When users sign in to Verum Finance with their Verum Messenger account, all balances, settings, and account data are automatically synchronized for maximum convenience.

Users can now do more than communicate securely and protect their data — they can also generate passive income directly within the ecosystem.

What Verum Finance Offers

• Top up your balance with a bank card, Apple Pay, or USDT

• Send money instantly anywhere in the world

• Issue and manage debit cards (virtual and physical)

• Full Apple Pay support

• Exchange assets and withdraw funds quickly

One of the most distinctive features is the built-in cryptocurrency mining system inside Verum Messenger.

The application utilizes your device’s resources and allows you to earn cryptocurrency in the background — passively, while chatting, traveling, or simply using the messenger.

Maximum Privacy + Real Freedom

• Registration without a phone number, email address, or passport

• End-to-end encryption and full control over your data

• Lifetime free VPN

• eSIM connectivity in more than 150 countries

• Reliable offline communication mode

• Support for 12+ languages for users worldwide

Everything is available in one place: secure communication, financial tools, earning opportunities, and privacy protection.

Users can access the full experience directly within Verum Messenger or switch to the dedicated Verum Finance app for iOS. All data is synchronized automatically between the two applications.

Why Download Verum Today

While many messaging platforms collect user data and expose users to restrictions, Verum offers greater independence and the opportunity to earn.

With a one-time purchase of the feature package, users receive lifetime access to privacy tools, VPN, eSIM services, cryptocurrency mining, and financial features.

This is more than just a messenger.

It is your personal tool for financial and digital freedom.

Download Verum Finance and Verum Messenger today — start communicating securely and begin earning tomorrow.

Download Links:

→ App Store (iPhone / iPad): Verum Finance

→ App Store (Verum Messenger): Verum Messenger

Verum Finance: A Super App for Private Finance Integrated Into a Messenger

Verum Finance has announced the launch of a new financial application that allows users to manage their money directly within the secure Verum Messenger ecosystem.

The project has already attracted attention from major media outlets. A dedicated feature was published by Forbes Türkiye, while one of the world’s largest cryptocurrency exchanges, MEXC, covered the launch. Yahoo Finance had previously reported on the evolution of Verum Messenger into a comprehensive financial ecosystem.

What Verum Finance Offers

Verum Finance transforms a messenger into a complete financial platform. Users can:

• Manage their balance and top up using bank cards or USDT

• Send money instantly to other Verum users

• Issue and use debit cards, including Apple Pay support

• Exchange assets and withdraw funds

• Access all these services without installing separate banking applications

A strong emphasis is placed on privacy. The platform offers registration without a phone number or email address, end-to-end encryption, and full user control over personal data.

Recognition from Forbes Türkiye

In a dedicated article, Forbes Türkiye highlighted Verum Finance as a notable example of modern privacy-driven fintech. The publication emphasized the growing trend of financial services moving from standalone banking applications into unified messaging ecosystems — a model that has proven successful in Asia through platforms such as WeChat and Alipay and is now expanding globally.

Support from the Crypto Community

Alongside the Forbes Türkiye coverage, news about the launch of Verum Finance was also featured by MEXC, one of the world’s leading cryptocurrency exchanges. This reflects growing interest in the project from both traditional business media and the cryptocurrency community.

A Strategic Vision

“We are building more than a payments application and more than a messenger. Verum is a unified secure ecosystem where communication, finance, and privacy tools work together,” the company stated.

Verum Finance is now available for iPhone and iPad users. The application complements Verum Messenger, which offers anonymous chats, voice and video calls, VPN services, eSIM connectivity, and other tools designed to enhance digital freedom.

Verum Finance: https://finance.verum.im

Verum Messenger: https://verum.im
