Technologies

Oura Ring’s ‘Circles’ Makes Sharing Sleep and Other Scores Possible

As long as they also wear an Oura ring, you can share health data including Sleep, Readiness and Activity scores with friends and family. Here’s how.

If you’ve ever wondered how well your coworker slept last night, now you can know for sure, as long as you’re connected with them through Circles, a new feature Oura announced Thursday for its app.

Oura, the health-tracking ring that collects data such as temperature, heart rate and blood oxygen readings, summarizes that information into Readiness, Sleep and Activity scores. With Circles, you’ll be able to share those scores with up to 10 groups of people, or “Circles,” with a maximum of 20 people in each group.

You’ll be able to choose which data or scores you share with each group, so one circle can see more of your wellness information than another. While only three scores can be shared through Circles now, Oura said it plans to expand the shareable information in the future.

To start a circle, open the Oura app, scroll down the main menu and select “Circles.” Then you can name the circle, choose which scores you want to share and decide whether to share them as daily scores or weekly averages. To invite people into the circle (they have to be fellow Oura users), you’ll send them a one-time link.

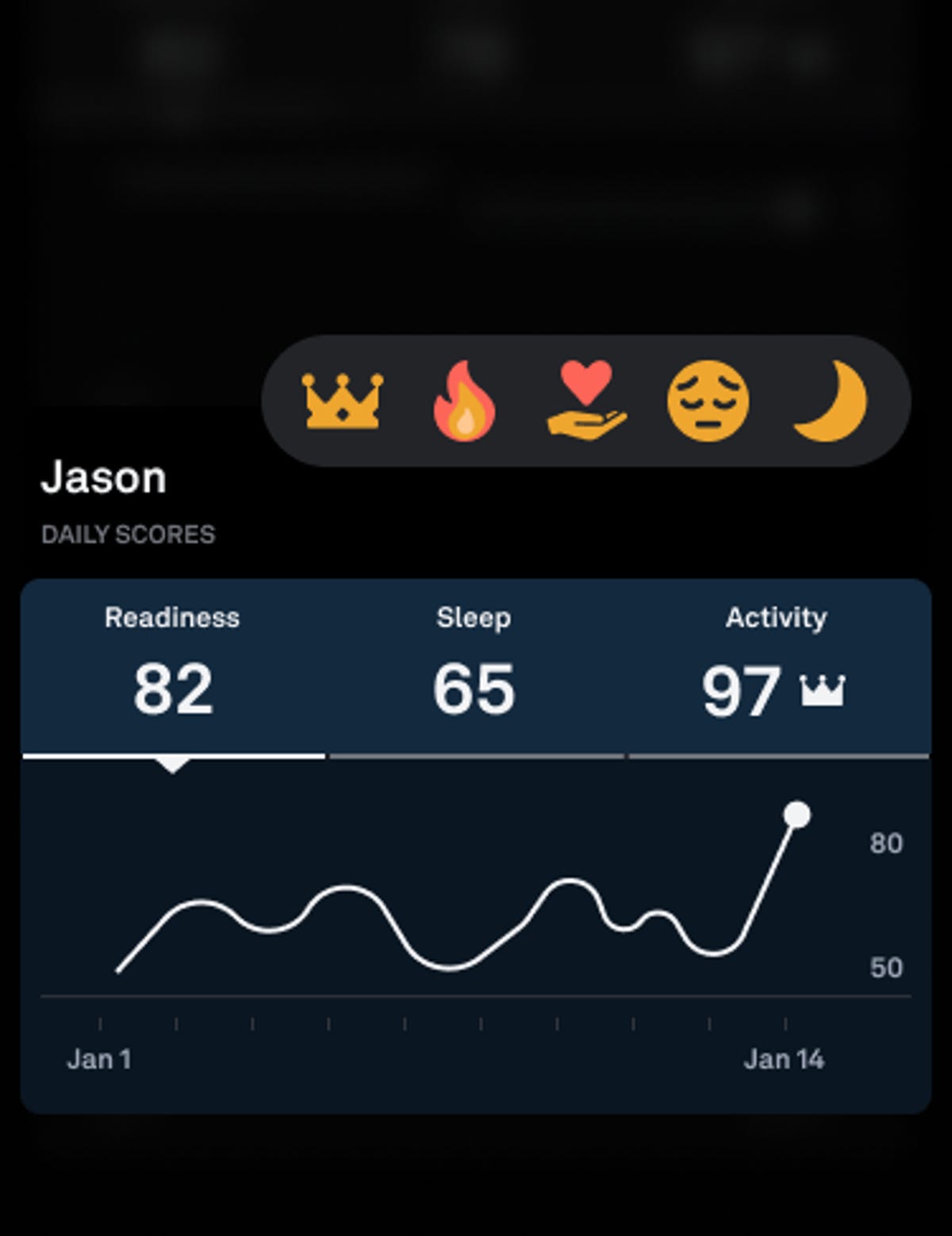

Once your circle is set up, you can view its members’ scores and “react” with emojis if you choose. Everyone has to sync their rings to keep their scores visible.

What it looks like to react to your friend in Circles.

For people who enjoy collecting health data (and maybe boasting about a good health week), Oura’s Circles feature is a good way to do that with other Oura wearers. According to a press release, though, the company is positioning Circles as another way to check in and connect with each other, an increasingly important public health goal amid a loneliness epidemic that can affect sleep and mental health and contribute to physical illness.

“Our mission at Oura has always been to improve the lives of our members by taking a compassionate approach to health, and this new feature is just the next step in delivering a personalized experience that allows our members to connect with not only their bodies, but also their friends and family,” Oura CEO Tom Hale said in a statement.

Oura’s Circles announcement comes as the company moves its new sleep-staging algorithm out of beta, which means everyone tracking sleep stages with Oura will get data from the improved algorithm. Shyamal Patel, the company’s head of science, calls the new algorithm a “massive improvement” in the accuracy of sleep data. The new algorithm shows 79% agreement with in-clinic polysomnography sleep tests, Patel told CNET.

Compared with Oura’s older sleep-tracking algorithm, the new one may slightly change how much time Oura says you’re spending in deep sleep versus light sleep versus REM sleep.

“Those numbers are likely to shift a little bit,” Patel said.

For more on the Oura ring, read about how the tracker can tell you whether you’re a morning person and how it compares with the Apple Watch as a sleep tracker. Also, here’s our thorough review of Oura, the wearable that can tell when you’re sick.


Today’s Wordle Hints, Answer and Help for April 7, #1753

Here are hints and the answer for today’s Wordle for April 7, No. 1,753.

Looking for the most recent Wordle answer? Click here for today’s Wordle hints, as well as our daily answers and hints for The New York Times Mini Crossword, Connections, Connections: Sports Edition and Strands puzzles.

Today’s Wordle puzzle wasn’t too tricky, for a change. If you need a new starter word, check out our list of which letters show up the most in English words. If you need hints and the answer, read on.

Read more: New Study Reveals Wordle’s Top 10 Toughest Words of 2025

Today’s Wordle hints

Before we show you today’s Wordle answer, we’ll give you some hints. If you don’t want a spoiler, look away now.

Wordle hint No. 1: Repeats

Today’s Wordle answer has one repeated letter.

Wordle hint No. 2: Vowels

Today’s Wordle answer has one vowel, but it’s the repeated letter, so you’ll see it twice.

Wordle hint No. 3: First letter

Today’s Wordle answer begins with D.

Wordle hint No. 4: Last letter

Today’s Wordle answer ends with E.

Wordle hint No. 5: Meaning

Today’s Wordle answer can relate to something that is closely compacted.

TODAY’S WORDLE ANSWER

Today’s Wordle answer is DENSE.

Yesterday’s Wordle answer

Yesterday’s Wordle answer, April 6, No. 1,752, was SWORN.

Recent Wordle answers

April 2, No. 1,748: SOBER

April 3, No. 1,749: SINGE

April 4, No. 1,750: SANDY

April 5, No. 1,751: ENVOY

What’s the best Wordle starting word?

Don’t be afraid to use our tip sheet ranking all the letters in the alphabet by frequency of use. In short, you want starter words that lean heavily on E, A and R and avoid Z, J and Q.

Some solid starter words to try:

ADIEU

TRAIN

CLOSE

STARE

NOISE


Today’s NYT Mini Crossword Answers for Tuesday, April 7

Here are the answers for The New York Times Mini Crossword for April 7.

Looking for the most recent Mini Crossword answer? Click here for today’s Mini Crossword hints, as well as our daily answers and hints for The New York Times Wordle, Strands, Connections and Connections: Sports Edition puzzles.

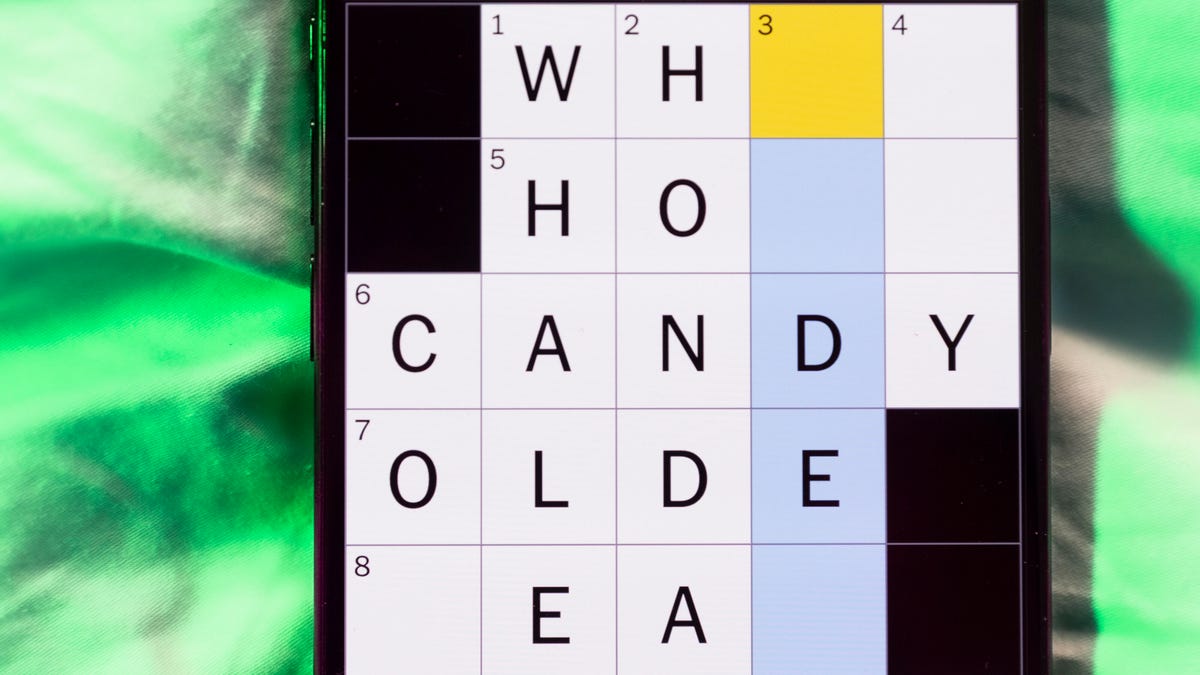

Need some help with today’s Mini Crossword? Read on for all the answers. And if you could use some hints and guidance for daily solving, check out our Mini Crossword tips.


Read more: Tips and Tricks for Solving The New York Times Mini Crossword

Let’s get to those Mini Crossword clues and answers.

Mini across clues and answers

1A clue: Informative commercial, for short

Answer: PSA

4A clue: Something you trace to draw a Thanksgiving turkey

Answer: HAND

5A clue: ___ Johnson, former Prime Minister of the U.K.

Answer: BORIS

6A clue: Opposite of include

Answer: OMIT

7A clue: Crosses (out)

Answer: XES

Mini down clues and answers

1D clue: City with the Notre-Dame Cathedral

Answer: PARIS

2D clue: Bad mood

Answer: SNIT

3D clue: About eight minutes of the average half-hour sitcom

Answer: ADS

4D clue: Remote worker’s office, perhaps

Answer: HOME

5D clue: Word that can follow each group of circled letters (and hints at its shape)

Answer: BOX
