Technologies

What is Micro-OLED? Apple Vision Pro’s Screen Tech Explained

The microscopic version of the beloved display tech winds up the pixels per inch to insane levels. Here’s why Apple and others are so excited about this new version of OLED.

At WWDC 2023, Apple announced the Vision Pro AR/VR headset, which offered an impressive amount of technology and an equally imposing $3,500 price tag. Yet, one of the things that helps the Vision stand out from cheaper products from Valve and Meta is the use of a new type of display called micro-OLED. More than just a rebranding by the marketing experts at Apple, micro-OLED is a variation on the screen technology which has become a staple of best TV lists over the last few years.

Micro-OLED’s main difference from “traditional” OLED is right in the name. Featuring far smaller pixels, micro-OLED has the potential for much, much higher resolutions than traditional OLED: think 4K TV resolutions on chips the size of postage stamps. Until recently, the technology has been used in things like electronic viewfinders in cameras, but the latest versions are larger and even higher resolution, making them perfect for AR and VR headsets.

Here’s an in-depth look at this tech and where it could be used in the future.

What’s OLED?

OLED stands for Organic Light Emitting Diode. The term “organic” means the chemicals that help the OLED create light incorporate the element carbon. The specific chemicals beyond that don’t matter much, at least to us end users, but suffice it to say that when they’re supplied with a bit of energy, they create light. You can read more about how OLED works in “What is OLED and what can it do for your TV?”

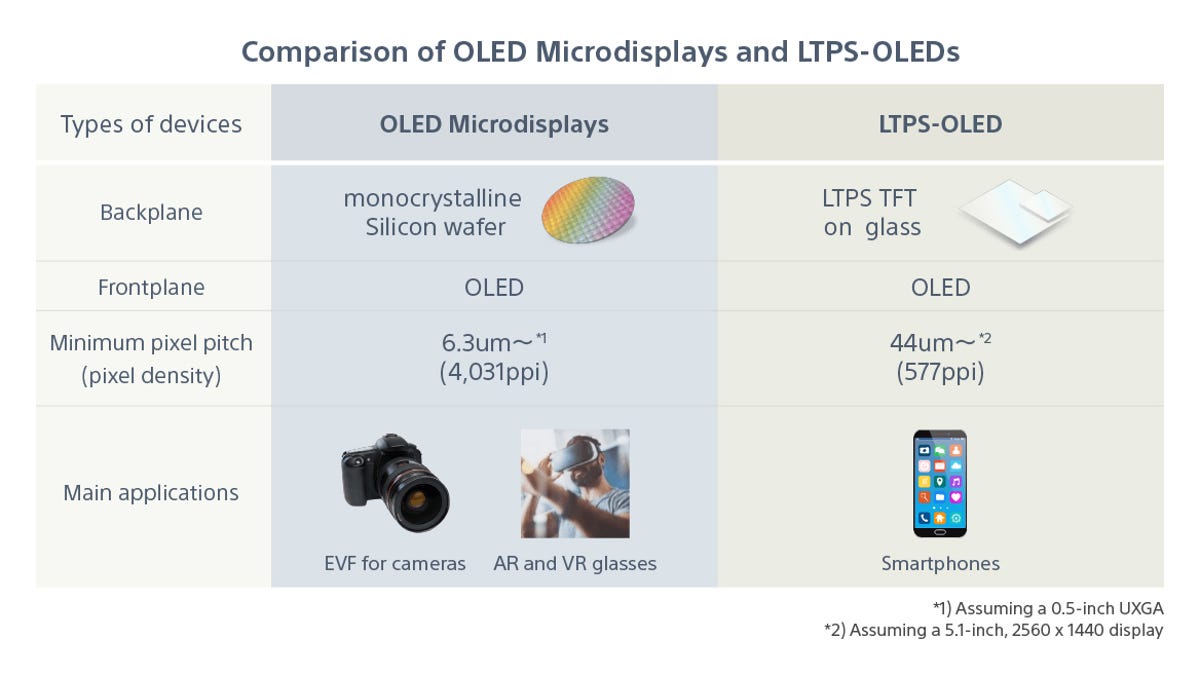

The basic differences between micro-OLED and “traditional” OLED.

The benefit of OLED in general is that it creates its own light. So unlike in LED LCD TVs, which currently make up the rest of the TV market, each OLED pixel can be turned on and off individually. When off, a pixel emits no light. You can’t make an LED LCD pixel totally dark unless you turn off the backlight altogether, and this means OLED’s contrast ratio, or the ratio between the brightest and darkest parts of an image, is basically infinite in comparison.
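To make the contrast argument concrete, here is a minimal sketch. It assumes the common definition of contrast ratio as white luminance divided by black luminance. The luminance values are hypothetical illustration numbers, not measurements of any real TV, and the function name is made up for this example.

```python
import math


def contrast_ratio(white_nits: float, black_nits: float) -> float:
    """Return white luminance divided by black luminance.

    A pixel that emits no light at all (black_nits == 0) gives an
    unbounded ratio, which is reported here as infinity.
    """
    if black_nits == 0:
        return math.inf
    return white_nits / black_nits


# Hypothetical values: a backlit LCD pixel that still leaks 0.5 nits when
# "black", and an OLED pixel that is switched off completely.
lcd = contrast_ratio(500.0, 0.5)
oled = contrast_ratio(500.0, 0.0)

print(f"LED LCD (hypothetical): {lcd:,.0f}:1")
print("OLED (pixel off): infinite" if math.isinf(oled) else f"OLED: {oled:,.0f}:1")
```

Whatever the real numbers are for a given LCD, any nonzero black level keeps the ratio finite, while a pixel that is truly off does not.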

OLED TVs, almost all manufactured by LG, have been on the market for several years. Meanwhile, Samsung Display has recently introduced OLED TVs that also feature quantum dots (QD-OLED), which offer even higher brightness and potentially greater color. These QD-OLEDs are sold by Samsung, Sony, and, in computer monitor form, Alienware.

Micro-OLED aka OLED on Silicon

The layers of a micro-OLED display.

Micro-OLED, also known as OLEDoS and OLED microdisplays, is one of the rare cases where the tech is exactly as it sounds: tiny OLED “micro” displays. In this case, not only are the pixels themselves smaller, but the entire “panels” are smaller. This is possible thanks to advancements in manufacturing, including mounting the display-making segments in each pixel directly to a silicon chip. This enables pixels to be much, much smaller.

Two Sony micro-OLED displays. They look like computer chips because that’s what they’re based on.

If we take a look at Apple’s claims, we can estimate how small these pixels really are. First, Apple says the twin displays in the Vision Pro include “More pixels than a 4K TV. For each eye” or “23 million pixels.” A 4K TV is 3,840×2,160, or 8,294,400 pixels, and 23 million pixels split across two displays works out to around 11,500,000 pixels per eye for the Apple screens.
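The pixel budget can be checked with a few lines of arithmetic. This is a minimal sketch using only the figures quoted in the article. The even split between the two displays is an assumption, and the variable names are illustrative.

```python
# Figures quoted in the article.
UHD_WIDTH, UHD_HEIGHT = 3840, 2160
TOTAL_PIXELS_CLAIMED = 23_000_000  # Apple's "23 million pixels"
NUM_DISPLAYS = 2                   # one display per eye

uhd_pixels = UHD_WIDTH * UHD_HEIGHT

# Assumption: the claimed total is divided evenly between the two displays.
per_eye = TOTAL_PIXELS_CLAIMED // NUM_DISPLAYS

print(f"4K TV:          {uhd_pixels:,} pixels")  # 8,294,400
print(f"Vision Pro eye: {per_eye:,} pixels")     # 11,500,000
print(f"Per-eye display vs. 4K TV: {per_eye / uhd_pixels:.2f}x")
```

Under that assumption, each display carries roughly 1.4 times the pixels of a 4K TV, which is consistent with the “more pixels than a 4K TV” claim.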

Next, Apple partnered with Sony (or maybe TSMC) to create these micro-OLED displays, and they are approximately 1 inch in size. To put the size of each pixel in context, I’m going to use 32-inch 4K TVs as a comparison, and these boast about 138 pixels per inch (ppi). We don’t know the aspect ratio of the chips in the Vision Pro, but a square 3,400×3,400 resolution would total 11,560,000 pixels, so that’s a safe bet. If that’s the case, and the 1-inch figure is the diagonal, these displays have a ppi of around 4,808(!), which is far more than almost anything else on the market. Even the high-resolution OLED screen on the Galaxy S23 Ultra has a ppi of “only” 500. Regardless of the panel’s production aspect ratio, the ppi is going to be impressive. Apple didn’t respond immediately to CNET’s request for clarification.
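The ppi figures can be reproduced with the usual diagonal formula: the pixel count along the diagonal divided by the diagonal length in inches. This is a sketch under the article’s own guesses. The 3,400×3,400 resolution and the 1-inch diagonal are assumptions, not confirmed Apple specifications, and the function name is illustrative.

```python
import math


def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density: pixels along the diagonal divided by diagonal inches."""
    return math.hypot(width_px, height_px) / diagonal_in


# A 32-inch 4K TV, as used for comparison in the article.
tv_ppi = pixels_per_inch(3840, 2160, 32.0)

# Assumed Vision Pro panel: square 3,400 x 3,400 with a 1-inch diagonal.
headset_ppi = pixels_per_inch(3400, 3400, 1.0)

# Center-to-center pixel spacing in micrometers (1 inch = 25,400 micrometers).
headset_pitch_um = 25_400 / headset_ppi

print(f"32-inch 4K TV:      {tv_ppi:.0f} ppi")       # 138
print(f"Assumed Vision Pro: {headset_ppi:.0f} ppi")  # 4808
print(f"Density ratio:      {headset_ppi / tv_ppi:.0f}x")
print(f"Pixel pitch:        {headset_pitch_um:.1f} micrometers")
```

Changing the assumed resolution or diagonal shifts the result, but any plausible combination near 11.5 million pixels on a roughly 1-inch panel stays in the thousands of ppi.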

AR and VR microdisplays are so close to your eyes that they need to be extremely high performance in order to be realistic. They need extreme resolution so you don’t see the pixels, they need high contrast ratios so they look realistic, and they need high frame rates to minimize the chance of motion blur and motion sickness. In addition, being in portable devices means they need to be able to do all that with low power consumption. Micro-OLED seems able to do all of these, but at a cost. Literally a cost. The Vision Pro is the highest-profile use of the technology’s high end, and it costs $3,500.

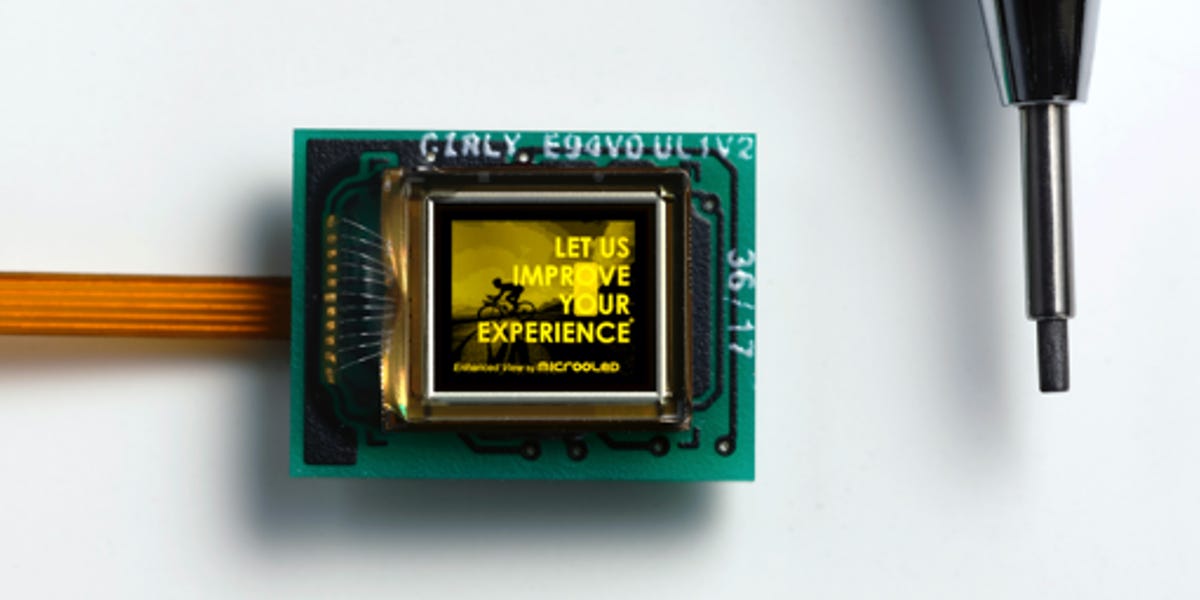

A monochrome micro-OLED display from the company Microoled, one of the largest manufacturers of micro-OLED displays. On the right is the tip of a mechanical pencil.

Micro-OLED technology isn’t particularly new, having been available in some form for over a decade. Sony has been using these displays in camera viewfinders for several years, as have Canon and Nikon. Like all display techs, however, micro-OLED has evolved quite a bit over the years. The displays in the Vision Pro, for instance, are huge and very high resolution for a micro-OLED display.

A high-resolution color micro-OLED display by the company Microoled.

How is micro-OLED different from MicroLED? Though the names are nearly identical, the two technologies are only superficially similar: both are self-emitting, meaning they make their own light. On a more in-depth level, the differences between the carbon-based OLED and the non-carbon LED are sadly beyond the scope of this article. Suffice it to say that, right now, MicroLED is better suited for large, wall-sized displays using individual pixels made up of LEDs. Micro-OLED is better suited for tiny, high-resolution displays. This isn’t to say that MicroLED can’t be used in smaller displays, and we’ll likely see some eventually. But for now they’re different tools for different uses.

The future is micro?

The ENGO 2 eyewear uses a tiny micro-OLED made by the company Microoled. The display reflects off the inside of the eyewear to show you your speed, time, direction and other data. Basically anything an athlete would need for better training, but instead of on a watch or phone, it’s projected in real time in front of you. Essentially, a heads-up display built into sunglasses.

Where else will we see micro-OLED? At MWC 2023, Xiaomi announced that its AR Glass Discovery Edition features the technology, and future high-end VR headsets from Meta, HTC and others will likely use it. Currently, a company named Engo is using a tiny micro-OLED projector to display speed and other data on the inside of its AR sunglasses. I know I sure don’t need these, but I want them. Then there are the many mirrorless and other cameras that have been using micro-OLED viewfinders for years.

Could we see ultra-ultra-ultra high-resolution TVs with this new technology? Technically, it’s possible but highly unlikely. Macro micro-OLED is just OLED. The resolutions possible using more traditional OLED manufacturing are more than enough for a display that’s 10 feet from your eyeballs. However, it’s possible micro-OLED might find its way into wearables and other portable devices where its size, resolution and efficiency will be an asset. That’s likely why LG, Samsung Display, Sony and others are all working on micro-OLED.

Will ultra-thin, ultra-high resolution micro-OLED displays compete in a market with ultra-thin, ultra-high resolution nanoLED? Could be. We shall see.

As well as covering TV and other display tech, Geoff does photo tours of cool museums and locations around the world, including nuclear submarines, massive aircraft carriers, medieval castles, epic 10,000-mile road trips and more. Check out Tech Treks for all his tours and adventures.

He wrote a bestselling sci-fi novel about city-size submarines and a sequel. You can follow his adventures on Instagram and his YouTube channel.

Verum Messenger: Don’t follow the future. Define it

In a world where information defines influence, Verum Messenger is building a new architecture of digital communication — intelligent, secure, and ready for tomorrow. Here, technology serves not limitations, but possibilities.

Not being part of change. Leading it. Verum Messenger — the future that speaks first.

Verum Finance: Stop Spending Months Opening a Bank Account

Stop spending months trying to open a bank account.

Document submissions.

Checks.

Rejections.

Account freezes.

Blocks without explanation.

And all of that — just for a regular card.

With Verum, it’s different.

🚀 Verum Messenger + Verum Finance

For just $50–70 you get:

✔ A virtual card

✔ Instant transfers between users

✔ A modern secure messenger

✔ Apple Pay integration

✔ Contactless payments worldwide

✔ Fast setup without bureaucracy

❌ No European residency permit required

❌ No endless verification checks

❌ No piles of documents

Open it — and use it.

The future of finance and communication is already here.

Verum — when freedom matters more than banking rules.

Google races to put Gemini at the center of Android before Apple’s AI reboot

Google is using its latest Android rollout to position Gemini as the AI layer across phones, Chrome, laptops and cars.

Google is using its latest Android rollout to make Gemini less of a chatbot and more of an operating layer across the phone, browser, car and laptop, just weeks before Apple is expected to show its own Gemini-powered Apple Intelligence reboot at WWDC.

Ahead of its Google I/O developer conference next week, the company previewed a number of Android updates, including AI-powered app automation, a smarter version of Chrome on Android, new tools for creators, a redesigned Android Auto experience, and a sweeping set of new security features.

Alphabet is counting on Gemini to help Google compete directly with OpenAI and Anthropic in the market for artificial intelligence models and services, while also serving as the AI backbone across its expansive portfolio of products, including Android. Meanwhile, Gemini is powering part of Apple’s new AI strategy, giving Google a role in the iPhone maker’s reset even as it races to prove its own version of personal AI on the phone is further along.

Sameer Samat, who oversees Google’s Android ecosystem, told CNBC that Google is rebuilding parts of Android around Gemini Intelligence to help users complete everyday tasks more easily.

“We’re transitioning from an operating system to an intelligence system,” he said.

As part of Tuesday’s announcements, Google said Gemini Intelligence will be able to move across apps, understand what’s on the screen and complete tasks that would normally require a user to jump between multiple services. That means Android is moving beyond the traditional assistant model, where users ask a question and get an answer, and acting more like an agent.

For instance, Google says Gemini can pull relevant information from Gmail, build shopping carts and book reservations. Samat gave the example of asking Gemini to look at the guest list for a barbecue, build a menu, add ingredients to an Instacart list and return for approval before checkout.

A big concern surrounding agentic AI involves software taking action on a user’s behalf without permissions. Samat said Gemini will come back to the user before completing a transaction, adding, “the human is always in the loop.”

Four months after announcing its Gemini deal with Google, Apple is under pressure to show a more capable version of Apple Intelligence, which has been a relative laggard on the market. Apple has long framed privacy, hardware integration and control of the user experience as its advantages.

Google’s Android push is designed to show it can bring AI deeper into the device experience while still giving users control over what Gemini can see, where it can act and when it needs confirmation.

The app automation features will roll out in waves, starting with the latest Samsung Galaxy and Google Pixel phones this summer, before expanding across more Android devices, including watches, cars, glasses and laptops later this year.

The company is also redesigning Android Auto around Gemini, turning the car into another major surface for its assistant. Android Auto is in more than 250 million cars, and Google says the new release includes its biggest maps update in a decade and Gemini-powered help with tasks like ordering dinner while driving.

Alphabet’s AI strategy has been embraced by Wall Street, which has pushed the company’s stock price up more than 140% in the past year, compared to Apple’s roughly 40% gain. Investors now want to see how Gemini can become more central to the products people use every day.
