Technologies

Apple, iPhones, photos and child safety: What’s happening and should you be concerned?

The tech giant’s built new systems to fight child exploitation and abuse, but security advocates worry it could erode our privacy. Here’s why.

Apple’s long presented itself as a bastion of security, and as one of the only tech companies that truly cares about user privacy. But a new technology designed to help an iPhone, iPad or Mac computer detect child exploitation images and videos stored on those devices has ignited a fierce debate about the truth behind Apple’s promises.

On Aug. 5, Apple announced a new feature being built into the upcoming iOS 15, iPadOS 15, WatchOS 8 and MacOS Monterey software updates designed to detect if someone has child exploitation images or videos stored on their device. It’ll do this by converting images into unique bits of code, known as hashes, based on what they depict. The hashes are then checked against a database of known child exploitation content that’s managed by the National Center for Missing and Exploited Children. If a certain number of matches is found, Apple is alerted and may investigate further.
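To make the matching step concrete, here is a minimal sketch of threshold-based hash matching. It is an illustration only, not Apple's implementation: the function names, the sample data and the threshold values are all hypothetical, and SHA-256 is used as a simple stand-in that only matches byte-identical files, whereas the article describes hashes derived from what an image depicts.

```python
import hashlib

def image_hash(image_bytes: bytes) -> str:
    """Return a hash for an image.

    Assumption: SHA-256 is a stand-in. It matches only identical bytes,
    unlike a hash based on what the image depicts.
    """
    return hashlib.sha256(image_bytes).hexdigest()

def count_matches(images, known_hashes):
    """Count how many images hash to a value in the known-content set."""
    return sum(1 for img in images if image_hash(img) in known_hashes)

def should_alert(images, known_hashes, threshold):
    """Raise an alert only once the number of matches reaches the threshold."""
    return count_matches(images, known_hashes) >= threshold

# Hypothetical data: two "known" items and a four-item photo library.
known = {image_hash(b"known-1"), image_hash(b"known-2")}
library = [b"holiday", b"known-1", b"cat", b"known-2"]

print(count_matches(library, known))
print(should_alert(library, known, threshold=3))
print(should_alert(library, known, threshold=2))
```

The point of the threshold is that a single match on its own does not trigger anything; only an accumulation of matches does.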

Apple said it developed this system to protect people’s privacy, performing scans on the phone and only raising alarms if a certain number of matches are found. But privacy experts, who agree that fighting child exploitation is a good thing, worry that Apple’s moves open the door to wider uses that could, for example, put political dissidents and other innocent people in harm’s way.

“Even if you believe Apple won’t allow these tools to be misused there’s still a lot to be concerned about,” tweeted Matthew Green, a professor at Johns Hopkins University who’s worked on cryptographic technologies.

Apple’s new feature, and the concern that’s sprung up around it, represent an important debate about the company’s commitment to privacy. Apple has long promised that its devices and software are designed to protect their users’ privacy. The company even dramatized that with an ad it hung just outside the convention hall of the 2019 Consumer Electronics Show, which said “What happens on your iPhone stays on your iPhone.”

“We at Apple believe privacy is a fundamental human right,” Apple CEO Tim Cook has often said.

Apple’s scanning technology is part of a trio of new features the company’s planning for this fall. Apple also is enabling its Siri voice assistant to offer links and resources to people it believes may be in a serious situation, such as a child in danger. Advocates had been asking for that type of feature for a while.

It is also adding a feature to its Messages app to proactively protect children from explicit content, whether it’s in a green-bubble SMS conversation or a blue-bubble encrypted iMessage chat. This new capability is specifically designed for devices registered under a child’s iCloud account and will warn if it detects an explicit image being sent or received. As with Siri, the app will also offer links and resources if needed.

There’s a lot of nuance involved here, which is part of why Apple took the unusual step of releasing research papers, frequently asked questions and other information ahead of the planned launch.

Here’s everything you should know:

Why is Apple doing this now?

The tech giant said it’s been trying to find a way to help stop child exploitation for a while. The National Center for Missing and Exploited Children received more than 65 million reports of child exploitation material last year. Apple said that’s way up from the 401 reports it received 20 years ago.

“We also know that the 65 million files that were reported is only a small fraction of what is in circulation,” said Julie Cordua, head of Thorn, a nonprofit fighting child exploitation that supports Apple’s efforts. She added that US law requires tech companies to report exploitative material if they find it, but it does not compel them to search for it.

Other companies do actively search for such photos and videos. Facebook, Microsoft, Twitter, and Google (and its YouTube subsidiary) all use various technologies to scan their systems for any potentially illegal uploads.

What makes Apple’s system unique is that it’s designed to scan our devices, rather than the information stored on the company’s servers.

The hash scanning system will only be applied to photos stored in iCloud Photo Library, which is a photo syncing system built into Apple devices. It won’t hash images and videos stored in the photos app of a phone, tablet or computer that isn’t using iCloud Photo Library. So, in a way, people can opt out if they choose not to use Apple’s iCloud photo syncing services.

Could this system be abused?

The question at hand isn’t whether Apple should do what it can to fight child exploitation. It’s whether Apple should use this method.

The slippery slope concern privacy experts have raised is whether Apple’s tools could be twisted into surveillance technology against dissidents. Imagine if the Chinese government were able to somehow secretly add data corresponding to the famously suppressed Tank Man photo from the 1989 pro-democracy protests in Tiananmen Square to Apple’s child exploitation content system.

Apple said it designed features to keep that from happening. The system doesn’t scan photos, for example; it checks for matches between hash codes. The hash database is also stored on the phone, not in a database sitting on the internet. Apple also noted that because the scans happen on the device, security researchers can audit the way it works more easily.

Is Apple rummaging through my photos?

We’ve all seen some version of it: the baby-in-the-bathtub photo. My parents had some of me, I have some of my kids, and it was even a running gag in the 2017 DreamWorks animated comedy The Boss Baby.

Apple says those images shouldn’t trip up its system. Because Apple’s system converts our photos to these hash codes, and then checks them against a known database of child exploitation videos and photos, the company isn’t actually scanning our stuff. The company said the likelihood of a false positive is less than one in 1 trillion per year.

“In addition, any time an account is flagged by the system, Apple conducts human review before making a report to the National Center for Missing and Exploited Children,” Apple wrote on its site. “As a result, system errors or attacks will not result in innocent people being reported to NCMEC.”

Is Apple reading my texts?

Apple isn’t applying its hashing technology to our text messages. That, effectively, is a separate system. Instead, with text messages, Apple only alerts a user who’s marked as a child in their iCloud account when they’re about to send or receive an explicit image. The child can still view the image, and if they do, a parent will be alerted.

“The feature is designed so that Apple does not get access to the messages,” Apple said.

What does Apple say?

Apple maintains that its system is built with privacy in mind, with safeguards to keep Apple from knowing the contents of our photo libraries and to minimize the risk of misuse.

“At Apple, our goal is to create technology that empowers people and enriches their lives, while helping them stay safe,” Apple said in a statement. “We want to protect children from predators who use communication tools to recruit and exploit them, and limit the spread of Child Sexual Abuse Material.”

Stuck in a Coffee Rut? ChatGPT Can Now Plan Your Next Starbucks Order

Don’t be surprised if the chatbot suggests mixing espresso with lemonade.

If you like getting your daily cup of coffee from Starbucks, you’ll now be able to consult with ChatGPT for your next beverage. Starbucks said on Wednesday that a new Starbucks app in ChatGPT, now in beta, will help you figure out your next order based on your mood or craving in the moment.

Although you won’t be able to order your Starbucks coffee directly through the ChatGPT app, it will suggest drinks and menu items you may enjoy, then direct you to the Starbucks app or website to complete your order.

OpenAI has added a host of other apps you can interact with in ChatGPT since announcing the functionality last year. You can do everything from browsing home listings to designing playlists without leaving the chatbot interface.

You’ll be able to use prompts like, “@Starbucks, I want something bright to start my morning,” or upload an image to describe your mood and location. Once the menu suggestion appears in ChatGPT, you can start the order through the chatbot and then complete it in the Starbucks app or online.

Paul Riedel, senior vice president of digital and loyalty at Starbucks, said in a statement that Starbucks noticed customers weren’t always starting off by looking at the menu. “They’re starting with a feeling,” he said. “We wanted to meet customers right in that moment of inspiration and make it easier than ever to find a drink that fits.”

Starbucks said interacting with ChatGPT lets you personalize your order more and discover menu options you never considered before.

(Disclosure: Ziff Davis, CNET’s parent company, in 2025 filed a lawsuit against OpenAI, alleging it infringed Ziff Davis copyrights in training and operating its AI systems.)

When I tried out the new feature, I asked it about the oddest beverage combinations you can get at Starbucks. One interesting combo ChatGPT came up with was espresso with lemonade. The AI described another drink as “basically liquid dessert soup,” if that’s more up your alley.

Today’s NYT Connections: Sports Edition Hints and Answers for April 16, #570

Here are hints and the answers for the NYT Connections: Sports Edition puzzle for April 16, No. 570.

Looking for the most recent regular Connections answers? Click here for today’s Connections hints, as well as our daily answers and hints for The New York Times Mini Crossword, Wordle and Strands puzzles.

Today’s Connections: Sports Edition is a fun one, especially if you enjoy unusual team names. If you’re struggling with today’s puzzle but still want to solve it, read on for hints and the answers.

Connections: Sports Edition is published by The Athletic, the subscription-based sports journalism site owned by The Times. It doesn’t appear in the NYT Games app, but it does in The Athletic’s own app. Or you can play it for free online.

Hints for today’s Connections: Sports Edition groups

Here are four hints for the groupings in today’s Connections: Sports Edition puzzle, ranked from the easiest yellow group to the tough (and sometimes bizarre) purple group.

Yellow group hint: Put your glasses on for this.

Green group hint: Hoops home.

Blue group hint: The minors.

Purple group hint: Hidden hoops word.

Answers for today’s Connections: Sports Edition groups

Yellow group: Look at.

Green group: Seen at an NBA court.

Blue group: Double-A baseball teams.

Purple group: Starts with a WNBA team.

What are today’s Connections: Sports Edition answers?

The yellow words in today’s Connections

The theme is look at. The four answers are observe, spectate, view and watch.

The green words in today’s Connections

The theme is seen at an NBA court. The four answers are benches, half-court logo, scorer’s table and shot clock.

The blue words in today’s Connections

The theme is double-A baseball teams. The four answers are Biscuits, Drillers, Trash Pandas and Wind Surge.

The purple words in today’s Connections

The theme is starts with a WNBA team. The four answers are dreamy, firefly, Skype and sundial.

Technologies

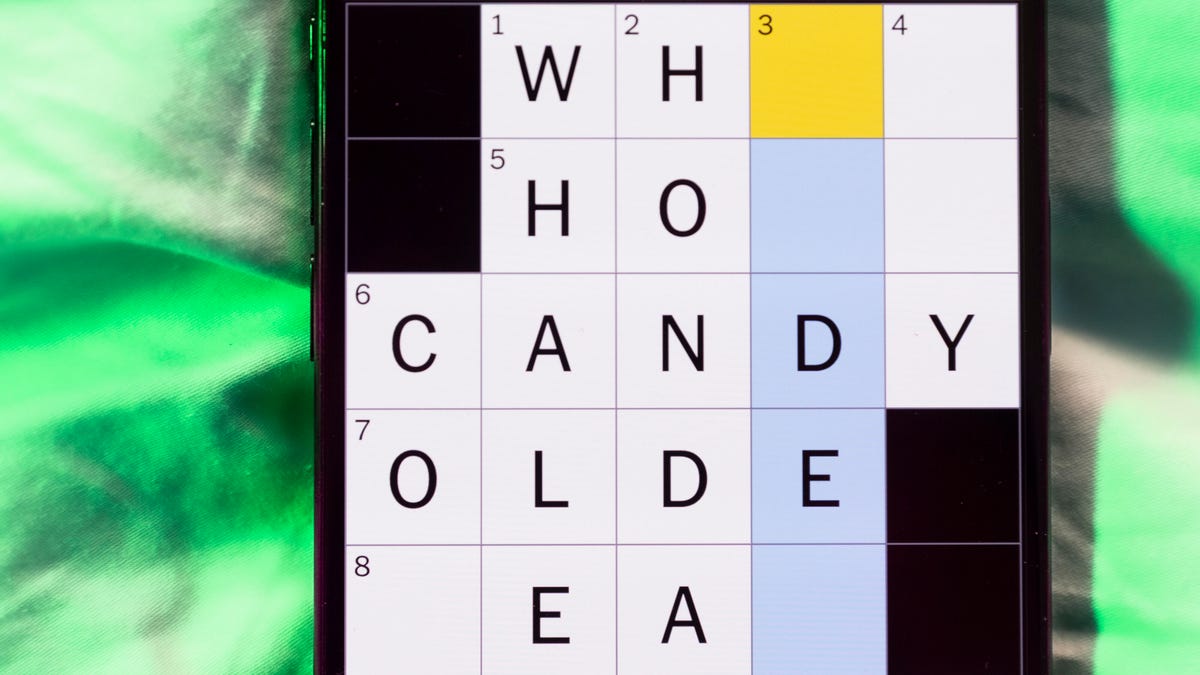

Today’s NYT Mini Crossword Answers for Thursday, April 16

Here are the answers for The New York Times Mini Crossword for April 16.

Looking for the most recent Mini Crossword answer? Click here for today’s Mini Crossword hints, as well as our daily answers and hints for The New York Times Wordle, Strands, Connections and Connections: Sports Edition puzzles.

Need some help with today’s Mini Crossword? It’s pretty simple, but 1-Across is a bit tricky. Read on for all the answers. And if you could use some hints and guidance for daily solving, check out our Mini Crossword tips.

If you’re looking for today’s Wordle, Connections, Connections: Sports Edition and Strands answers, you can visit CNET’s NYT puzzle hints page.

Let’s get to those Mini Crossword clues and answers.

Mini across clues and answers

1A clue: Bow ties and ribbons that you can’t wear?

Answer: PASTA

6A clue: Opposite of lower

Answer: UPPER

7A clue: Flappable origami creation

Answer: CRANE

8A clue: Where the Hangul alphabet is used

Answer: KOREA

9A clue: Apparatus under a trapeze

Answer: NET

Mini down clues and answers

1D clue: Disc dropped on center ice

Answer: PUCK

2D clue: One might read “Kiss the Chef”

Answer: APRON

3D clue: Unlikely outcome after a 7-10 split

Answer: SPARE

4D clue: Fundamental belief

Answer: TENET

5D clue: Bay ___ (part of California)

Answer: AREA
