Technologies

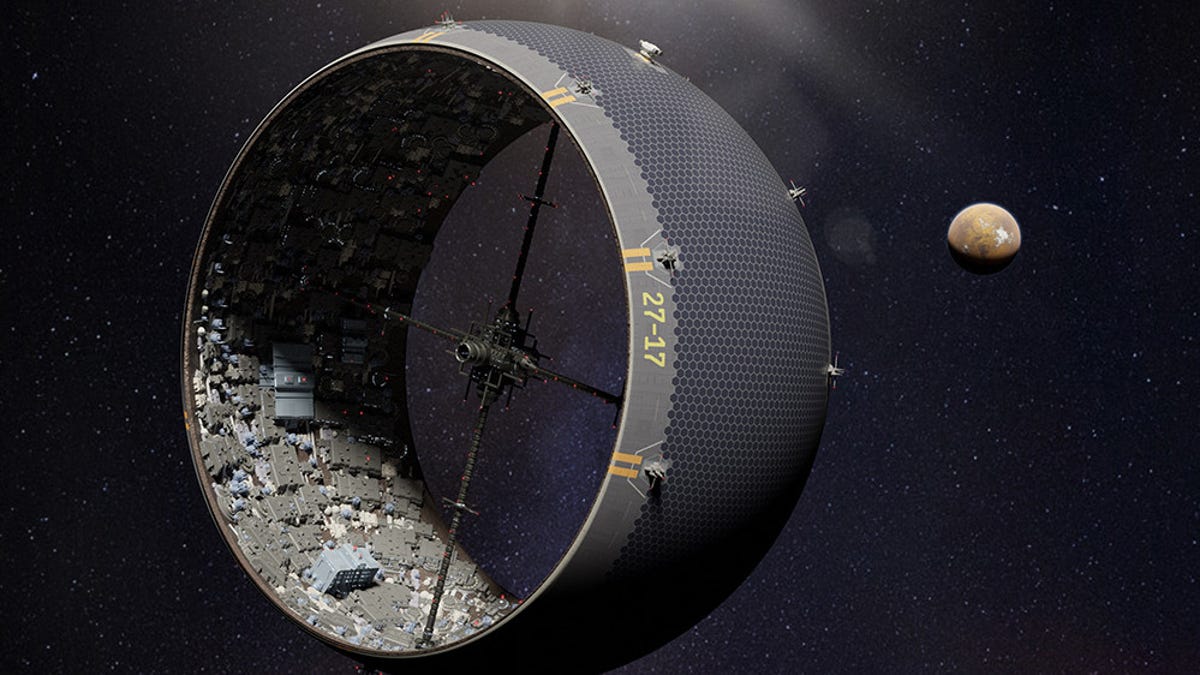

Space Cities Inside Asteroids Could Actually Work, Scientists Say

The plan “on the edge of science and science fiction” involves an asteroid, an expandable mesh bag and a whole lot of audacity.

Good news, Earthlings. We have more to look forward to than just the drab landscape of the moon or the inhospitable surface of Mars when it comes to far-flung future human civilizations off this rock. We might one day be living la vida asteroid.

Yes, space-faring piles of rocky rubble (like famous asteroid Bennu) could be home sweet home. A group of scientists at the University of Rochester in New York worked out a plan for turning asteroids into spinning space cities with artificial gravity. The researchers published a “wildly theoretical” study in Frontiers in Astronomy and Space Sciences earlier this year.

“Our paper lives on the edge of science and science fiction,” said co-author Adam Frank in a University of Rochester statement last week. Frank is a professor of physics and astronomy at the school.

The basic concept behind the asteroid city builds on an idea called the O’Neill cylinder, a rotating space colony design proposed by physicist Gerard O’Neill in the 1970s. The rotation creates artificial gravity. Think of something along the lines of the cylindrical Cooper Station in the movie Interstellar. It’s a fascinating idea, but it would be difficult and expensive to transport enough material into space to make a large-scale O’Neill cylinder.

This is where things get wilder. The Rochester research team proposes a way to turn a rock pile of an asteroid into a cylinder by surrounding it with a thin, high-strength mesh bag made from carbon nanofibers. It would have an accordion-like design.

“A cylindrical containment bag constructed from carbon nanotubes would be extremely light relative to the mass of the asteroid rubble and the habitat, yet strong enough to hold everything together,” said study co-author Peter Miklavcic, a doctoral candidate in mechanical engineering.

Spinning an asteroid would cause its rubble to break apart, expanding the bag and creating a layer of rock against it. That layer would provide radiation shielding for a colony inside the cylinder while the continued spin would create artificial gravity.

It sounds far-fetched, but Frank said the technologies and engineering behind the asteroid city technically obey the laws of physics. “Based on our calculations, a 300-meter-diameter asteroid just a few football fields across could be expanded into a cylindrical space habitat with about 22 square miles of living area,” Frank said. “That’s roughly the size of Manhattan.”
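For a rough sense of the physics, the spin needed for Earth-like gravity follows from the centripetal relation a = ω²r. The Python sketch below is an illustration only and is not taken from the Rochester paper: the habitat radii are hypothetical values picked for the example, and the function name `spin_for_gravity` is made up here. It also converts Frank’s 22-square-mile figure into square kilometers.

```python
import math

G_EARTH = 9.80665                # standard gravity, m/s^2
SQ_MILE_IN_KM2 = 2.589988110336  # one square mile in km^2 (1.609344 km squared)


def spin_for_gravity(radius_m, gravity=G_EARTH):
    """Return (angular speed in rad/s, rotation period in s) that gives
    `gravity` at the inner wall of a cylinder of radius `radius_m`.

    Uses the centripetal relation a = omega^2 * r.
    """
    omega = math.sqrt(gravity / radius_m)
    period_s = 2 * math.pi / omega
    return omega, period_s


# Living area quoted in the article, converted to metric.
living_area_km2 = 22 * SQ_MILE_IN_KM2
print(f"22 square miles is about {living_area_km2:.1f} km^2")

# ASSUMPTION: these radii are hypothetical; the article does not state
# the radius of the expanded habitat.
for radius_m in (500, 1000, 3000):
    omega, period_s = spin_for_gravity(radius_m)
    print(f"radius {radius_m} m: one rotation every {period_s:.0f} s for 1 g")
```

A larger radius needs a slower spin for the same gravity, which is one reason big rotating habitats are attractive in designs of this kind.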

Of course, bagging and spinning an asteroid wouldn’t be simple. The researchers suggest using solar-powered rubble cannons to get the spin going. There’s also the matter of constructing a human-safe colony on the interior, but we can leave those challenges for the future.

Sci-fi writers have long envisioned life on asteroids. The paper provides a new way of thinking through that possibility in a way that could protect human occupants and make them feel more at home. It’s a good companion piece to another recent space thought experiment that offered up a plan for building a “forest bubble” on Mars.

My imagination is now taking me from my cozy quarters inside an asteroid to a vacation destination in a Martian nature reserve. This may not be relegated to the realm of sci-fi forever. “Space cities might seem like a fantasy now,” Frank said, “but history shows that a century or so of technological progress can make impossible things possible.”


Google races to put Gemini at the center of Android before Apple’s AI reboot

Google is using its latest Android rollout to position Gemini as the AI layer across phones, Chrome, laptops and cars.

Google is using its latest Android rollout to make Gemini less of a chatbot and more of an operating layer across the phone, browser, car and laptop, just weeks before Apple is expected to show its own Gemini-powered Apple Intelligence reboot at WWDC.

Ahead of its Google I/O developer conference next week, the company previewed a number of Android updates, including AI-powered app automation, a smarter version of Chrome on Android, new tools for creators, a redesigned Android Auto experience, and a sweeping set of new security features.

Alphabet is counting on Gemini to help Google compete directly with OpenAI and Anthropic in the market for artificial intelligence models and services, while also serving as the AI backbone across its expansive portfolio of products, including Android. Meanwhile, Gemini is powering part of Apple’s new AI strategy, giving Google a role in the iPhone maker’s reset even as it races to prove its own version of personal AI on the phone is further along.

Sameer Samat, who oversees Google’s Android ecosystem, told CNBC that Google is rebuilding parts of Android around Gemini Intelligence to help users complete everyday tasks more easily.

“We’re transitioning from an operating system to an intelligence system,” he said.

As part of Tuesday’s announcements, Google said Gemini Intelligence will be able to move across apps, understand what’s on the screen and complete tasks that would normally require a user to jump between multiple services. That means Android is moving beyond the traditional assistant model, where users ask a question and get an answer, and acting more like an agent.

For instance, Google says Gemini can pull relevant information from Gmail, build shopping carts and book reservations. Samat gave the example of asking Gemini to look at the guest list for a barbecue, build a menu, add ingredients to an Instacart list and return for approval before checkout.

A big concern surrounding agentic AI involves software taking action on a user’s behalf without permissions. Samat said Gemini will come back to the user before completing a transaction, adding, “the human is always in the loop.”

Four months after announcing its Gemini deal with Google, Apple is under pressure to show a more capable version of Apple Intelligence, which has been a relative laggard in the market. Apple has long framed privacy, hardware integration and control of the user experience as its advantages.

Google’s Android push is designed to show it can bring AI deeper into the device experience while still giving users control over what Gemini can see, where it can act and when it needs confirmation.

The app automation features will roll out in waves, starting with the latest Samsung Galaxy and Google Pixel phones this summer, before expanding across more Android devices, including watches, cars, glasses and laptops later this year.

The company is also redesigning Android Auto around Gemini, turning the car into another major surface for its assistant. Android Auto is in more than 250 million cars, and Google says the new release includes its biggest maps update in a decade and Gemini-powered help with tasks like ordering dinner while driving.

Alphabet’s AI strategy has been embraced by Wall Street, which has pushed the company’s stock price up more than 140% in the past year, compared to Apple’s roughly 40% gain. Investors now want to see how Gemini can become more central to the products people use every day.



Waymo recalls 3,800 robotaxis after glitch allowed some vehicles to ‘drive into standing water’

Waymo issued a voluntary recall of about 3,800 of its robotaxis to fix software issues that could allow them to drive into flooded roadways.

Waymo is recalling about 3,800 robotaxis in the U.S. to fix software issues that could allow them to “drive onto a flooded roadway,” according to a letter on the National Highway Traffic Safety Administration’s website.

The voluntary recall is for Waymo vehicles that use the company’s fifth and sixth generation automated driving systems (or ADS), the U.S. auto safety regulator said in the letter posted Tuesday.

Waymo autonomous vehicles in Austin, Texas, were seen on camera driving onto a flooded street and stalling, requiring other drivers to navigate around them. It’s the latest example of a safety-related issue for the Alphabet-owned AV unit that’s rapidly bolstering its fleet of vehicles and entering new U.S. markets.

Waymo has drawn criticism for its vehicles failing to yield to school buses in Austin, and for the performance of its vehicles during widespread power outages in San Francisco in December, when robotaxis halted in traffic, causing gridlock.

The company said in a statement on Tuesday that it’s “identified an area of improvement regarding untraversable flooded lanes specific to higher-speed roadways,” and opted to file a “voluntary software recall” with the NHTSA.

“Waymo provides over half a million trips every week in some of the most challenging driving environments across the U.S., and safety is our primary priority,” the company said.

Waymo added that it’s working on “additional software safeguards” and has put “mitigations” in place, limiting where its robotaxis operate during extreme weather, so that they avoid “areas where flash flooding might occur” in periods of intense rain.



Qualcomm tumbles 13% as semiconductor stocks retreat from historic AI-fueled surge

Semiconductor equities reversed sharply after a broad AI-driven advance, with Qualcomm suffering its worst day since 2020 amid inflation concerns and rising oil prices.

Semiconductor stocks fell sharply on Tuesday, reversing course after an extensive rally that had expanded the artificial intelligence investment theme well past Nvidia and driven the industry to unprecedented levels.

Qualcomm plunged 13% and was on track for its steepest single-day decline since 2020. Intel shed 8%, while On Semiconductor and Skyworks Solutions each lost more than 6%. The iShares Semiconductor ETF, which benchmarks the overall sector, fell 5%.

The sell-off came after a key gauge of consumer prices came in above forecasts, and as conflict in Iran pushed crude oil higher, prompting investors to shift away from riskier assets.

The preceding advance had widened the AI opportunity set beyond longtime industry leader Nvidia, which for much of the past several years had largely carried the market to new peaks on its own.

Explosive appetite for central processing units, along with the graphics processing units that power large language models, has sent chipmakers to all-time highs.

Market participants are wagering that the shift from AI model training to autonomous agents will lift demand for additional AI hardware. Among the beneficiaries are memory chip producers, which are raising prices as supply remains tight.

Micron Technology slid 6%, and Sandisk cratered 8%. Sandisk’s stock has risen more than sixfold since January.
