Technologies

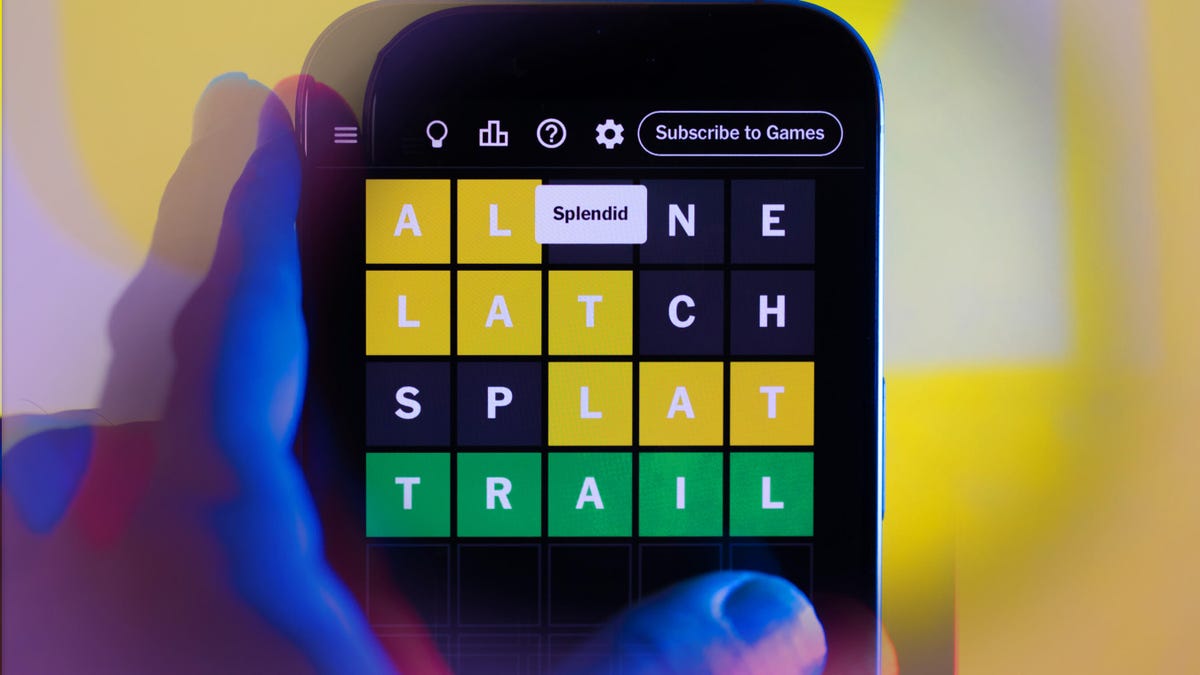

Was April the Toughest Month Ever for Wordle? Who Guesses X and Z?

New York Times puzzle-solvers faced some tough challenges in April.

New York Times puzzlemakers, what was that about? Was it just me, or was April 2025 one of your toughest months ever — for all your games, maybe, but especially for Wordle? CNET publishes the daily answers for Wordle, Connections, Strands, Connections: Sports Edition and the Mini Crossword, and I’ve seen some doozies. But April Wordle broke my streak twice. Maybe more than that. I’m not counting anymore.

(Spoilers for past puzzles ahead.)

If you play Wordle, you probably have your own starter words all lined up. I almost always begin with TRAIN and CLOSE. I’m not the kind of person who just looks around the room, sees a chair and plays that word. I play those words because I know, from a list I made for CNET and based on research from the Oxford English Dictionary, that TRAIN and CLOSE contain some of the most popular letters used in English.

This month’s Wordle answers included OZONE, with a Z, which ranks 24th out of 26 letters in popularity, smack in the middle, and three vowels that I just couldn’t place in the right spots.

But even tougher might have been INBOX on April 19. Three letters — Z, J and Q — are used in English less than X (J? Why J?). But somehow, few letters come less frequently to my mind than X.
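For anyone who wants to check the letter math, here is a minimal Python sketch. The `ORDER` string is an assumption: it follows the commonly cited Concise Oxford Dictionary letter-frequency ranking rather than anything printed in this story, though its last four letters (X, Z, J, Q) match the ranking described above. The helper names `letter_ranks` and `rarest_letter` are made up for illustration.

```python
# Letters from most to least common. ASSUMPTION: this order follows the
# commonly cited Concise Oxford Dictionary analysis, not data from this story.
ORDER = "EARIOTNSLCUDPMHGBFYWKVXZJQ"
RANK = {letter: position + 1 for position, letter in enumerate(ORDER)}


def letter_ranks(word):
    """Return (letter, rank) pairs for each distinct letter, in word order."""
    distinct = []
    for ch in word.upper():
        if ch not in distinct:
            distinct.append(ch)
    return [(ch, RANK[ch]) for ch in distinct]


def rarest_letter(word):
    """Return the (letter, rank) pair with the highest (least popular) rank."""
    return max(letter_ranks(word), key=lambda pair: pair[1])


for word in ("TRAIN", "CLOSE", "OZONE", "INBOX"):
    print(word, letter_ranks(word), "rarest:", rarest_letter(word))
```

Under that assumed ordering, TRAIN and CLOSE between them cover the ten most common letters, while OZONE and INBOX each carry a letter from the bottom four.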

And April ended on a tough note, too, with Wednesday’s puzzle answer being IDLER. I mean, I know “idle” describes someone who’s lazy or avoiding work. But I don’t think I’ve ever pulled that out as an insult, and I sure didn’t see the letter pattern popping up.

No one wants an easy puzzle, but April seemed especially brain-busting. There’s good news, though. It’s gonna be May! And there’s bad news. The May 1 Wordle is a stumper too. Happy solving!

Today’s NYT Strands Hints, Answers and Help for Oct. 23 #599

Here are hints and answers for the NYT Strands puzzle for Oct. 23, No. 599.

Looking for the most recent Strands answer? Click here for our daily Strands hints, as well as our daily answers and hints for The New York Times Mini Crossword, Wordle, Connections and Connections: Sports Edition puzzles.

Today’s NYT Strands puzzle might be Halloween-themed, as the answers are all rather dangerous. Some of them are a bit tough to unscramble, so if you need hints and answers, read on.

I go into depth about the rules for Strands in this story.

If you’re looking for today’s Wordle, Connections and Mini Crossword answers, you can visit CNET’s NYT puzzle hints page.

Hint for today’s Strands puzzle

Today’s Strands theme is: Please don’t eat me!

If that doesn’t help you, here’s a clue: Remember Mr. Yuk?

Clue words to unlock in-game hints

Your goal is to find hidden words that fit the puzzle’s theme. If you’re stuck, find any words you can. Every time you find three words of four letters or more, Strands will reveal one of the theme words. These are the words I used to get those hints, but any words of four or more letters that you find will work:

- POND, NOON, NODE, BALE, SOCK, LOVE, LOCK, MOCK, LEER, REEL, GLOVE, DAIS, LEAN, LEAD
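The hint rule described above comes down to simple counting. Here is a rough Python sketch of it. The function name `hints_earned` and its parameters are hypothetical, and treating a repeated word as a single find is an assumption about how the game behaves, not something confirmed here.

```python
def hints_earned(found_words, min_len=4, words_per_hint=3):
    """Count hints unlocked by clue words, per the rule described above.

    ASSUMPTION: a word found twice only counts once.
    """
    valid = {word.upper() for word in found_words if len(word) >= min_len}
    return len(valid) // words_per_hint


# The clue words listed above.
clue_words = [
    "POND", "NOON", "NODE", "BALE", "SOCK", "LOVE", "LOCK",
    "MOCK", "LEER", "REEL", "GLOVE", "DAIS", "LEAN", "LEAD",
]
print(hints_earned(clue_words))
```

Words shorter than four letters are ignored, so a find like CAT would not move the count.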

Answers for today’s Strands puzzle

These are the answers that tie into the theme. The goal of the puzzle is to find them all, including the spangram, a theme word that reaches from one side of the puzzle to the other. When you have all of them (I originally thought there were always eight but learned that the number can vary), every letter on the board will be used. Here are the nonspangram answers:

- AZALEA, HEMLOCK, FOXGLOVE, OLEANDER, BELLADONNA

Today’s Strands spangram

Today’s Strands spangram is POISONOUS. To find it, look for the P that is the first letter on the far left of the top row, and wind down and across.

Today’s NYT Connections: Sports Edition Hints and Answers for Oct. 23, #395

Here are hints and the answers for the NYT Connections: Sports Edition puzzle for Oct. 23, No. 395.

Looking for the most recent regular Connections answers? Click here for today’s Connections hints, as well as our daily answers and hints for The New York Times Mini Crossword, Wordle and Strands puzzles.

Today’s Connections: Sports Edition has one of those crazy purple categories, where you wonder if anyone saw the connection, or if people just put that grouping together because only those four words were left. If you’re struggling but still want to solve it, read on for hints and the answers.

Connections: Sports Edition is published by The Athletic, the subscription-based sports journalism site owned by The Times. It doesn’t show up in the NYT Games app but appears in The Athletic’s own app. Or you can play it for free online.

Hints for today’s Connections: Sports Edition groups

Here are four hints for the groupings in today’s Connections: Sports Edition puzzle, ranked from the easiest yellow group to the tough (and sometimes bizarre) purple group.

Yellow group hint: Fan noise.

Green group hint: Strategies for hoops.

Blue group hint: Minor league.

Purple group hint: Look for a connection to hoops.

Answers for today’s Connections: Sports Edition groups

Yellow group: Sounds from the crowd.

Green group: Basketball offenses.

Blue group: Triple-A baseball teams.

Purple group: Ends with a basketball stat.

What are today’s Connections: Sports Edition answers?

The yellow words in today’s Connections

The theme is sounds from the crowd. The four answers are boo, cheer, clap and whistle.

The green words in today’s Connections

The theme is basketball offenses. The four answers are motion, pick and roll, Princeton and triangle.

The blue words in today’s Connections

The theme is Triple-A baseball teams. The four answers are Aces, Jumbo Shrimp, Sounds and Storm Chasers.

The purple words in today’s Connections

The theme is ends with a basketball stat. The four answers are afoul, bassist, counterpoint and sunblock.
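If the purple connection is hard to see, a short Python sketch can spell it out. The `STATS` tuple is an illustrative, incomplete list of basketball stat names chosen for this example, and `hidden_stat` is a hypothetical helper, not part of any puzzle tool.

```python
# ASSUMPTION: a small, illustrative set of basketball stat names.
STATS = ("assist", "block", "foul", "point", "rebound", "steal")


def hidden_stat(word):
    """Return the stat a word ends with, or None if there isn't one."""
    word = word.lower()
    for stat in STATS:
        # Require extra letters in front so a bare stat name doesn't match.
        if word.endswith(stat) and word != stat:
            return stat
    return None


for answer in ("afoul", "bassist", "counterpoint", "sunblock"):
    print(answer, "->", hidden_stat(answer))
```

Each of the four purple answers ends in one of those stat names, while a yellow word such as "whistle" does not.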

Amazon’s Delivery Drivers Will Soon Wear AI Smart Glasses to Work

The goal is to streamline the delivery process while keeping drivers safe.

Amazon announced on Wednesday that it is developing new AI-powered smart glasses to simplify the delivery experience for its drivers. CNET smart glasses expert Scott Stein mentioned this wearable rollout last month, and now the plan is in its final testing stages.

The goal is to simplify package delivery by reducing the need for drivers to look back and forth between their phones, the label on the package they’re delivering and their surroundings to find the correct address.

A heads-up display will activate as soon as the driver parks, pointing out potential hazards and tasks that must be completed. From there, drivers can locate and scan packages, follow turn-by-turn directions and snap a photograph to prove delivery completion without needing to take out their phone.

The company is testing the glasses in select North American markets.

A representative for Amazon didn’t immediately respond to a request for comment.

To fight battery drain, the glasses pair with a controller attached to the driver’s delivery vest, which lets drivers swap out depleted batteries and access operational controls. The glasses will support a driver’s eyeglass prescription. An emergency button will be within reach to ensure the driver’s safety.

Amazon is already planning future versions of the glasses, which will feature “real-time defect detection,” notifying the driver if a package was delivered to the incorrect address. The company also plans to add features that detect pets in the yard and adjust to low light.
